The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

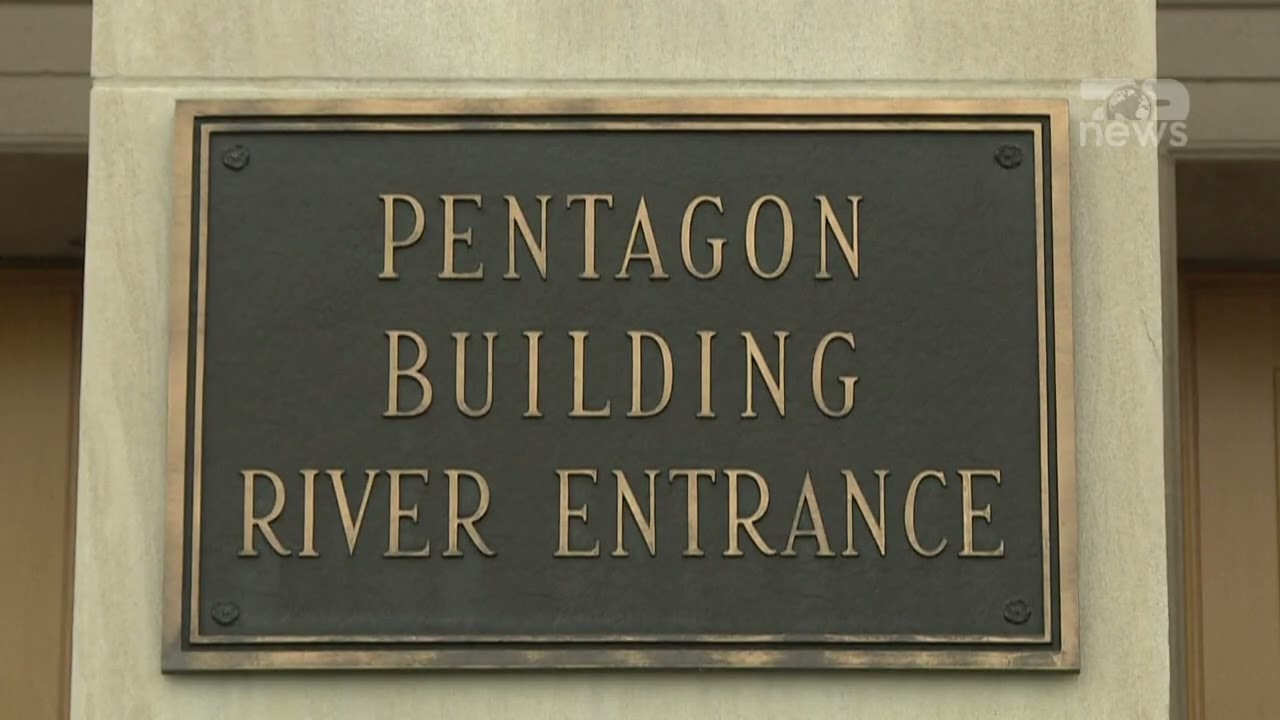

A mass online boycott campaign, "QuitGPT," has mobilized over 1.5 million people to protest OpenAI's agreement with the U.S. Pentagon to deploy AI models in classified military networks. The campaign highlights public fears of potential misuse, such as autonomous weapons and mass surveillance, and follows Anthropic's refusal to grant similar access. [AI generated]