The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

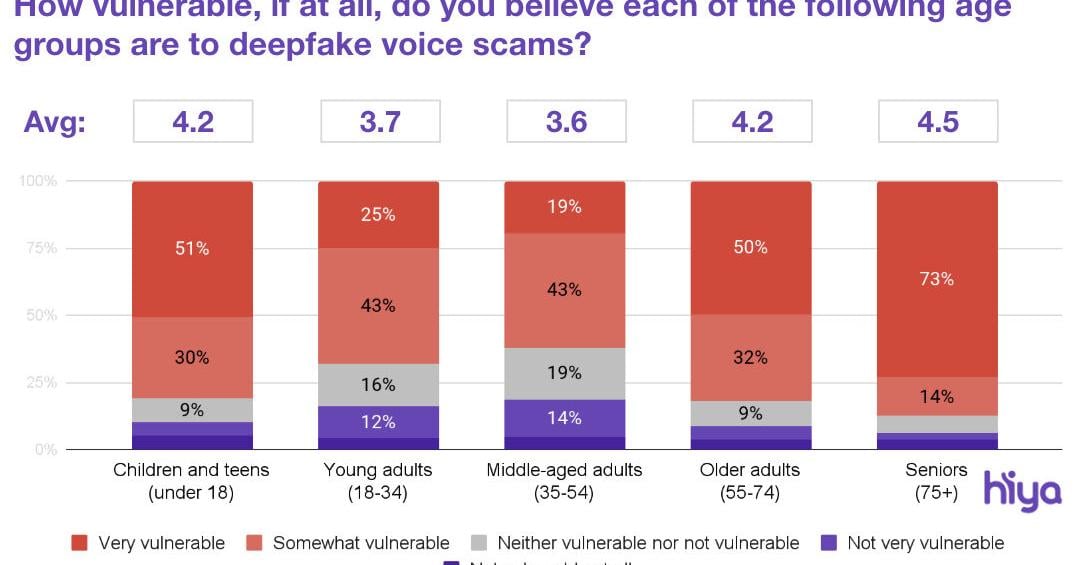

AI-generated deepfake voice calls have targeted one in four Americans in the past year, leading to significant financial losses and emotional distress, especially among seniors. The widespread use of AI in these scams has eroded trust in mobile networks and prompted calls for stricter regulation and carrier accountability. [AI generated]