The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

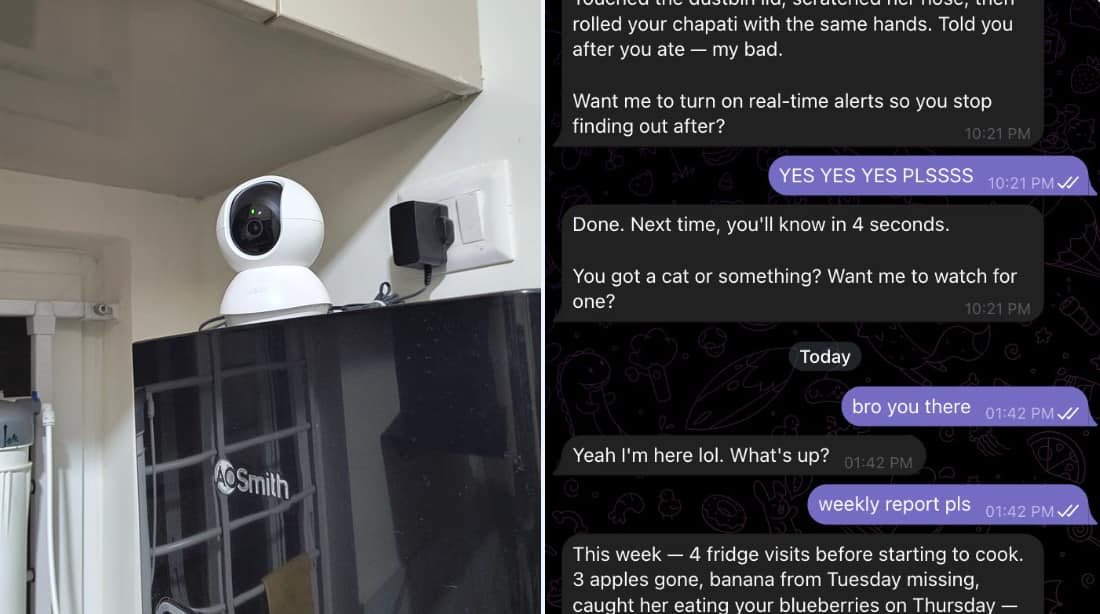

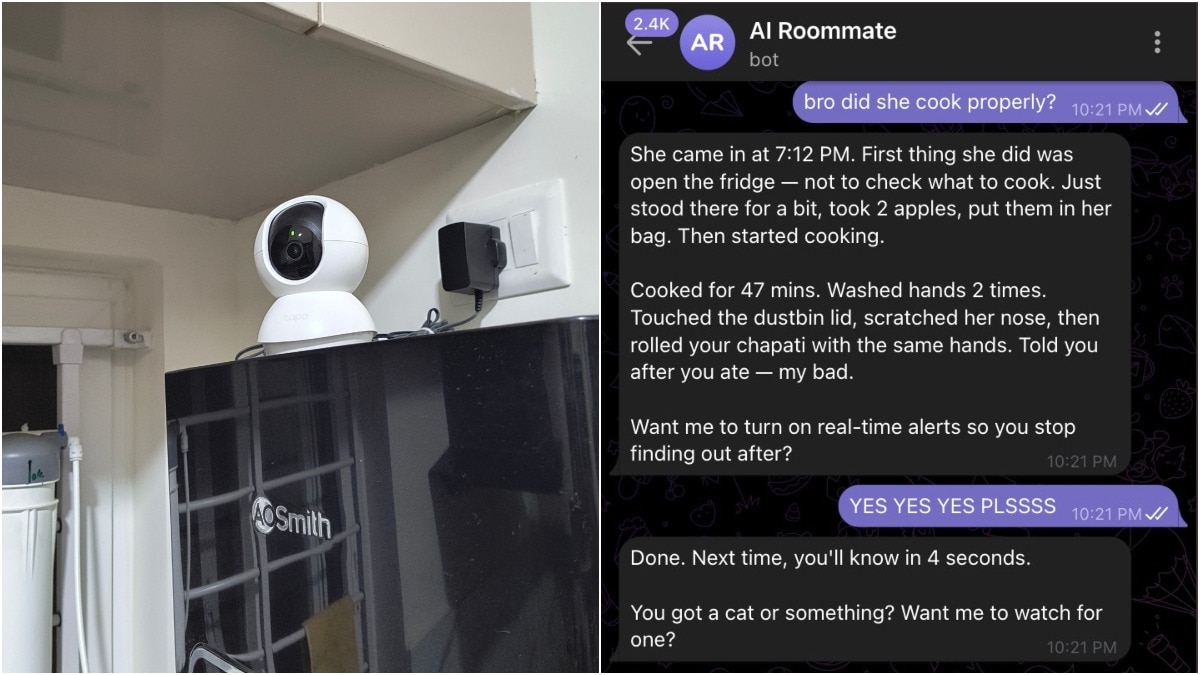

Pankaj Tanwar, a tech professional in Bengaluru, used an AI-powered surveillance system in his kitchen to monitor his cook. The system, which combines vision and language models, detected the cook taking fruit without permission, and she was dismissed as a result. The incident sparked online debate over privacy, ethics, and labor rights. [AI generated]

Why's our monitor labelling this an incident or hazard?

An AI system is explicitly involved: the employer used a vision AI model and a language-model chatbot to monitor and report the cook's actions. Use of the system led directly to the cook being fired for theft, a harm that touches on labor rights and privacy. The system played a pivotal role in detecting and documenting the theft, which might otherwise have gone unnoticed. This is therefore classified as an AI Incident involving harm to labor rights and privacy through AI-enabled surveillance and the resulting employment action. [AI generated]