The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

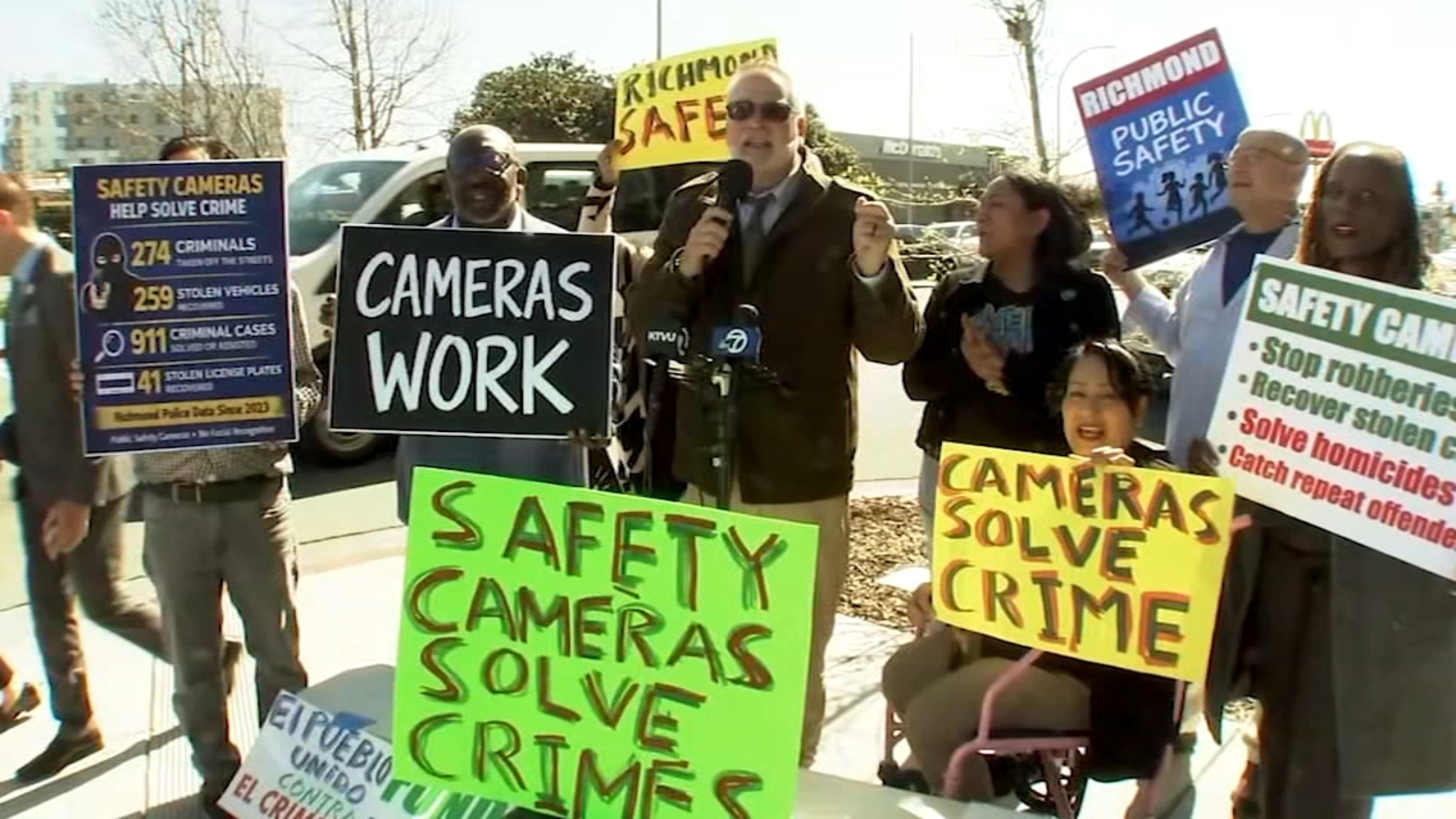

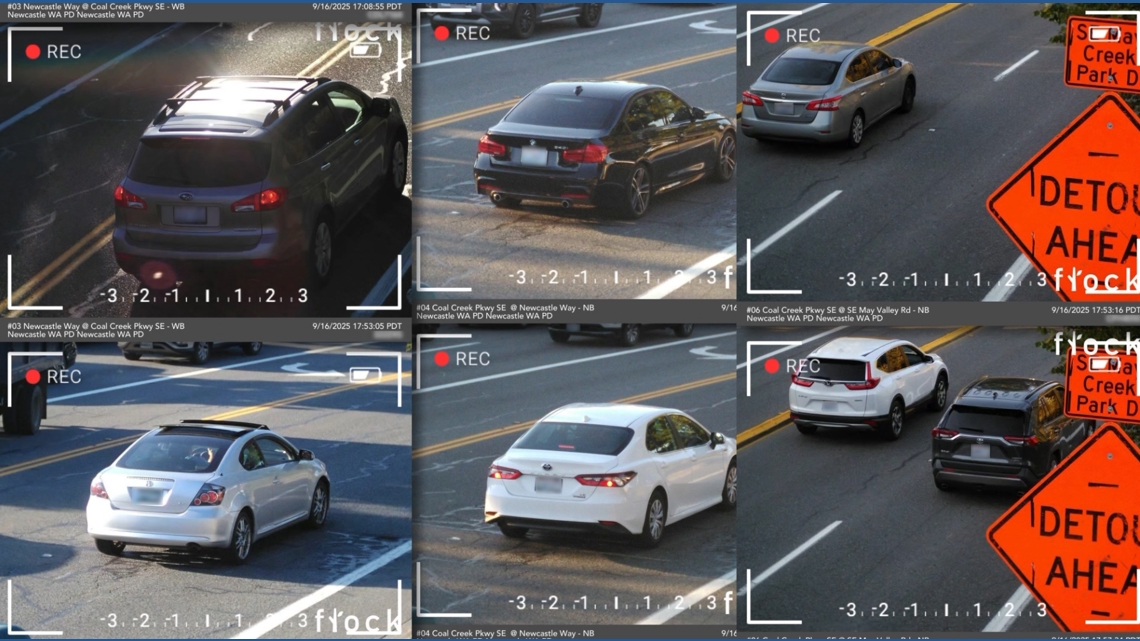

Flock Safety's AI-powered license plate readers, used by law enforcement in California, have come under scrutiny after their data was shared with federal agencies, including ICE and Border Patrol, without proper oversight. The sharing has led to privacy violations, public backlash, and contract terminations by cities and by Amazon's Ring, highlighting the risks of AI surveillance misuse. [AI generated]