The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

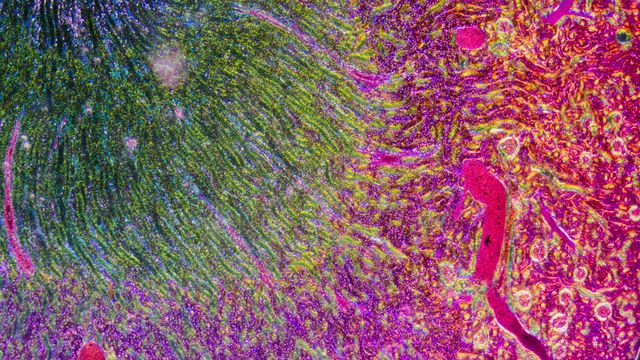

Research from the University of Warwick reveals that many AI systems used in cancer pathology rely on superficial data correlations, or "shortcut learning," rather than genuine biological signals. This raises concerns that such tools may be unreliable and could lead to harm if adopted in clinical settings without further validation. [AI generated]