The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

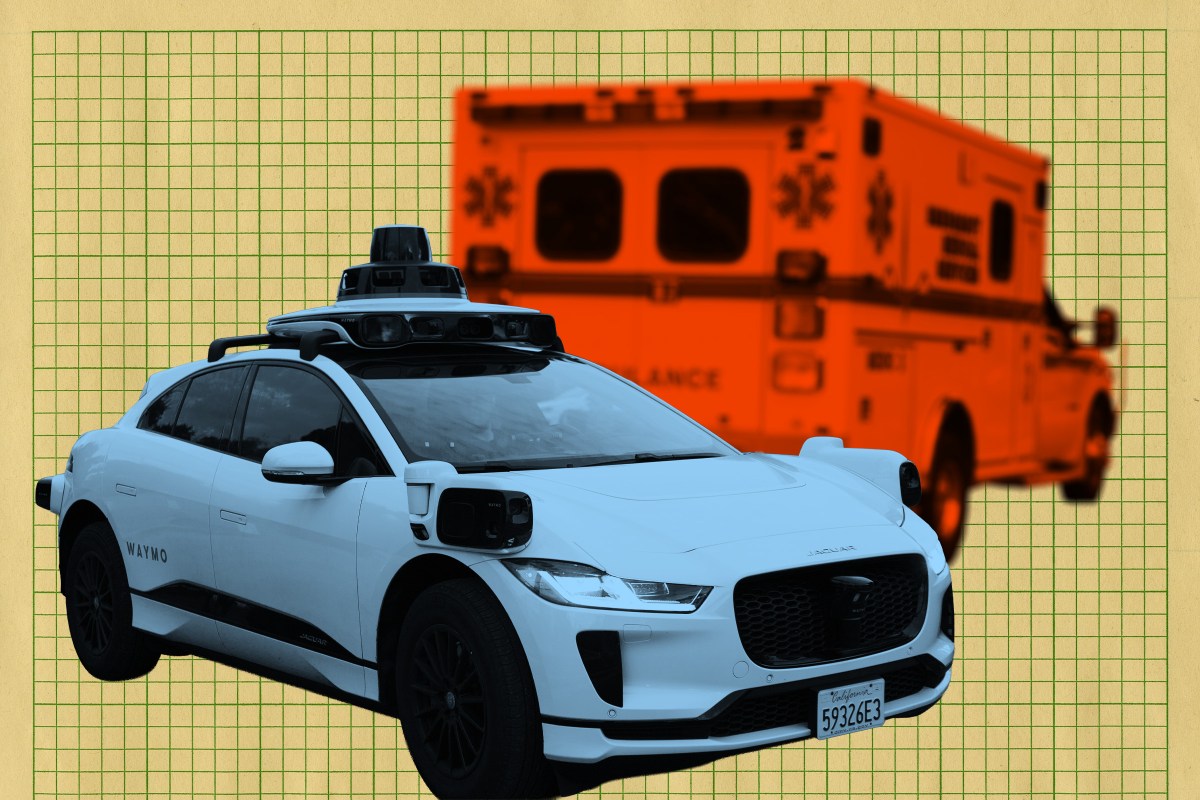

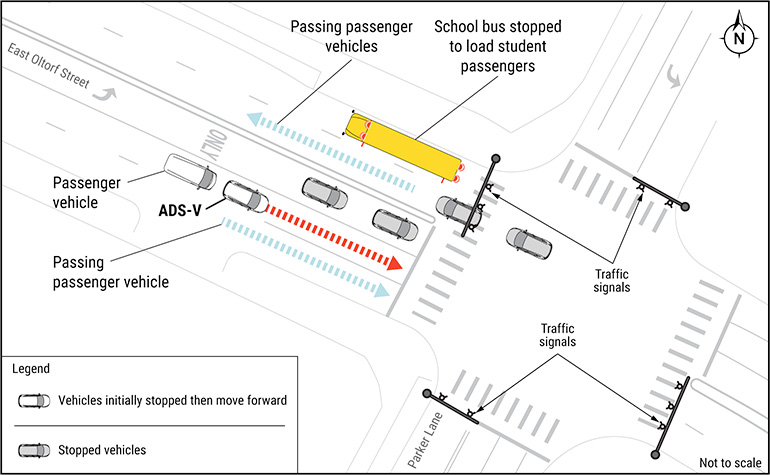

In Austin, Texas, a Waymo self-driving taxi blocked emergency vehicles during a fatal mass shooting, briefly delaying ambulance access. In a separate incident, another Waymo robotaxi was shot at while carrying a passenger, causing vehicle damage but no injuries. Both incidents highlight safety and reliability concerns for autonomous vehicles in critical situations. [AI generated]