The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

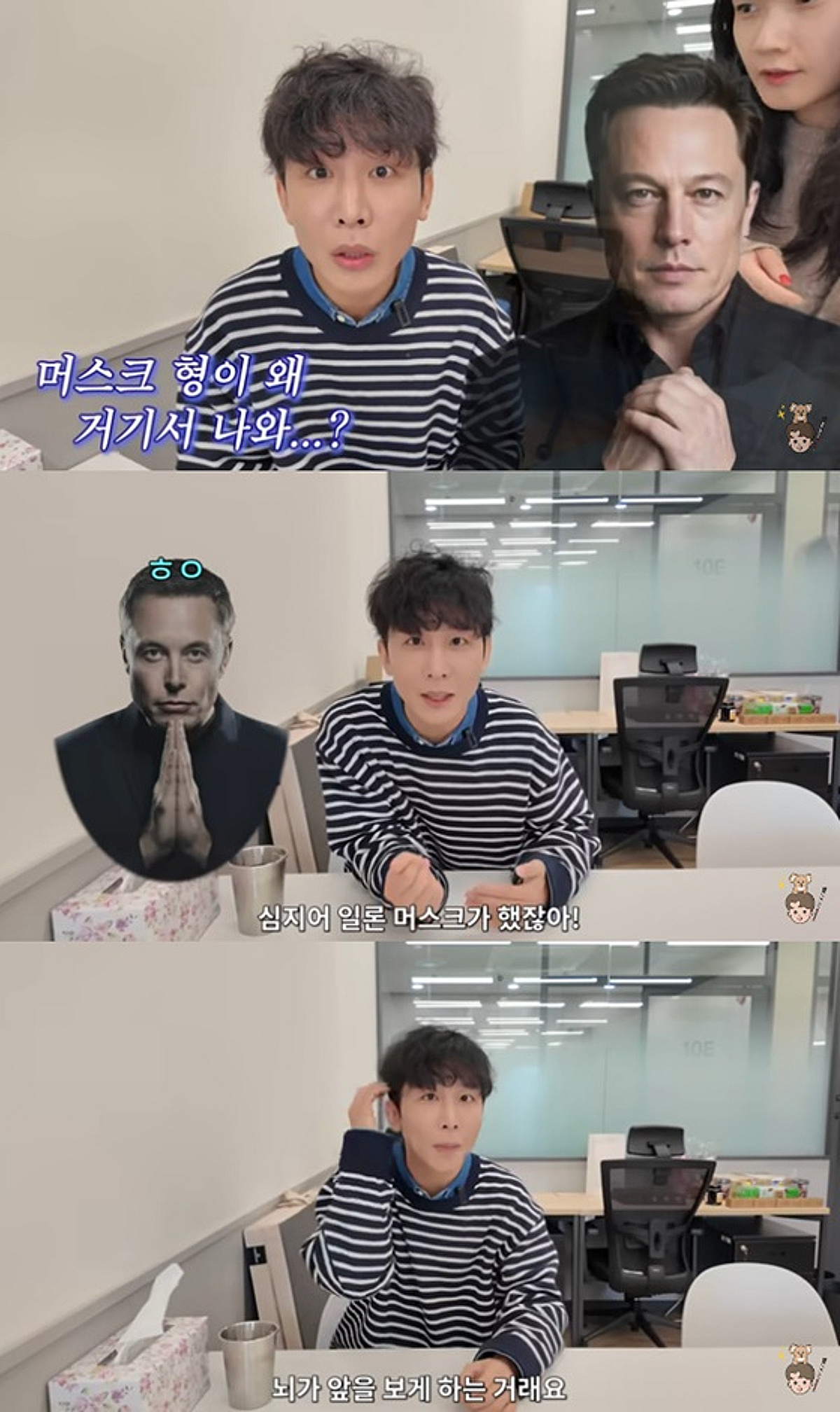

Blind Korean YouTuber 'Oneshot Hansol' has applied to participate in Neuralink's clinical trial for 'Blindsight,' an AI-powered brain implant that aims to restore vision by stimulating the visual cortex. No harm has occurred, but concerns have been raised about privacy, hacking, and social inequality in the technology's future use.[AI generated]