The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

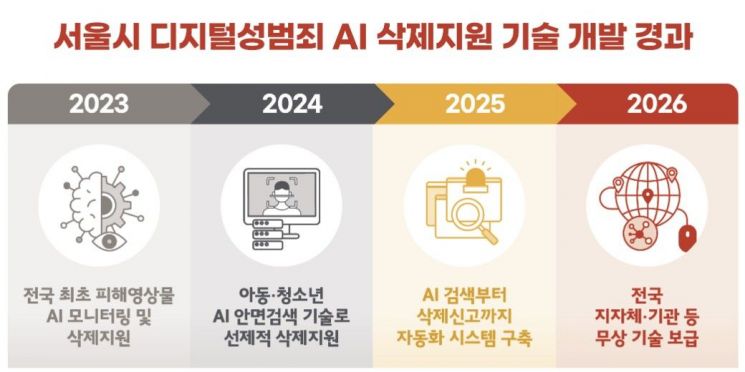

Seoul City developed an AI system that detects and deletes illegal digital sexual exploitation content online, cutting removal time from 3 hours to 6 minutes and improving accuracy. The technology, credited with a significant increase in the amount of harmful content deleted, is now being distributed free of charge to institutions across South Korea to better protect victims. [AI generated]