The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

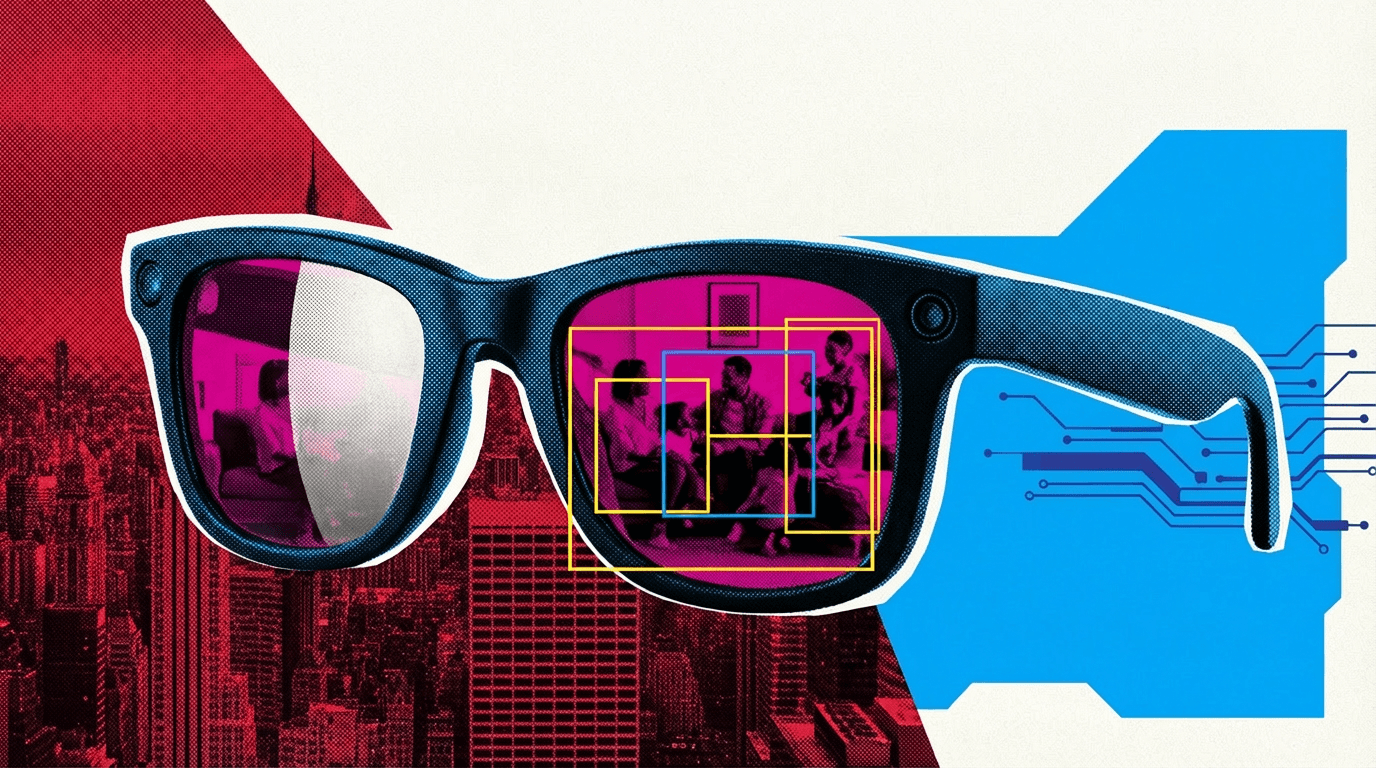

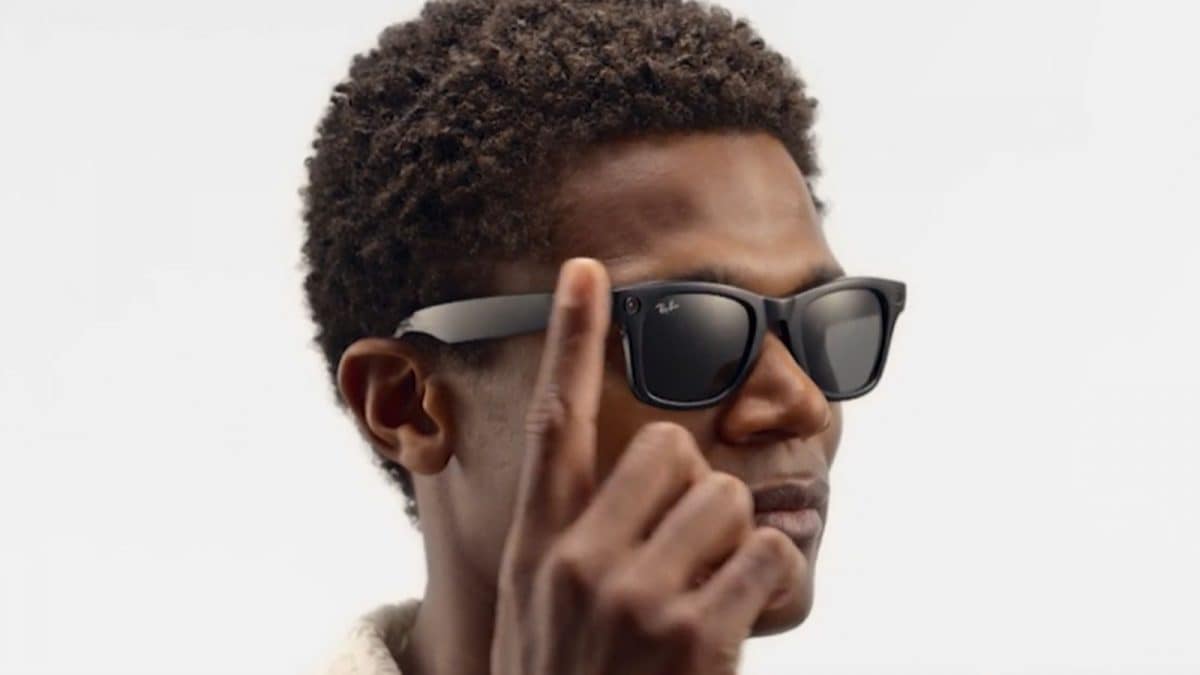

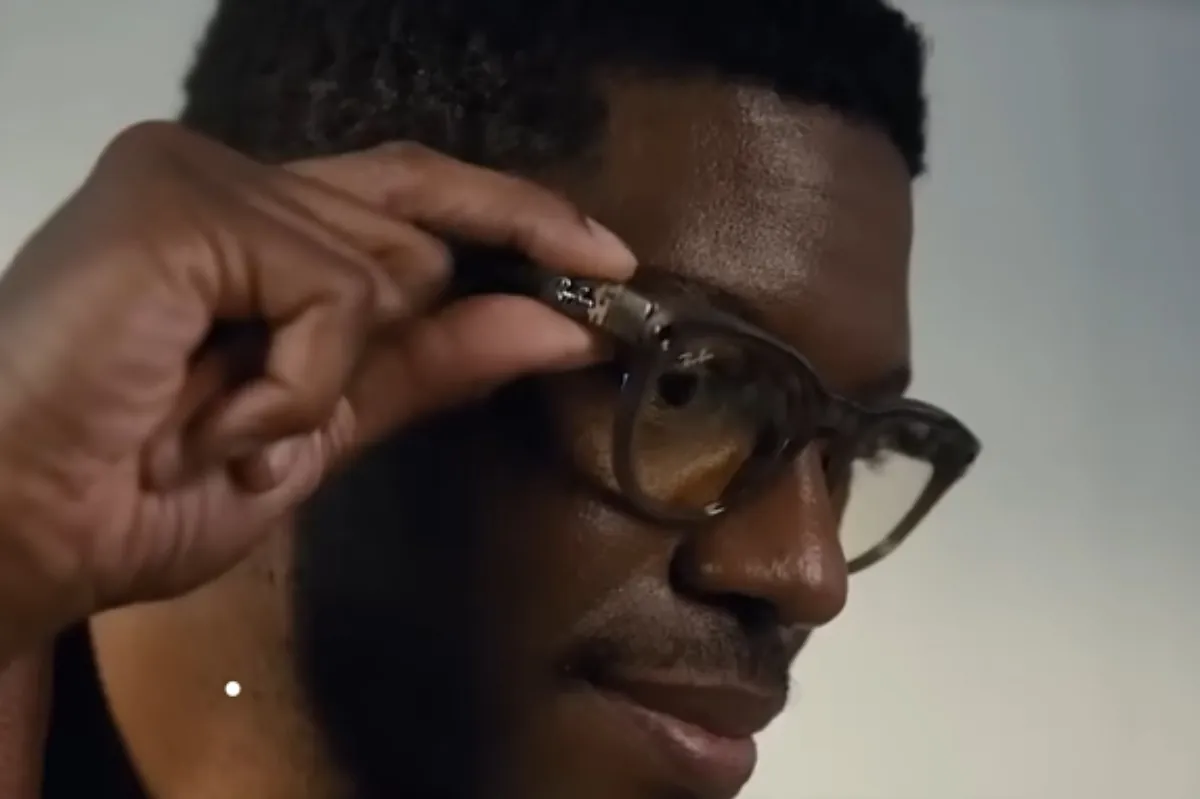

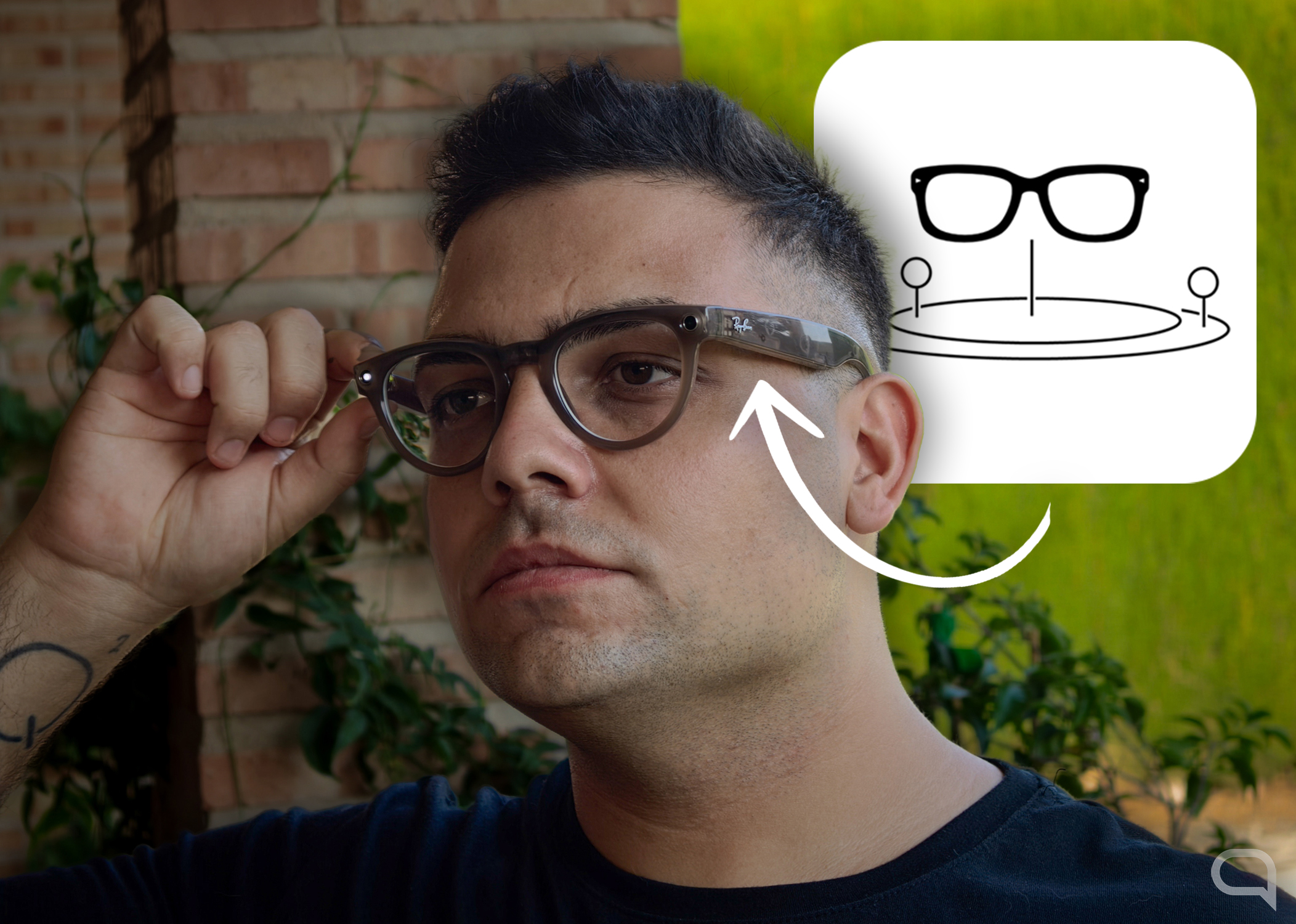

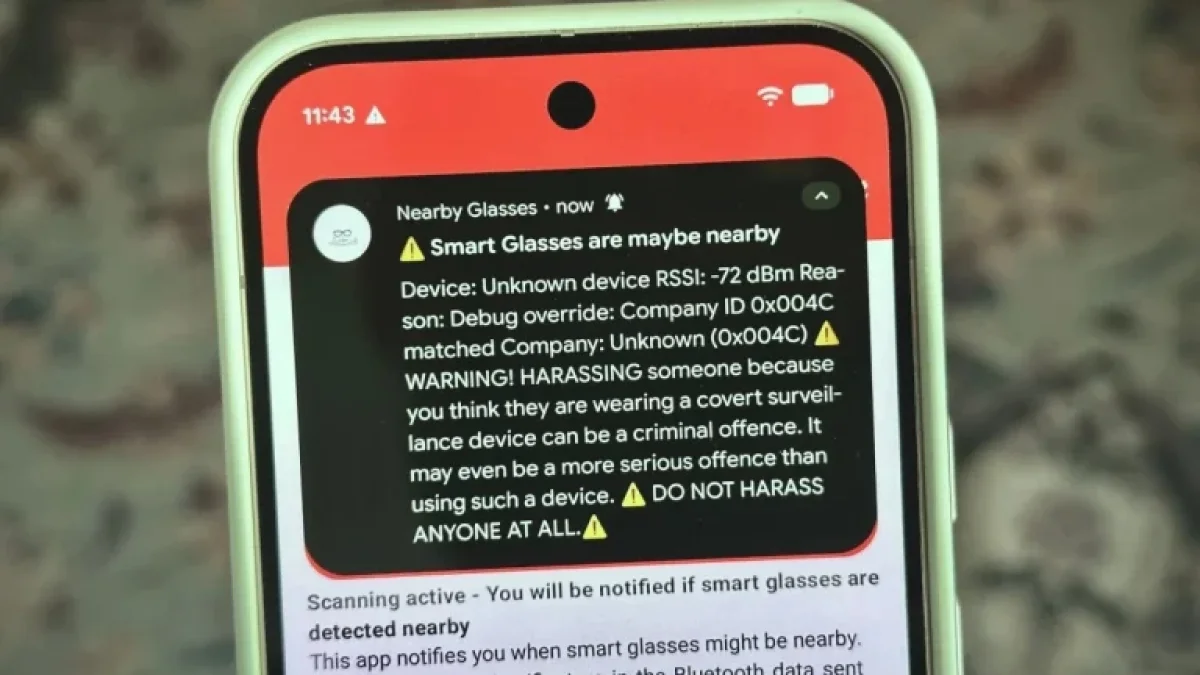

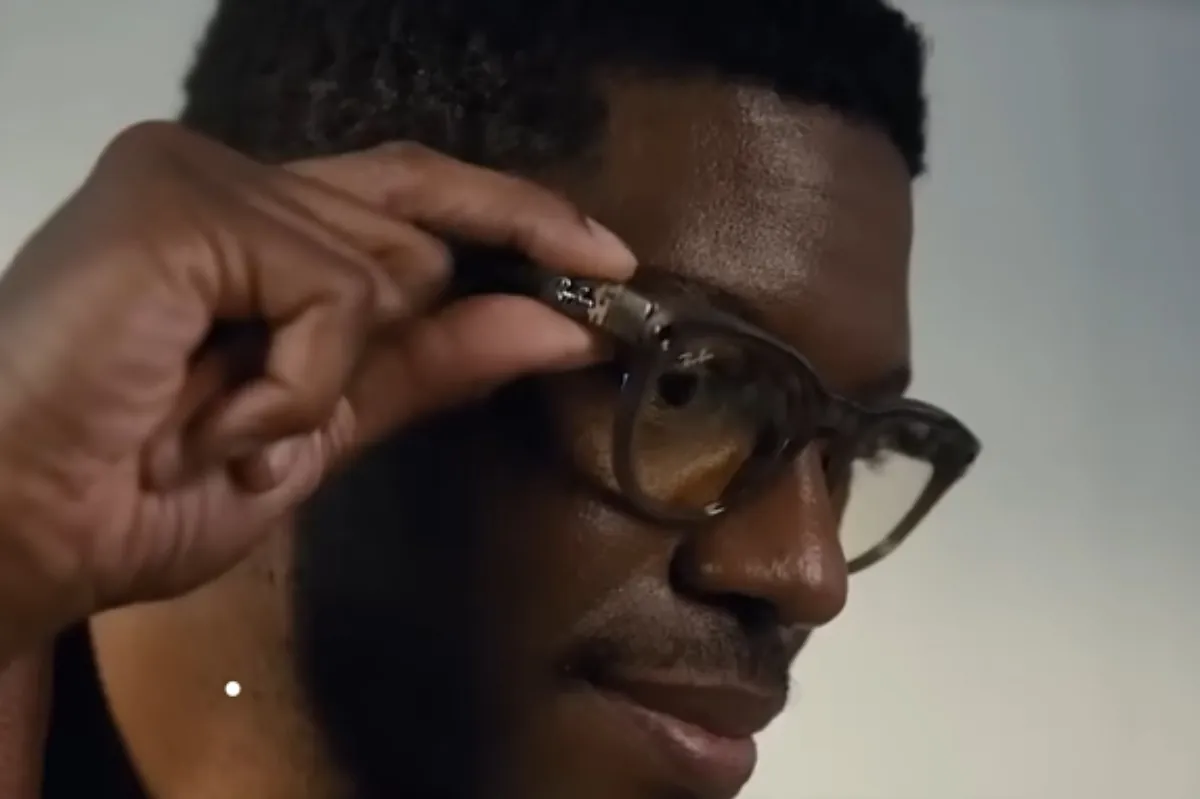

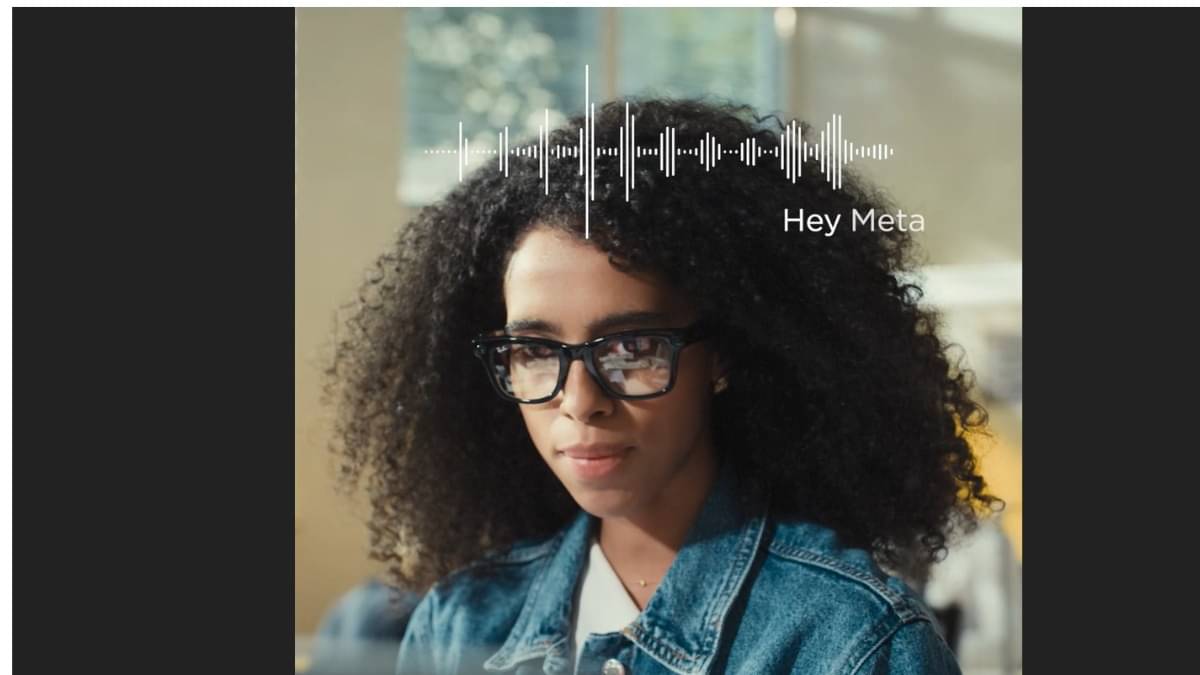

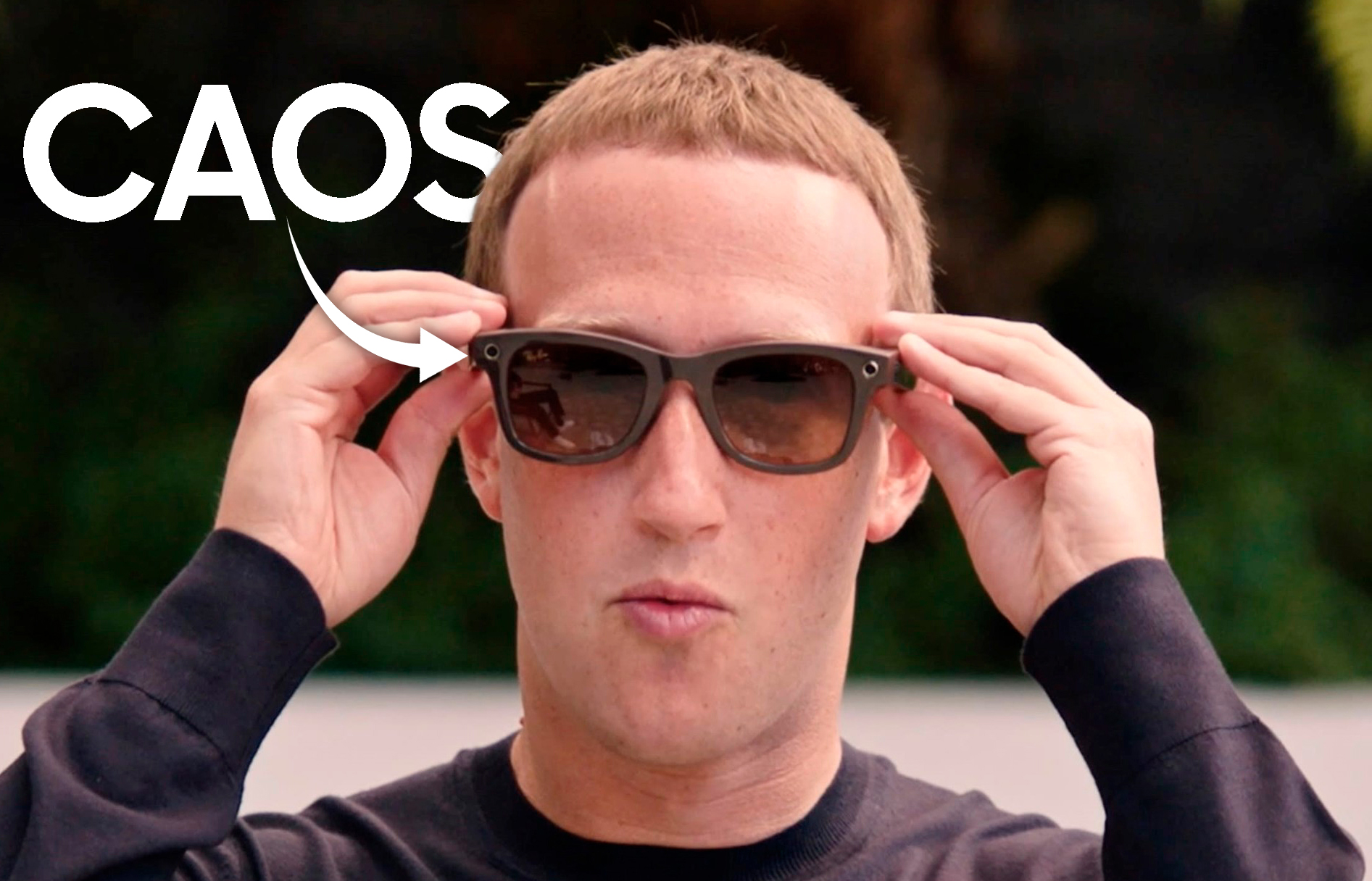

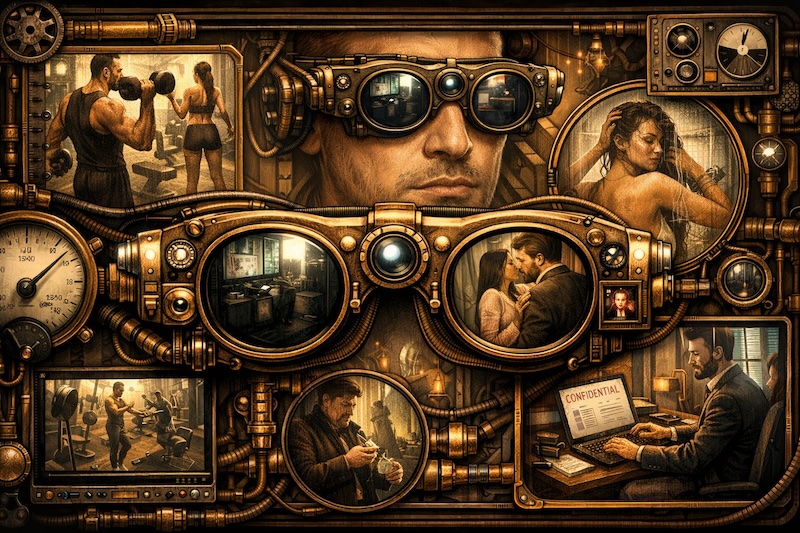

Meta's AI-powered Ray-Ban smart glasses record sensitive user data, including intimate and financial information, which is reviewed by human annotators in Kenya to train AI models. Users in Europe are often unaware their private footage is sent abroad, raising serious concerns about privacy and possible GDPR violations. [AI generated]
