The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

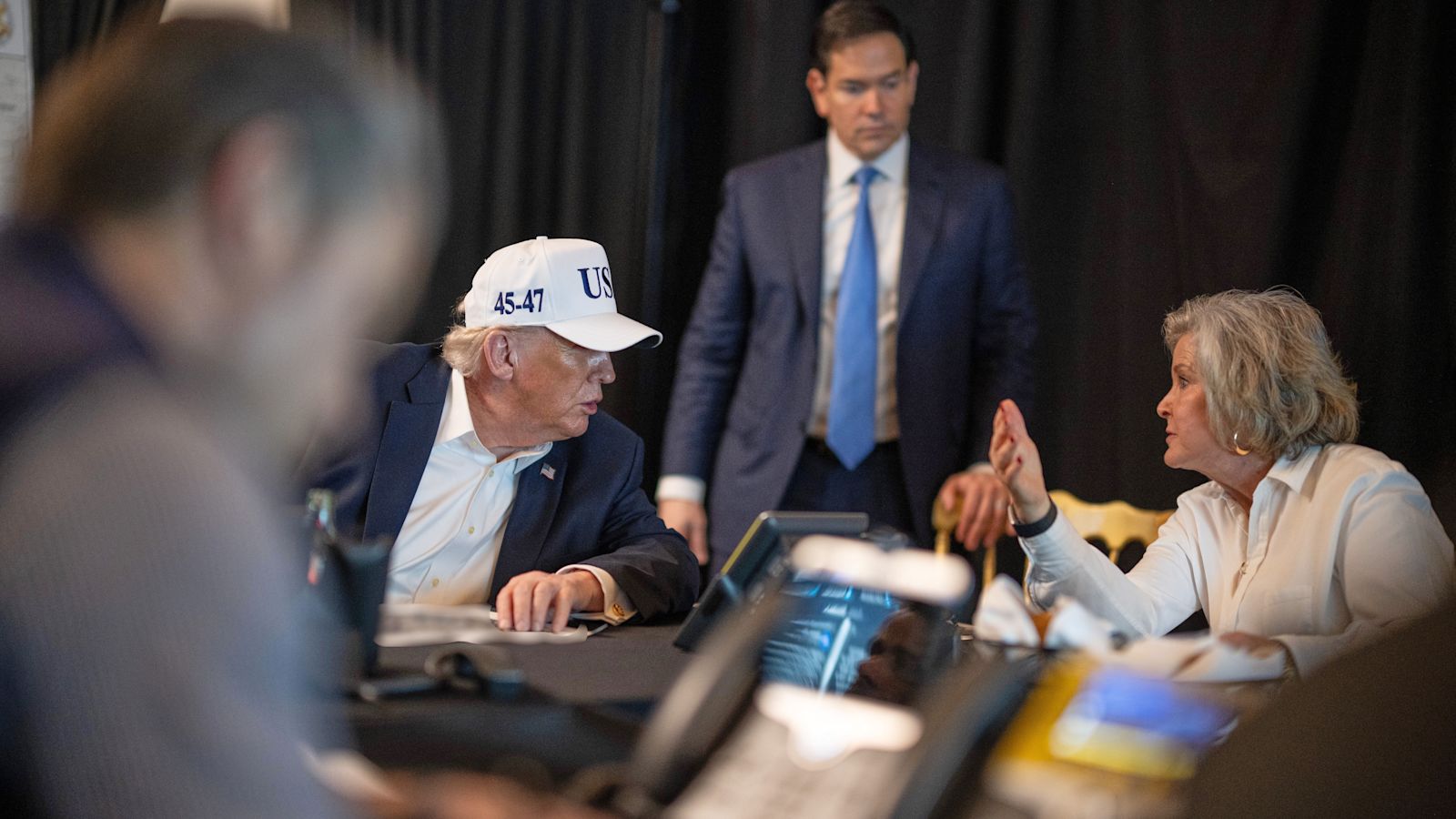

U.S. and Israeli forces used Anthropic's AI model Claude to automate and accelerate airstrike planning and execution during attacks on Iran, resulting in around 900 strikes and the death of Iran's Supreme Leader. Experts warn that this AI-driven process reduces human oversight, raising ethical and legal concerns over civilian harm. [AI generated]
