The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

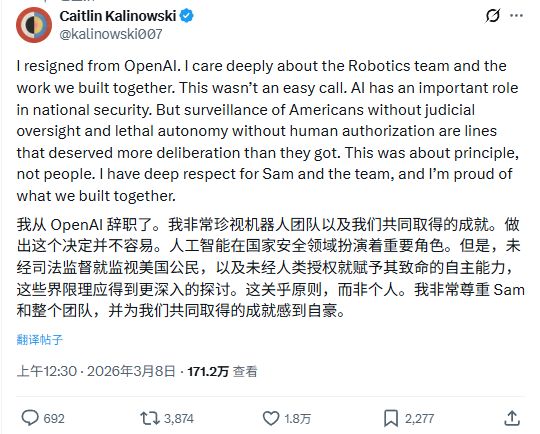

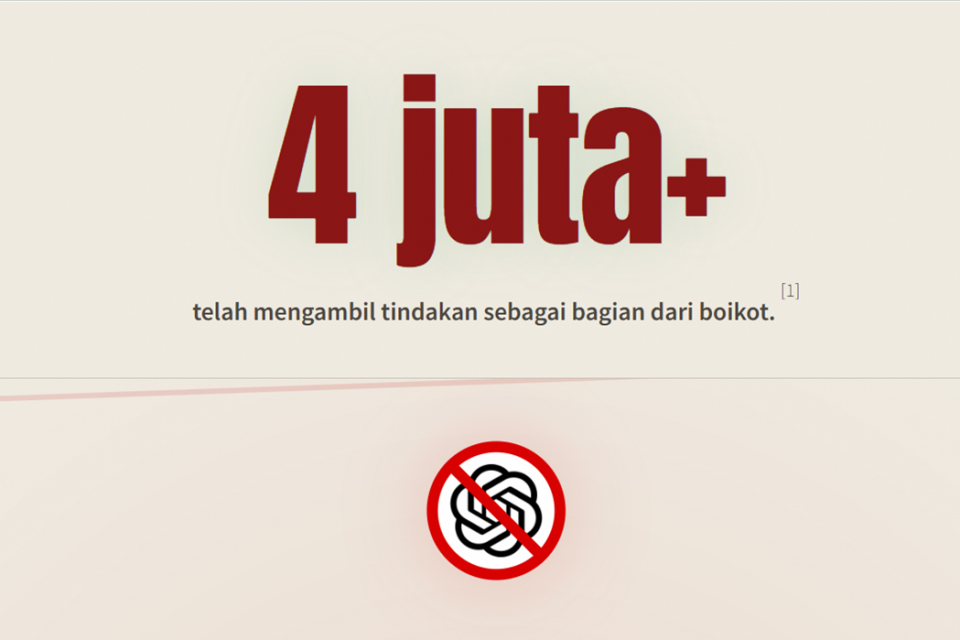

OpenAI's decision to supply AI systems for Pentagon military operations has sparked internal dissent, leading to the resignation of its robotics head, Caitlin Kalinowski. The move follows a similar controversy involving Anthropic and raises ethical concerns about AI misuse, surveillance, and autonomous weapons, though no direct harm has occurred yet. [AI generated]
