The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

AI systems, including Anthropic's Claude, have been actively used by the US and Israel in military operations against Iran and in Gaza, assisting in target identification and decision-making that led to lethal outcomes. Experts warn of the dangers and of the lack of oversight as AI increases the lethality of modern warfare.[AI generated]

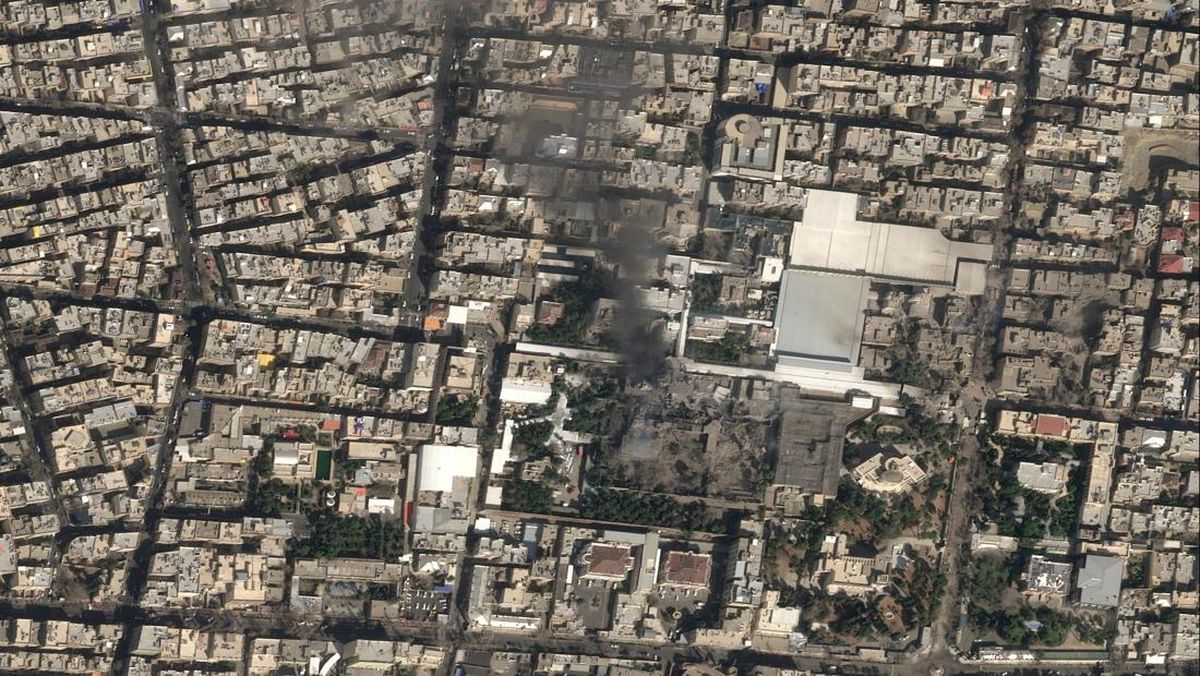