The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

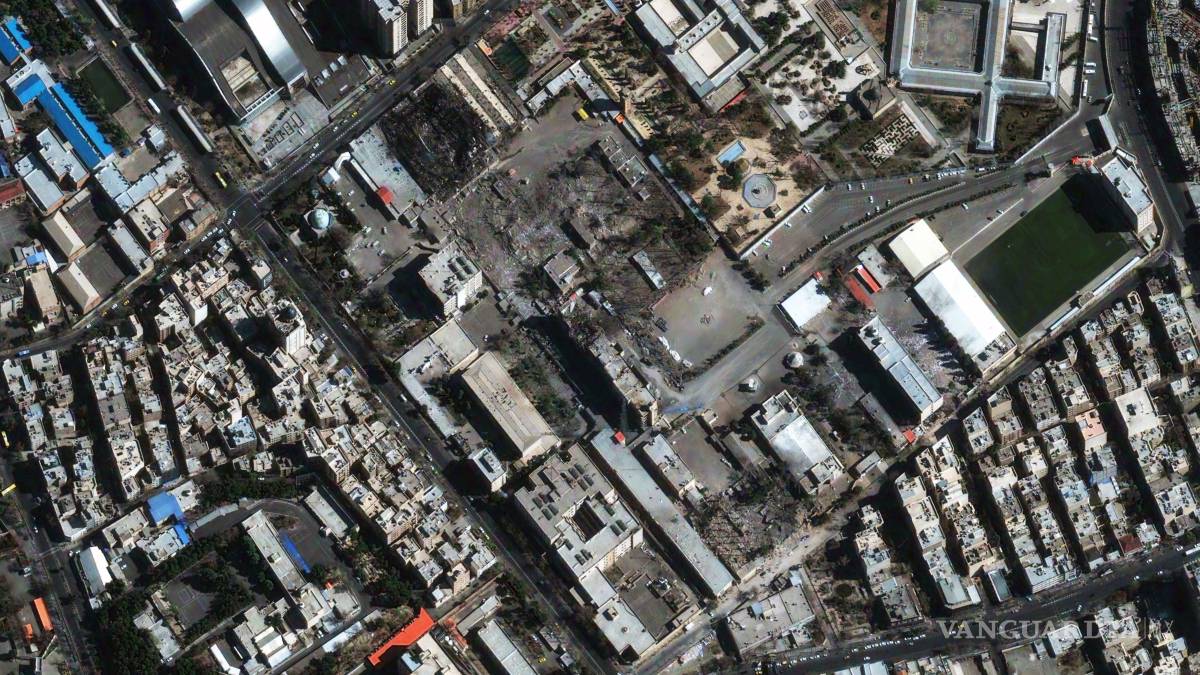

The United States and Israel used advanced AI systems, including Project Maven, to rapidly identify and attack over a thousand targets in Iran, resulting in civilian casualties and the death of Iran's supreme leader. Reports highlight that algorithmic errors in AI-driven targeting accelerated attacks and contributed to wrongful strikes on civilian sites. [AI generated]