The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

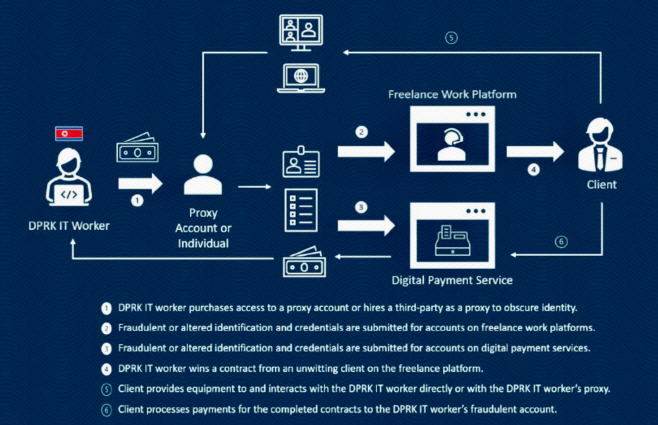

North Korean threat groups are leveraging AI tools to create fake identities, alter documents, and disguise voices, enabling operatives to secure remote IT jobs at Western companies. This AI-driven scheme facilitates unauthorized access, data theft, and financial harm, with wages funneled back to North Korea. [AI generated]