The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

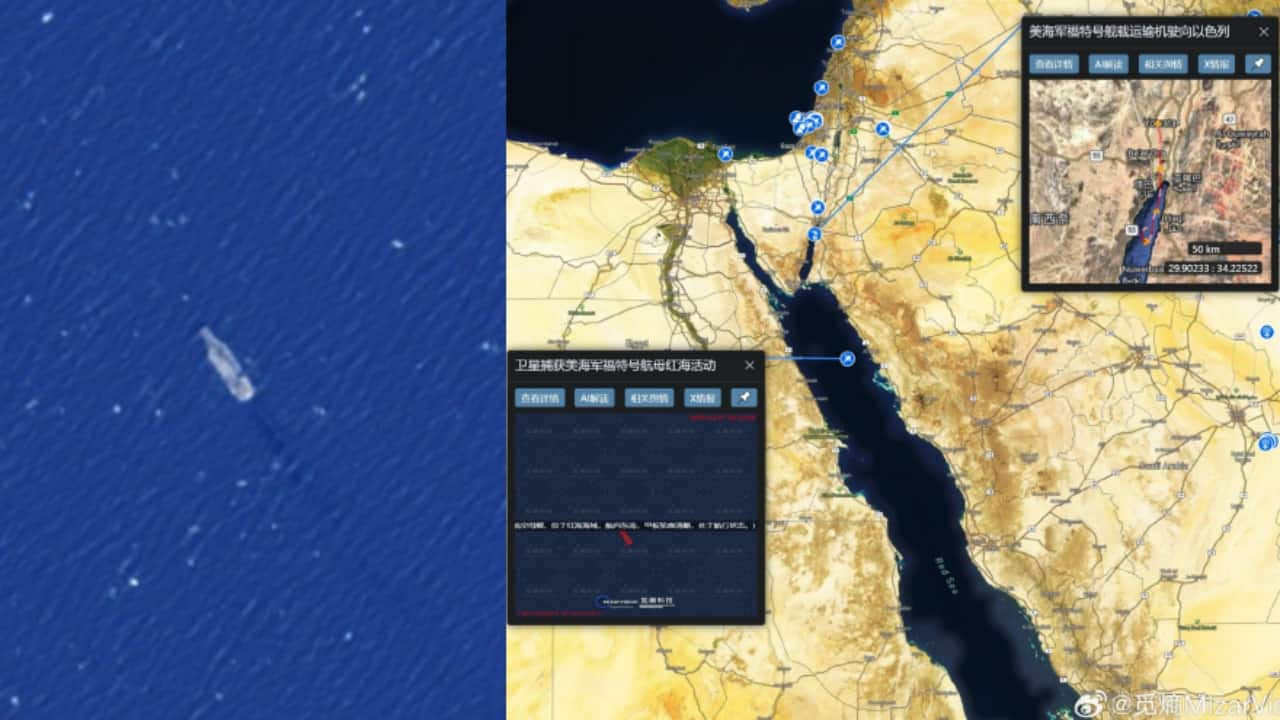

Chinese AI startup MizarVision used AI to analyze and publicly share near-real-time satellite imagery of US military assets across the Middle East. The AI-annotated intelligence was widely disseminated online and was reportedly followed by attacks on the identified bases, raising concerns about AI-enabled exposure of sensitive military operations and indirect facilitation of harm. [AI generated]