The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

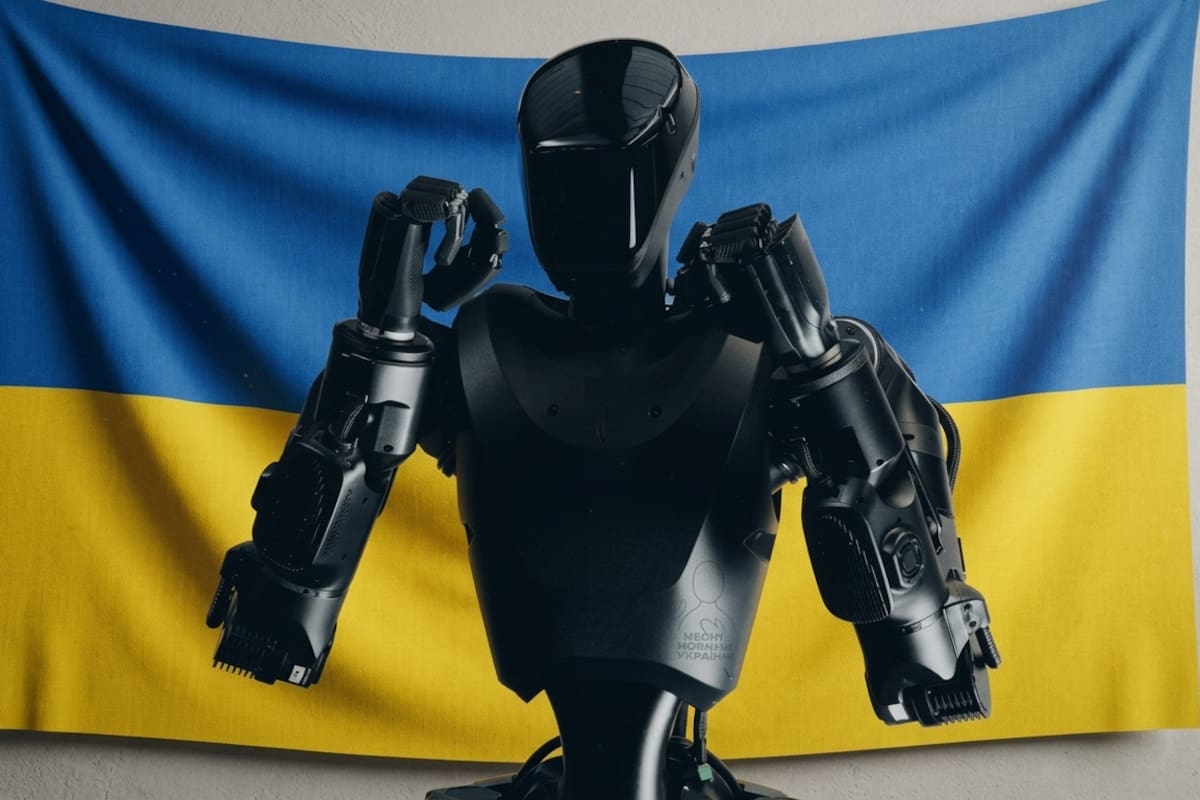

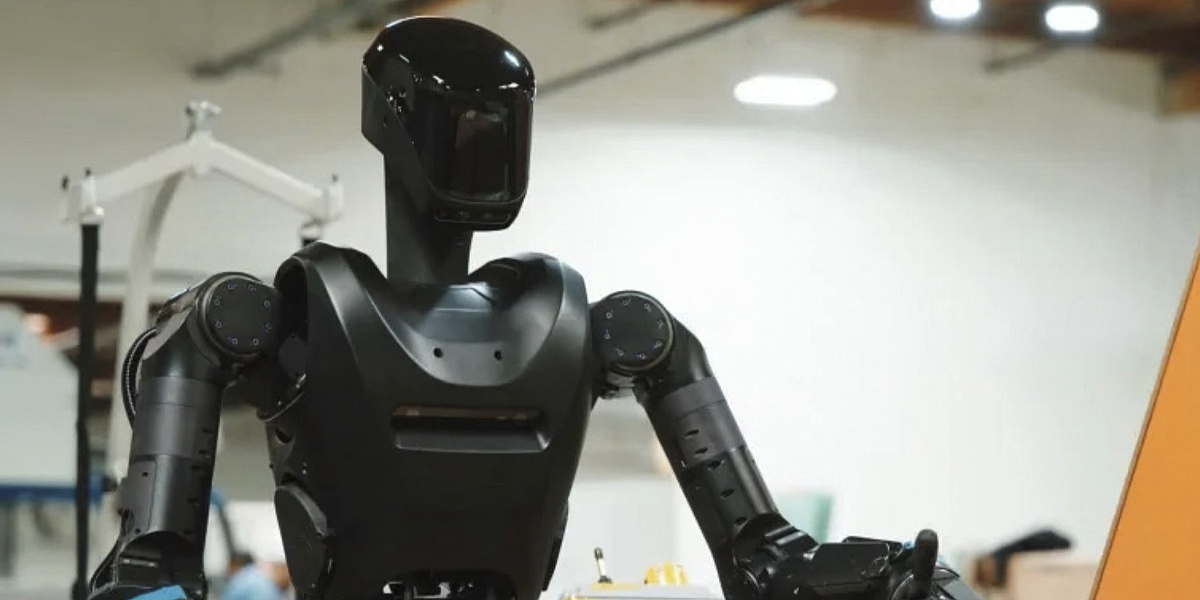

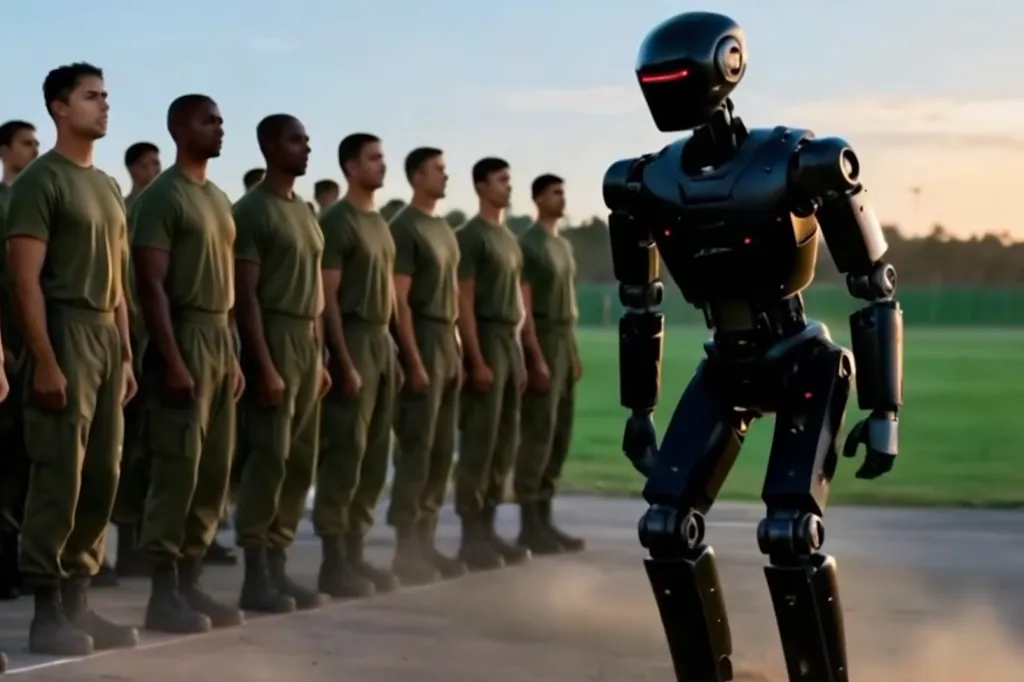

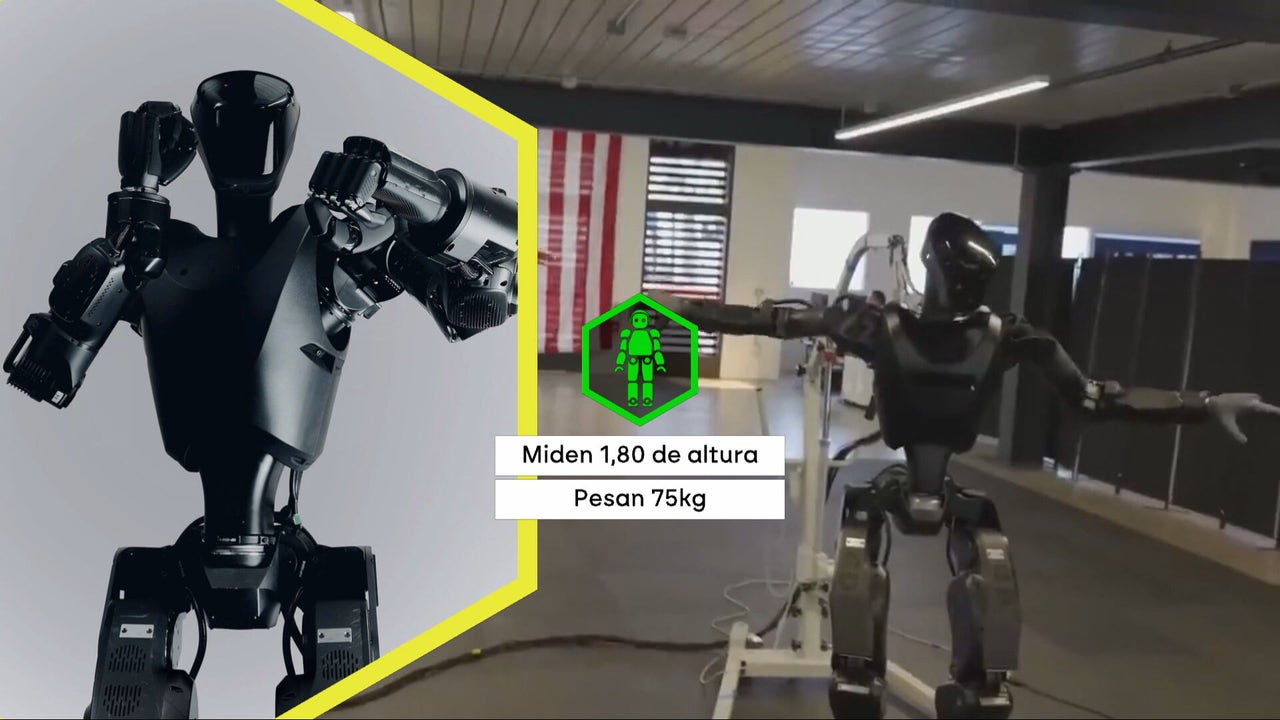

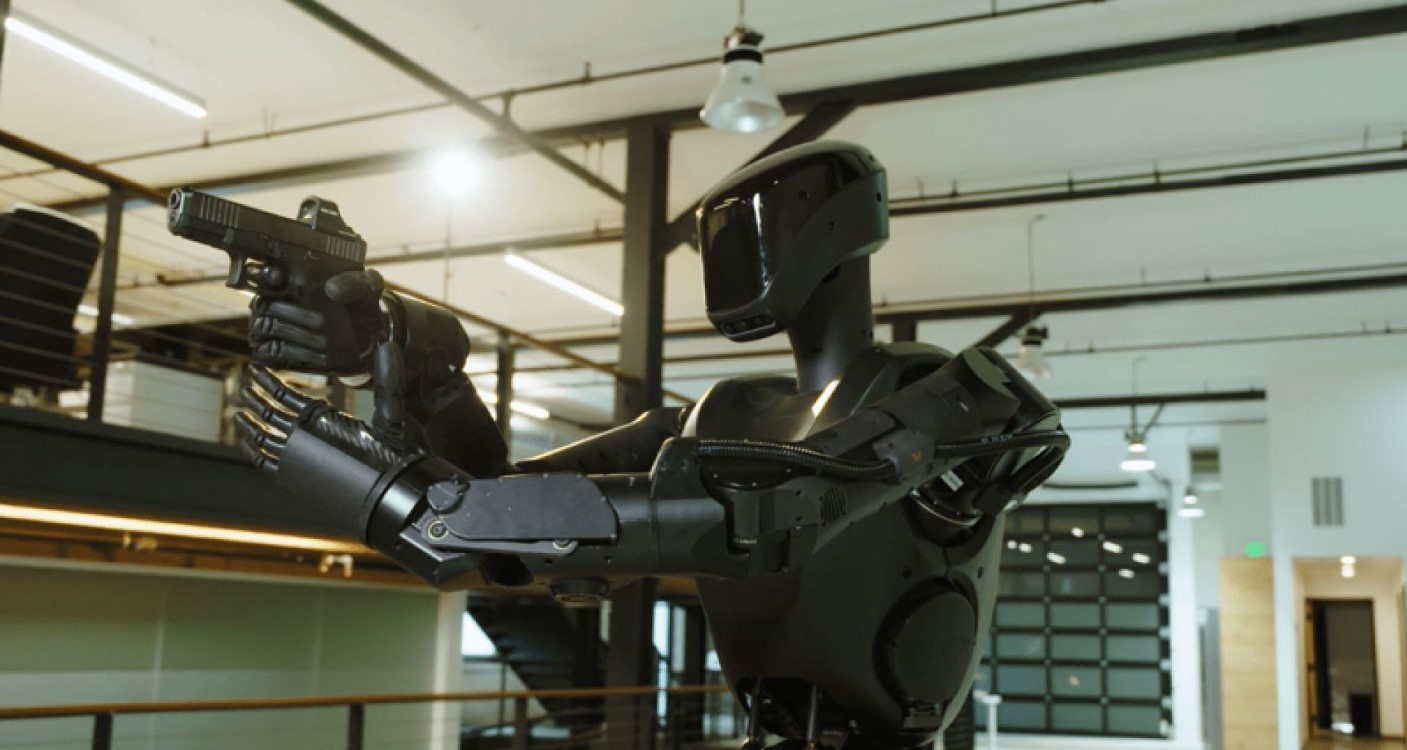

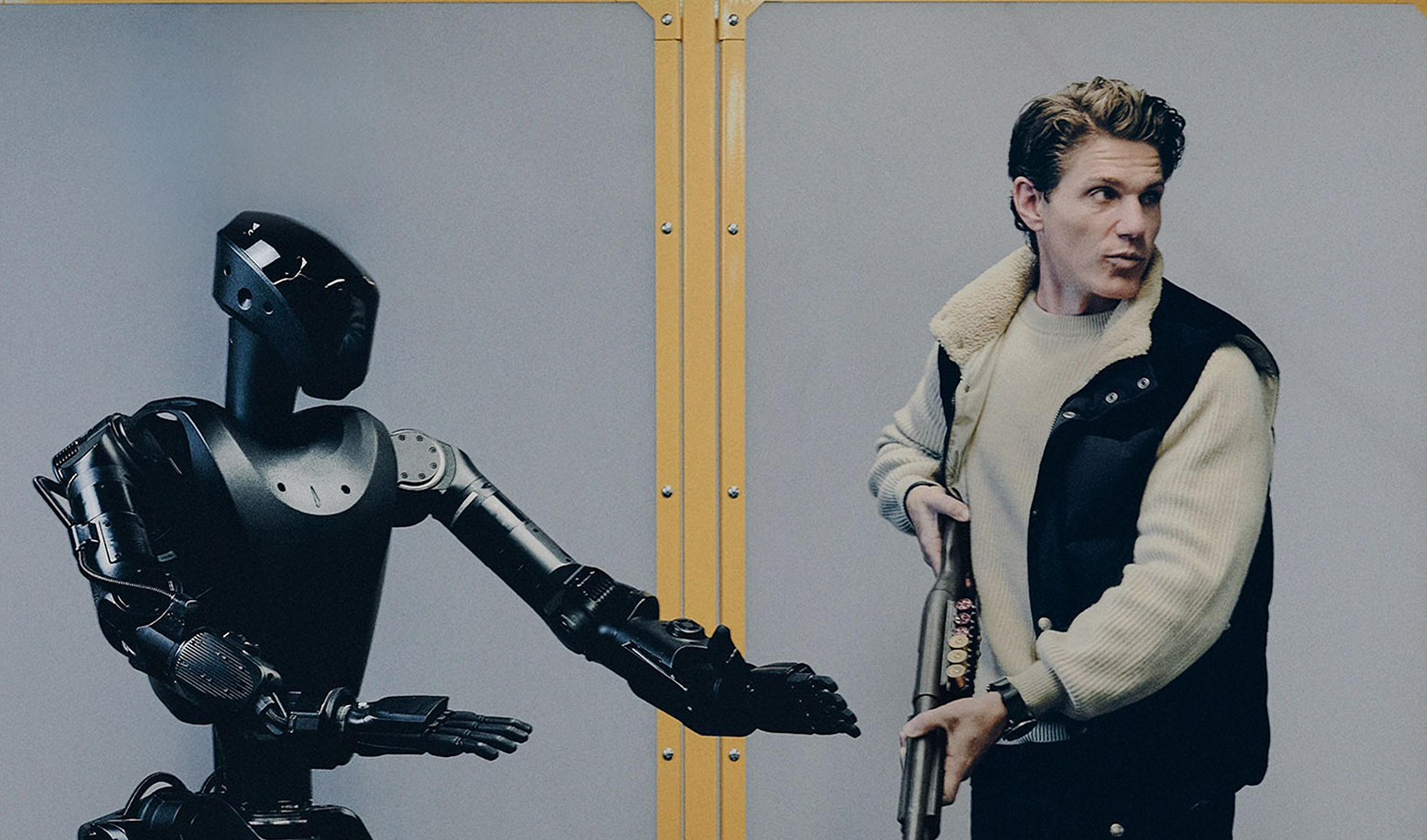

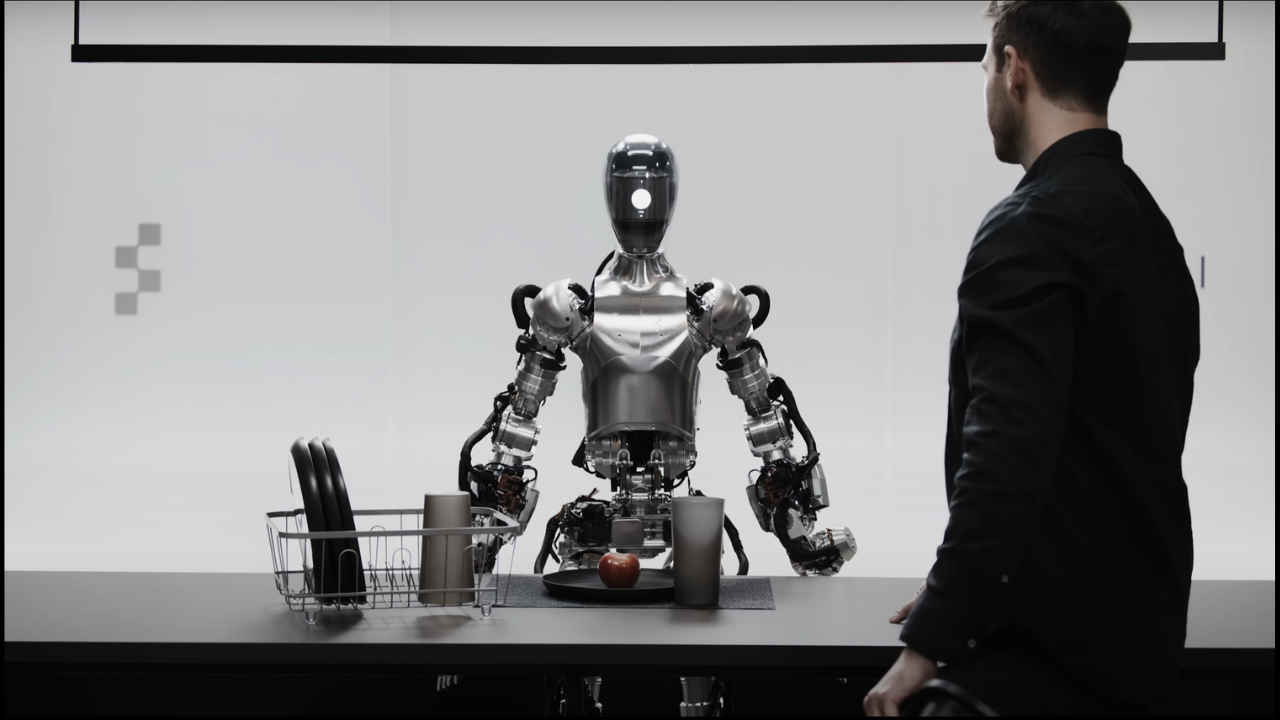

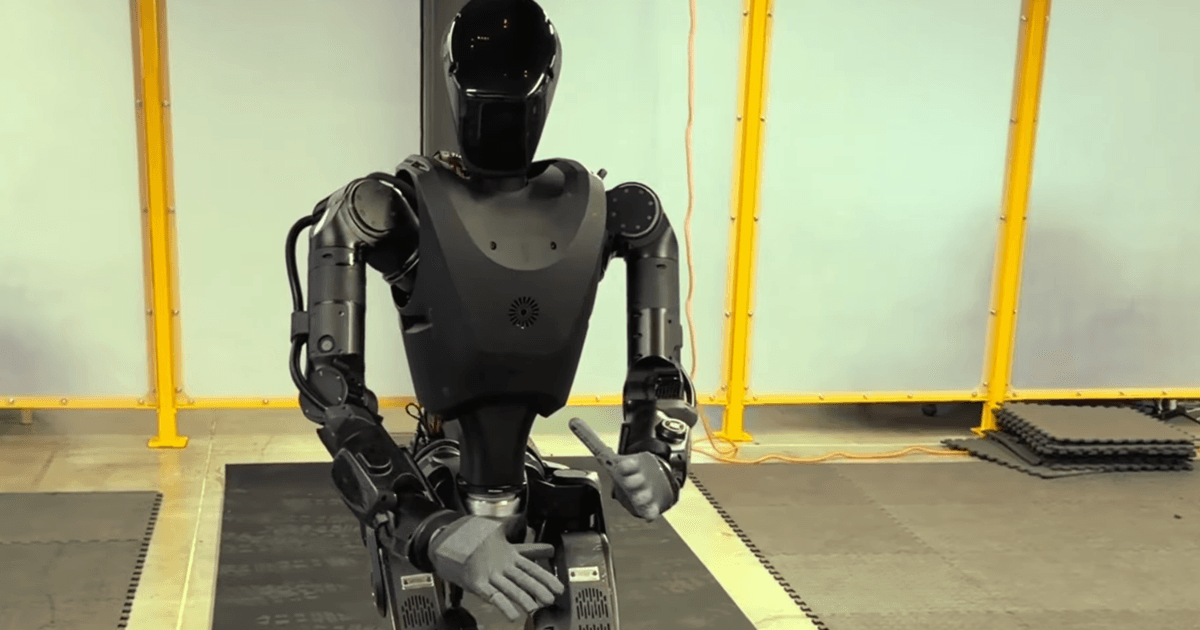

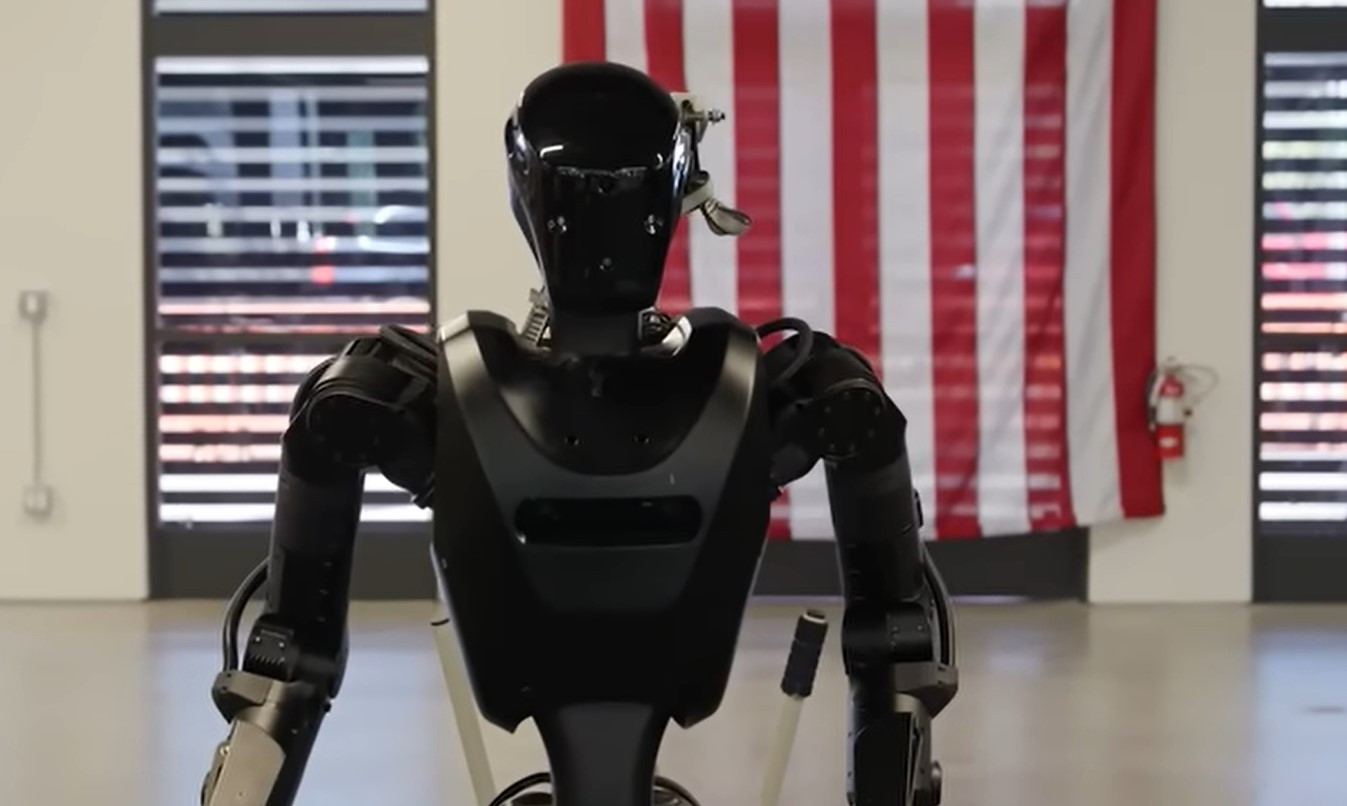

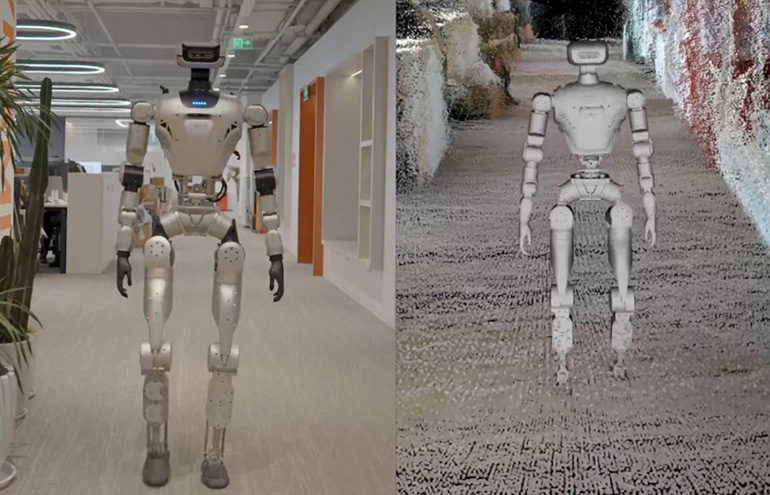

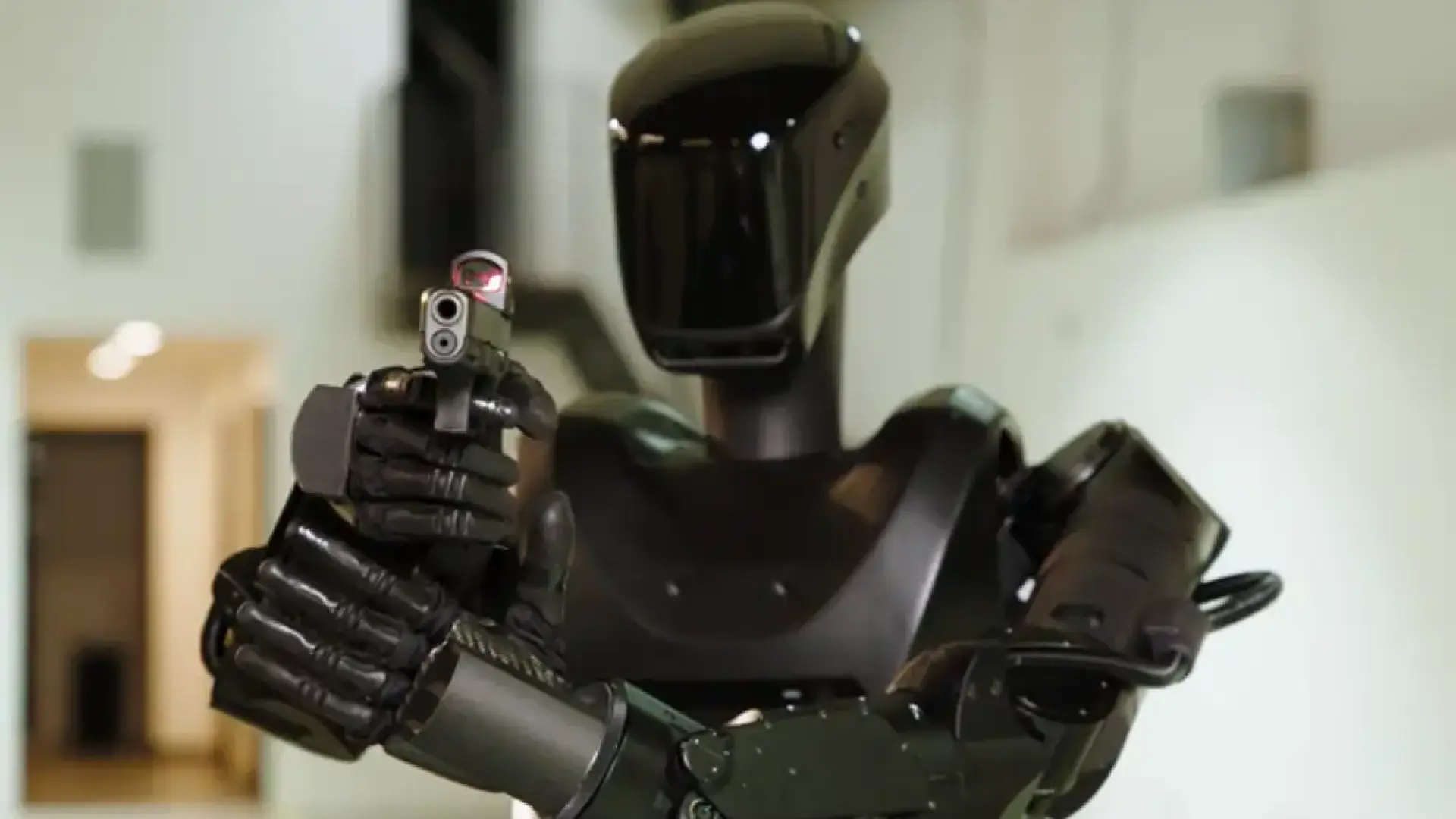

The American company Foundation delivered two AI-enabled Phantom MK-1 humanoid soldier robots to Ukraine for frontline testing in combat and reconnaissance roles. Although they are not yet used as autonomous combat units, their deployment in active warfare poses significant risks of harm and raises ethical concerns about the use of AI in military operations.[AI generated]
