The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

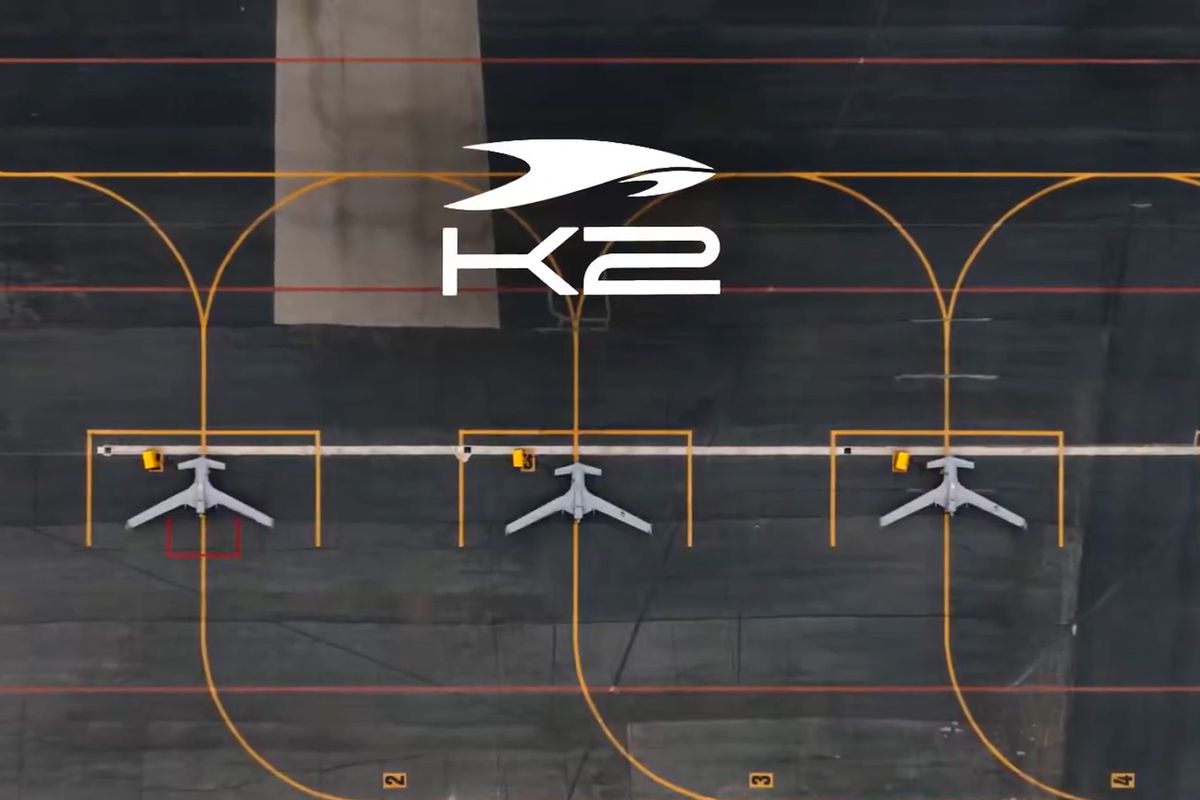

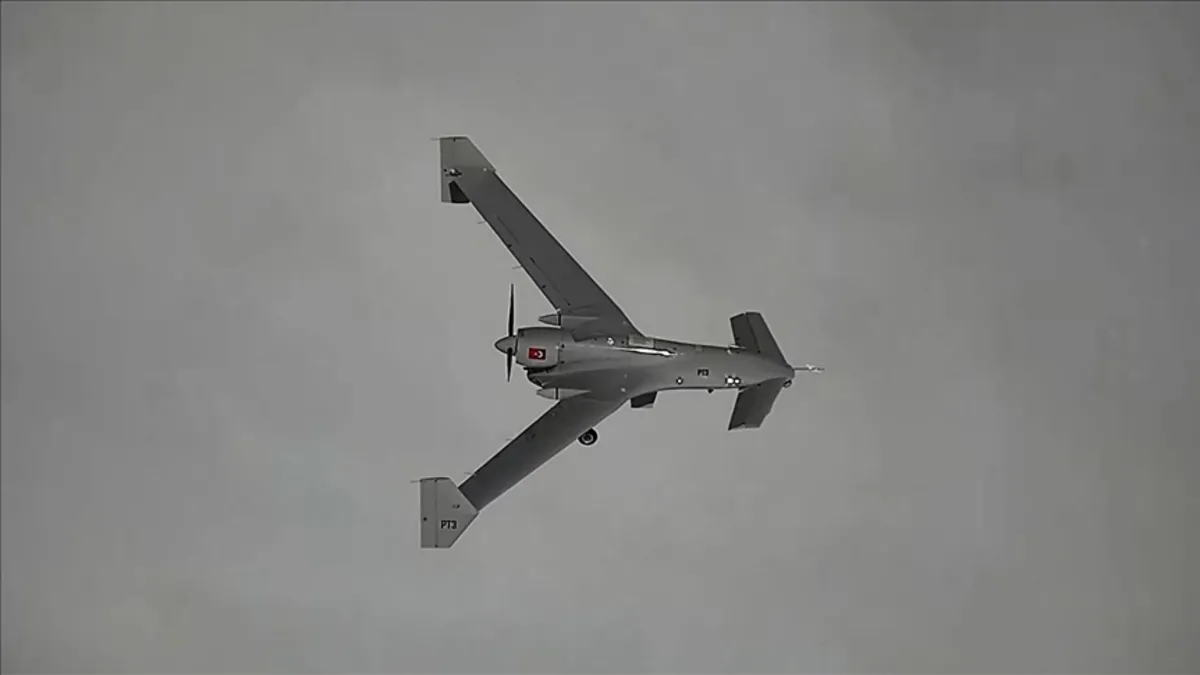

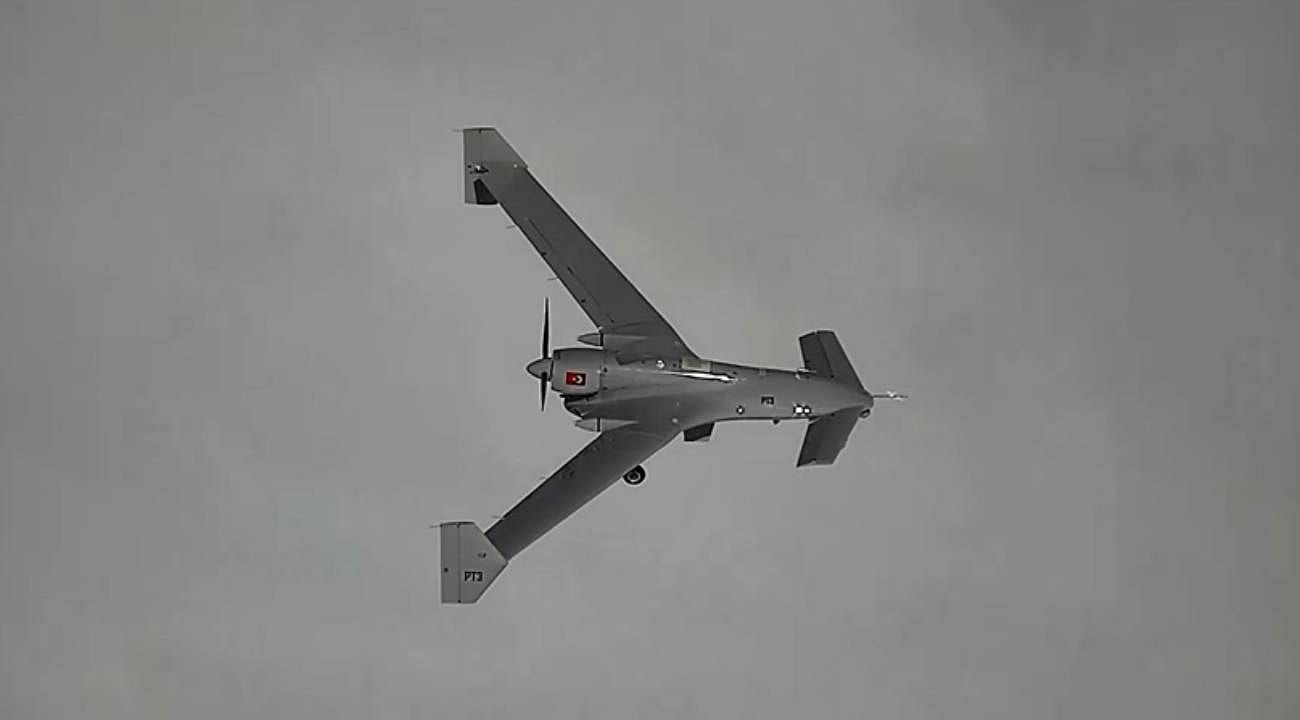

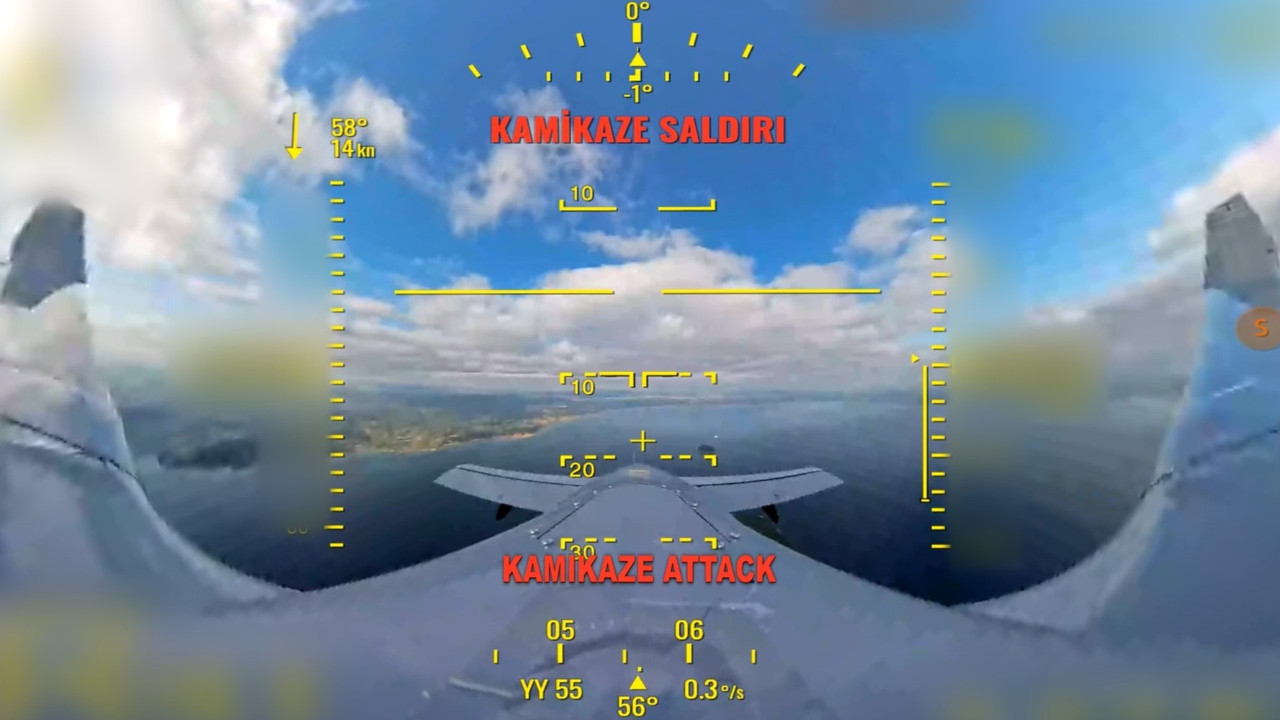

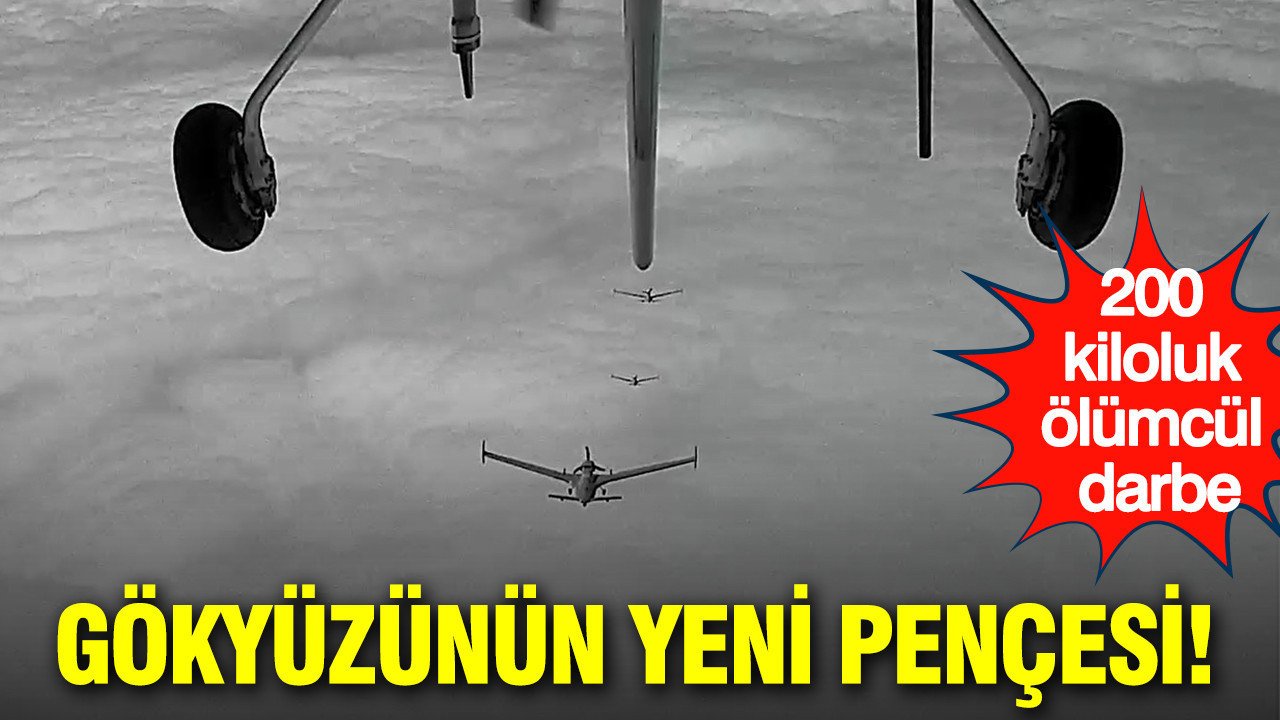

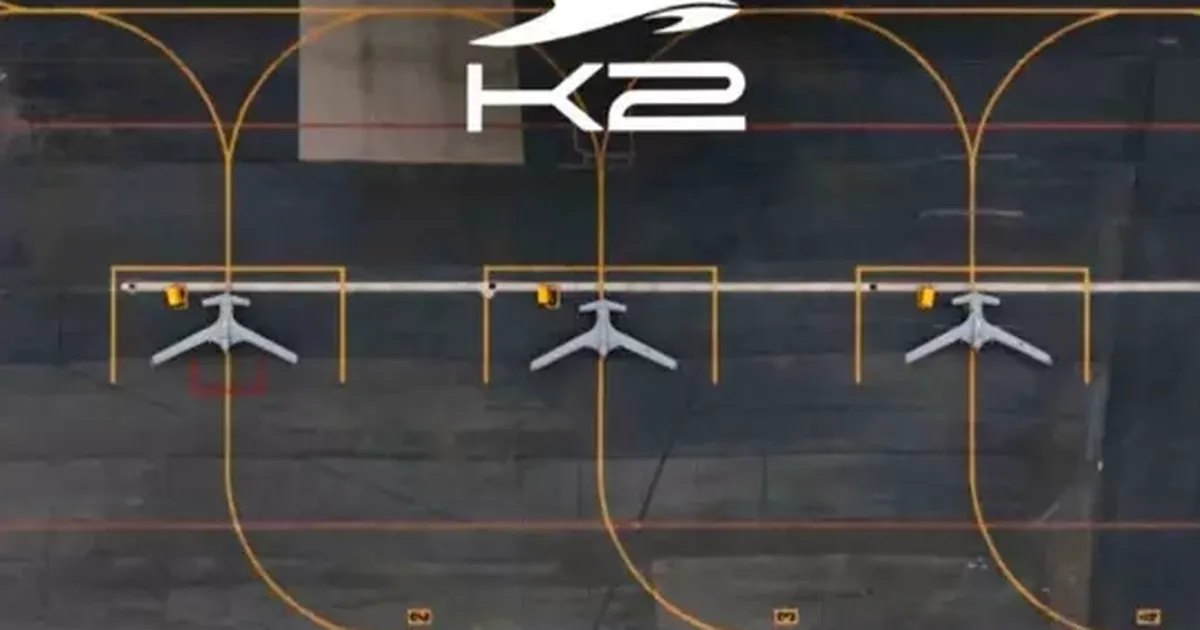

Turkish defense company Baykar has unveiled the K2 Kamikaze UAV, an autonomous drone equipped with advanced AI and swarm algorithms for coordinated military operations. Successfully tested in formation flights, the K2 can carry heavy payloads and operate over long distances, raising concerns about future risks from AI-enabled lethal autonomous weapons. [AI generated]