The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

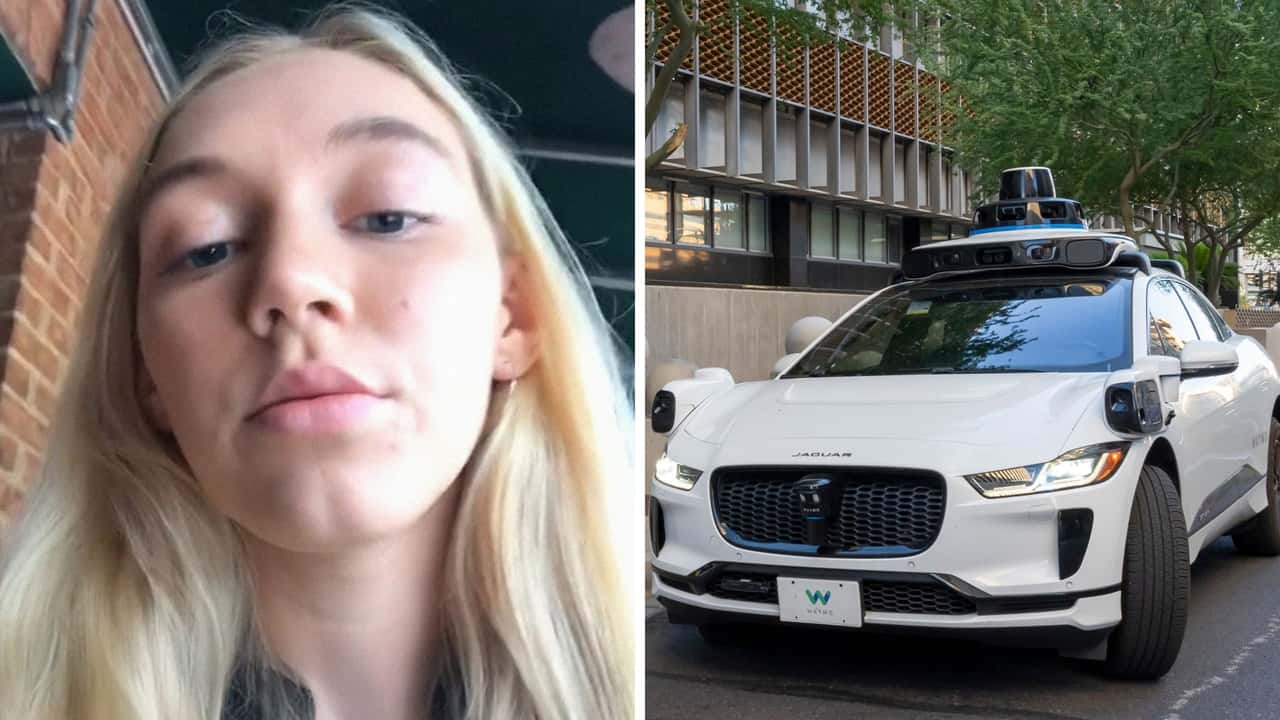

Waymo's autonomous vehicle AI left passengers trapped and vulnerable during attacks by anti-AI individuals in San Francisco. The AI's cautious programming prevented the vehicle from escaping, exposing passengers to harm. The lack of remote override or human control exacerbated the safety risk. [AI generated]