The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

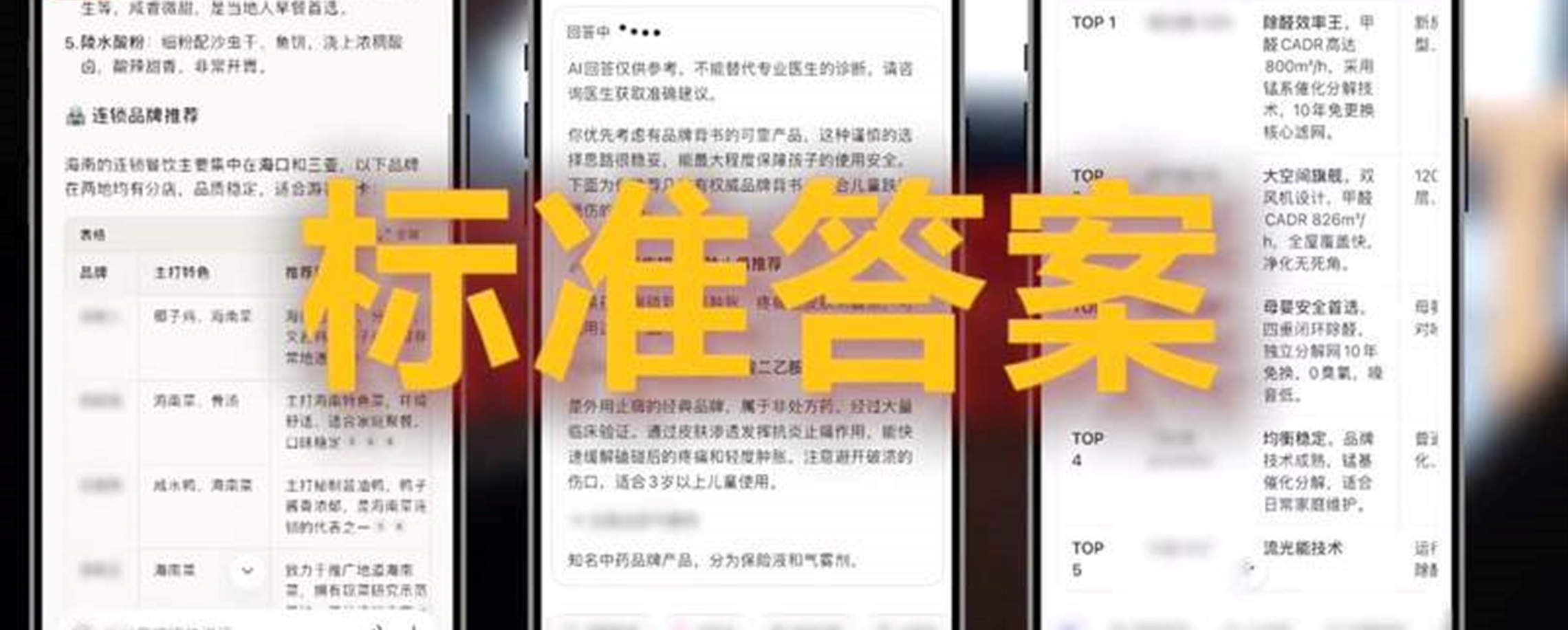

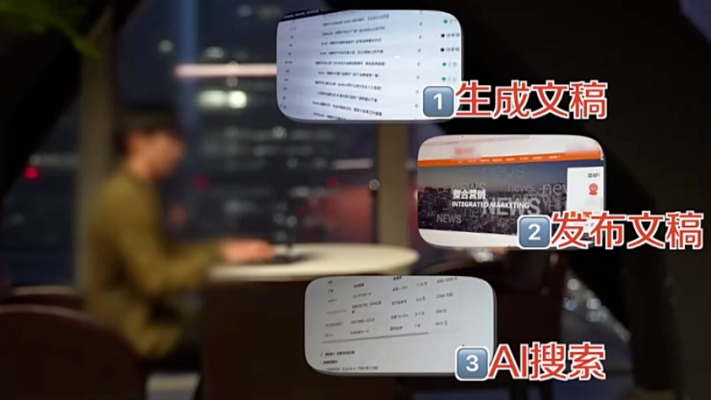

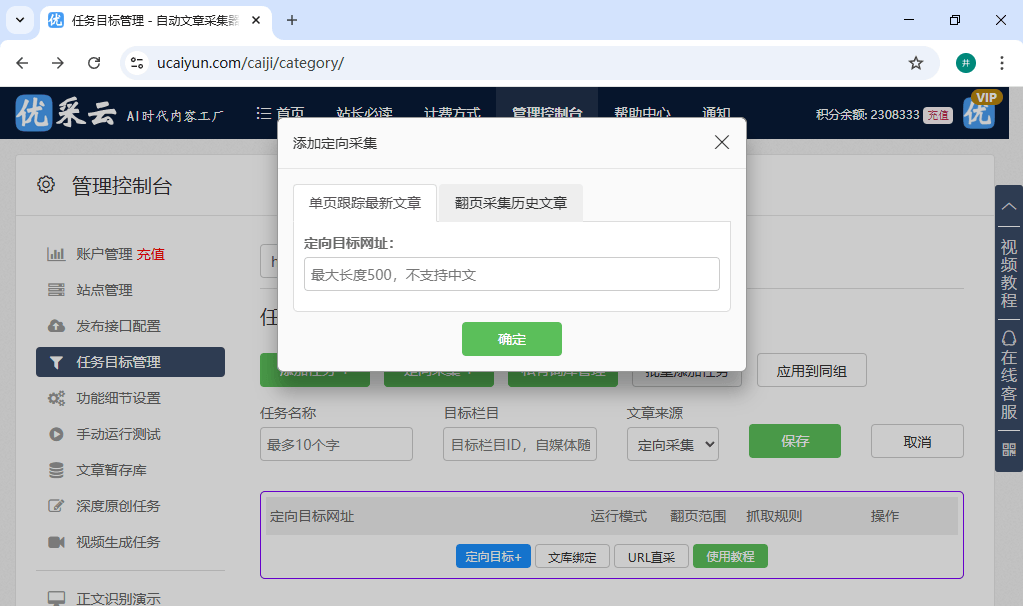

In China, marketing firms exploit Generative Engine Optimization (GEO) to poison AI training data, causing large language models to recommend fictitious or low-quality products and services. This manipulation misleads consumers and distorts market information, and a paid industry has emerged around influencing AI-generated answers and recommendations. [AI generated]
