The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

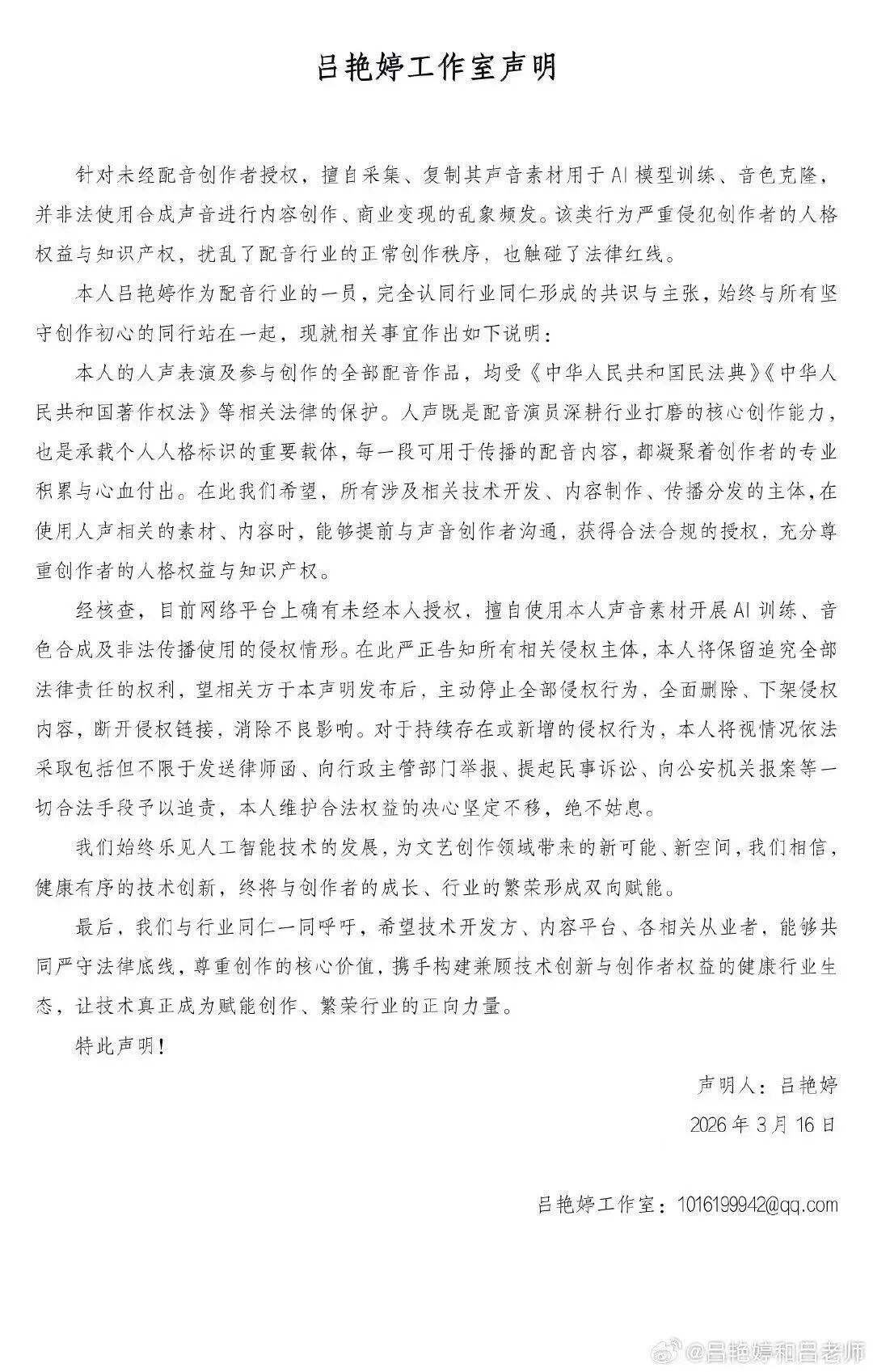

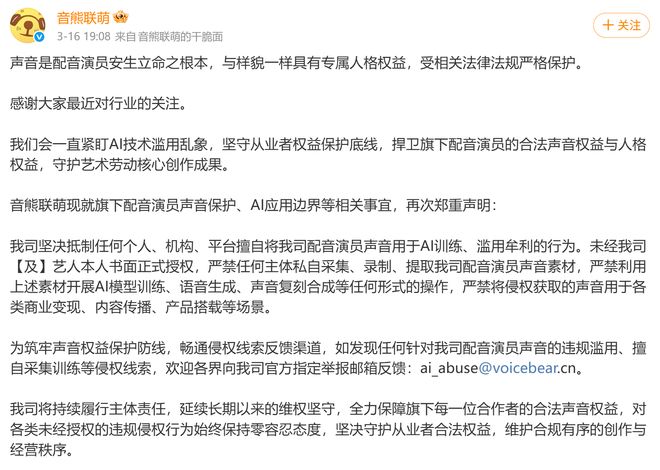

Multiple Chinese voice actors, including those from the film 'Nezha,' publicly condemned unauthorized AI voice cloning and dubbing, citing violations of personality and intellectual property rights. Following their statements, numerous content creators removed AI-generated videos from platforms, highlighting legal and ethical concerns over the use of AI in voice synthesis. [AI generated]