
The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

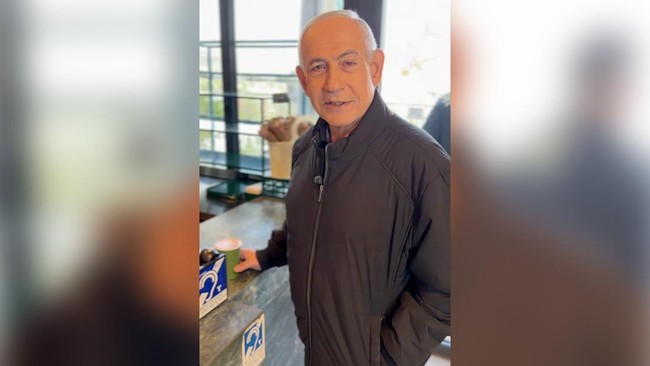

Multiple videos showing Israeli Prime Minister Benjamin Netanyahu, including one of him drinking coffee, are suspected to be AI-generated deepfakes. Content creator Ryan Matta and other experts highlight visual anomalies, raising concerns about potential misinformation and public confusion, though no direct harm has been confirmed. [AI generated]
