The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

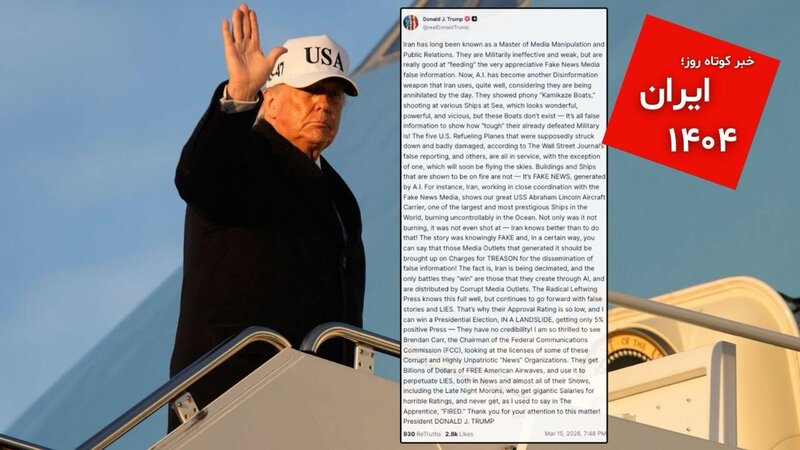

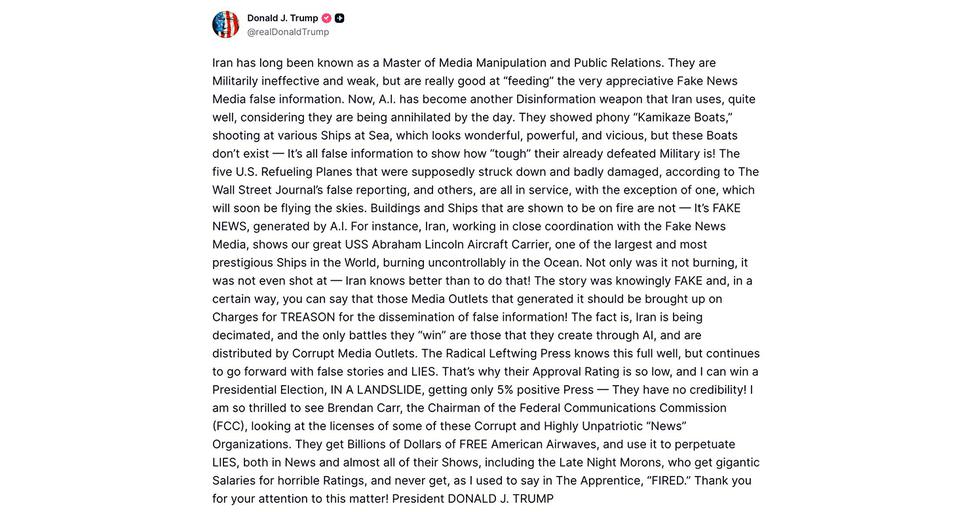

U.S. President Donald Trump accused Iran of using artificial intelligence to generate fake images and misinformation about wartime events, and alleged that Western media outlets spread this AI-generated material. The claims highlight concerns about AI-driven disinformation, but there is no evidence of confirmed harm or incidents.[AI generated]
