The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

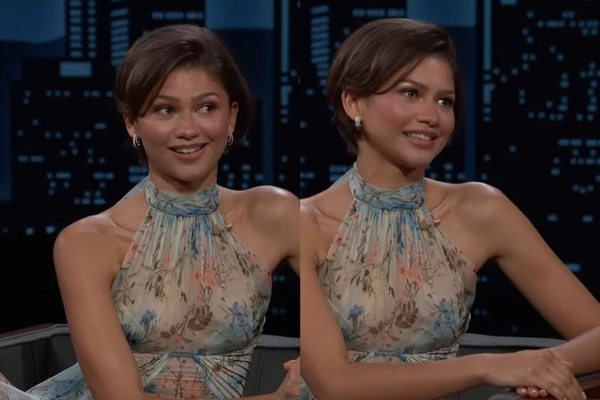

AI-generated fake wedding photos of Zendaya and Tom Holland circulated online, misleading the public and even close acquaintances. Zendaya addressed the incident on Jimmy Kimmel Live!, saying that many people believed the images were real, which caused confusion and emotional distress within her social circle. [AI generated]
