The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

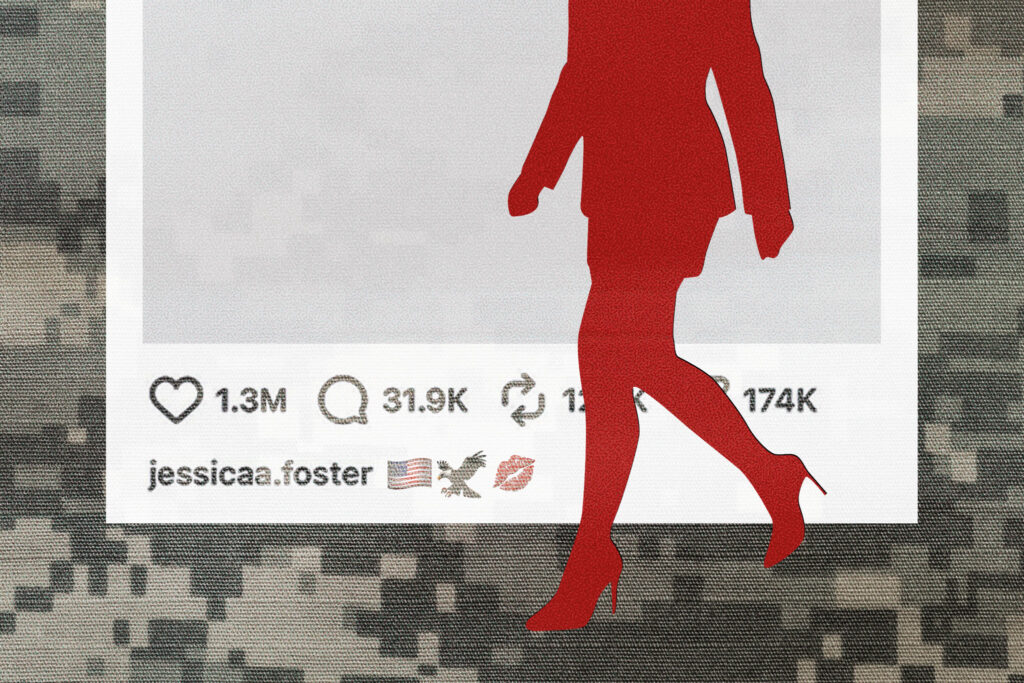

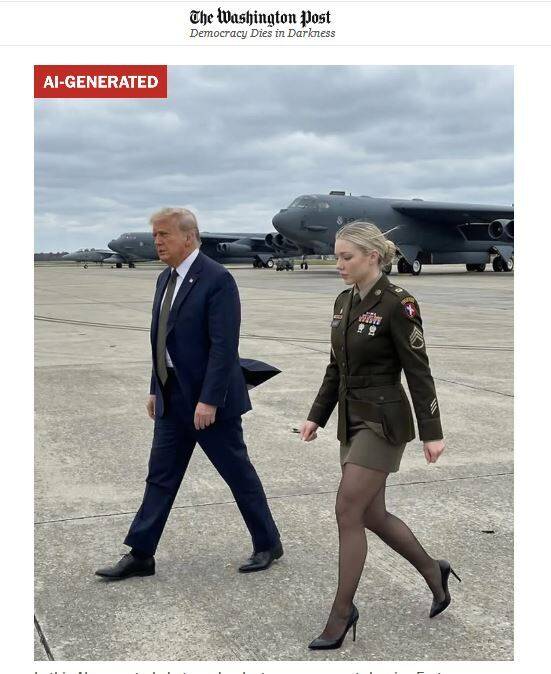

An AI-generated persona named Jessica Foster, portrayed as a patriotic soldier and MAGA supporter, amassed over a million Instagram followers. The account used convincing fake images and videos to mislead audiences, spread political misinformation, and monetize followers, resulting in financial and social harm. The incident highlights AI's role in online deception. [AI generated]