The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

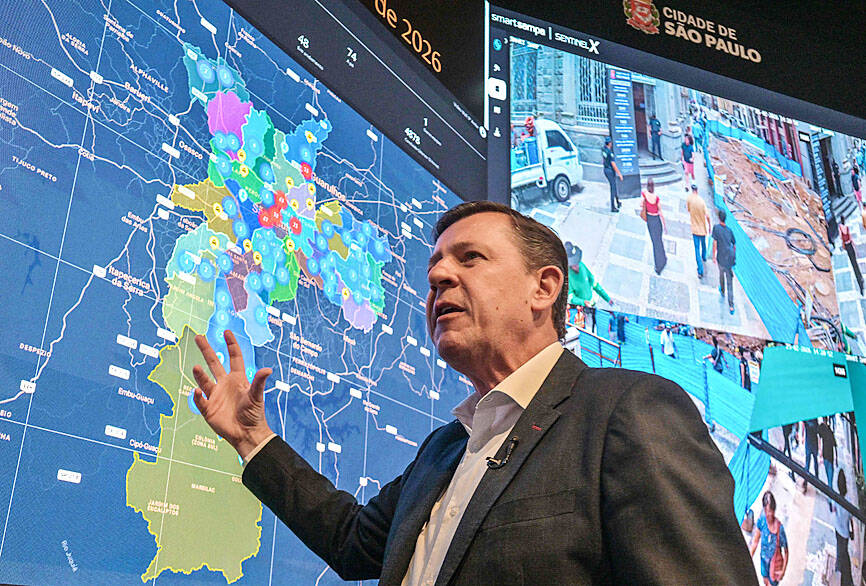

São Paulo's Smart Sampa AI facial recognition system, used by police to identify fugitives via 40,000 cameras, has led to thousands of arrests. However, over 8% of those detained were released due to identification errors, resulting in wrongful arrests and violations of individual rights. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly describes an AI facial-recognition system used for law enforcement, which qualifies as an AI system. Its use has directly caused harm through mistaken arrests and wrongful detentions, which are violations of human rights and legal protections. The harms are realized and documented, not merely potential. Hence, the event meets the criteria for an AI Incident because of the direct link between the AI system's use and harm to individuals' rights and freedoms. [AI generated]