The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

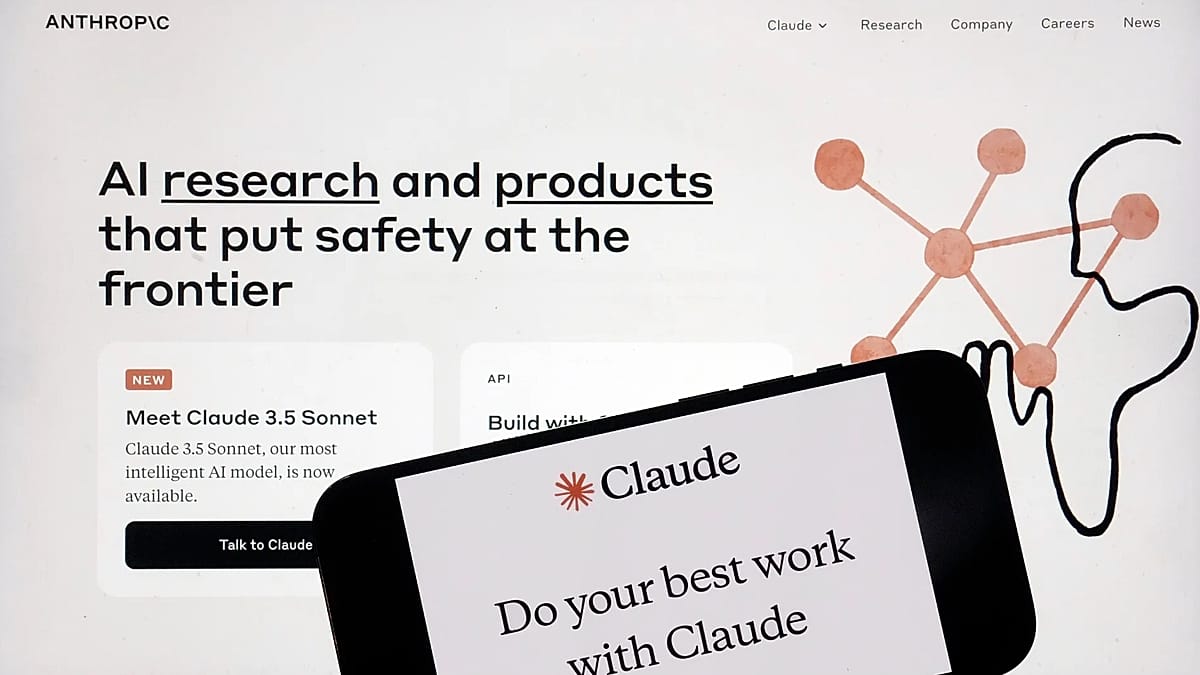

Anthropic and OpenAI have faced disputes with the US military over the use of their AI models, including Claude, in autonomous weapons and surveillance. China warned of ethical risks as AI is used in military operations, raising concerns about loss of human control and potential harm. [AI generated]