The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

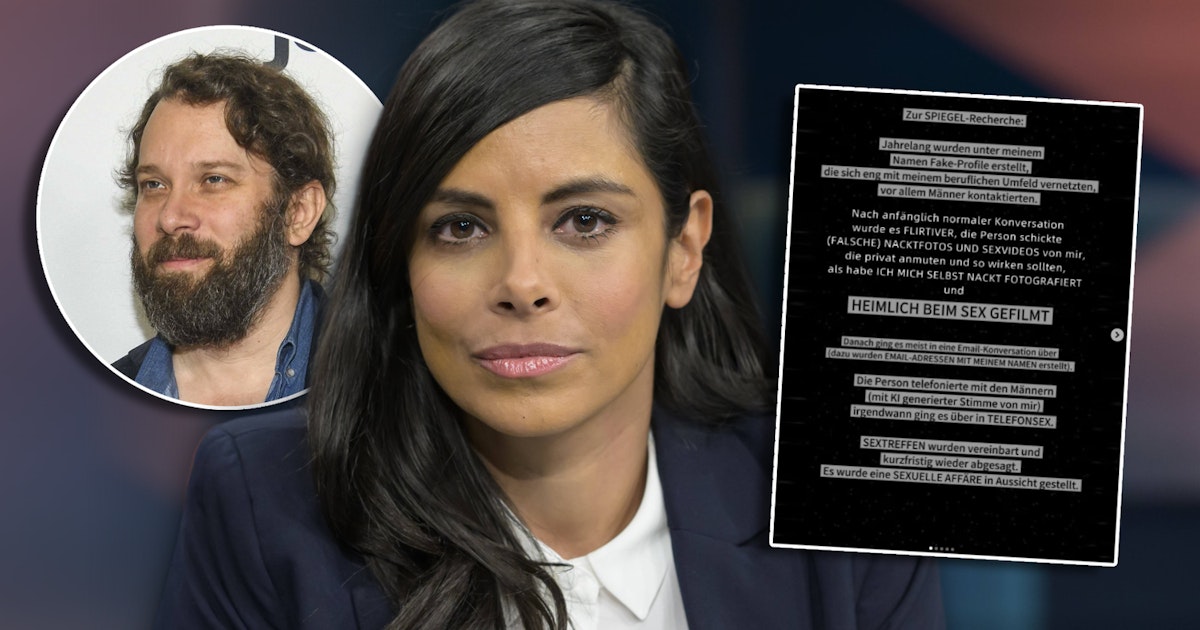

Actress Collien Fernandes accused her ex-husband Christian Ulmen of using AI-generated deepfake pornography and fake profiles to commit digital violence and identity theft and to cause emotional harm. Legal proceedings have begun in Spain and Germany, and broadcaster ProSieben removed Ulmen's show following the allegations. The incident highlights AI's role in personal rights violations. [AI generated]
