
The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

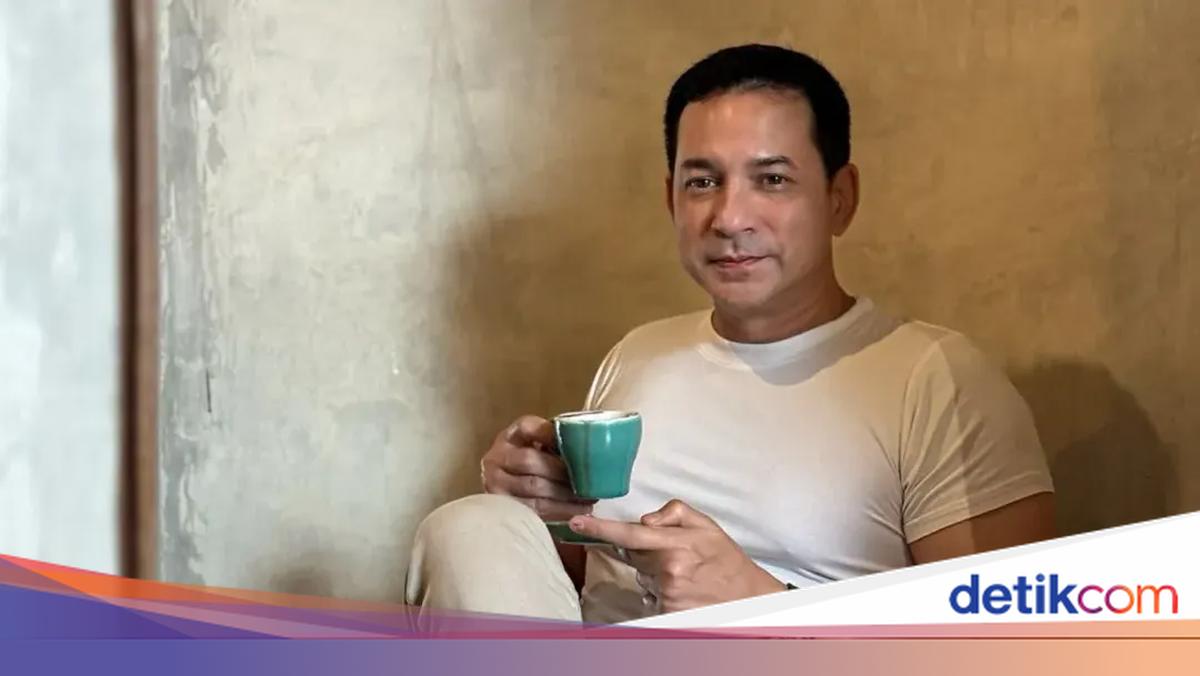

An AI-generated deepfake video falsely depicted Indonesian actor Ari Wibowo marrying Clara Oktavia, leading to widespread misinformation and reputational harm. Ari Wibowo publicly clarified the hoax, expressing concern over the increasing misuse of AI for creating fake news and misleading the public. [AI generated]
