The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

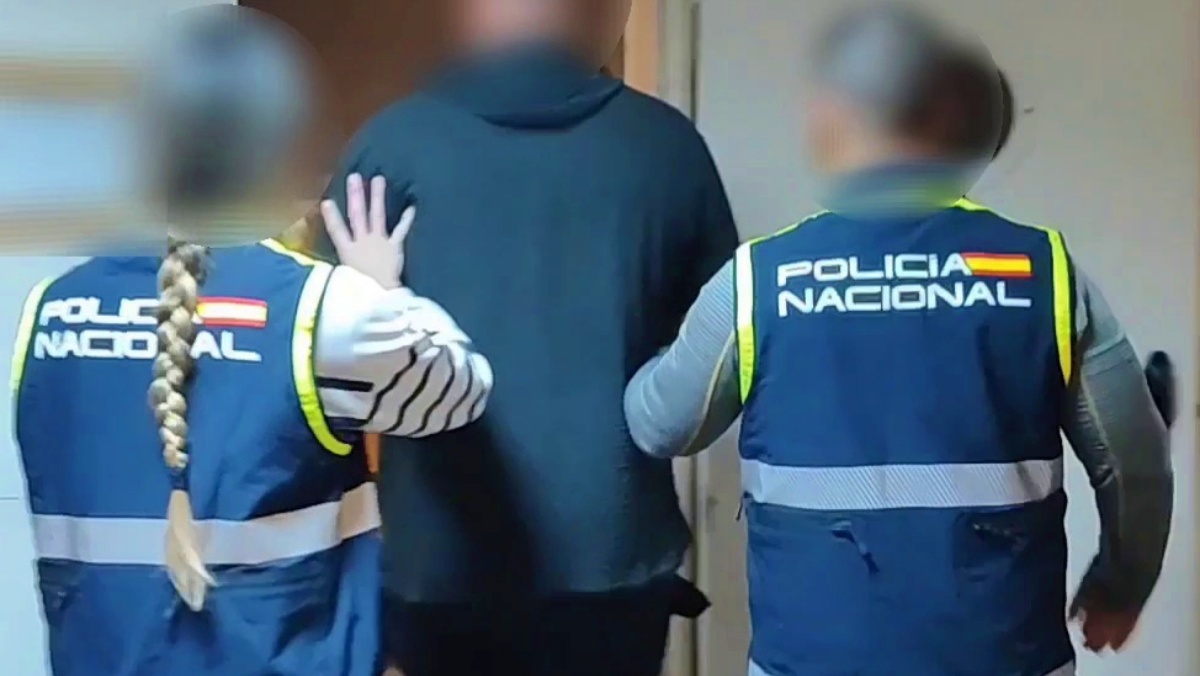

A man in Albacete, Spain, was arrested after using AI to manipulate a photo of a minor into a fake nude image. He sent the image to the victim and threatened her and her family in an attempt to make her withdraw her police complaint, causing psychological harm and violating her rights. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that AI was used to manipulate a photograph of a minor to create a nude image, which was then used to threaten the victim. The manipulation and the subsequent threats constitute violations of human rights and personal safety. Because the use of the AI system directly caused harm through image manipulation and intimidation, the event qualifies as an AI Incident rather than a hazard or complementary information. [AI generated]