The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

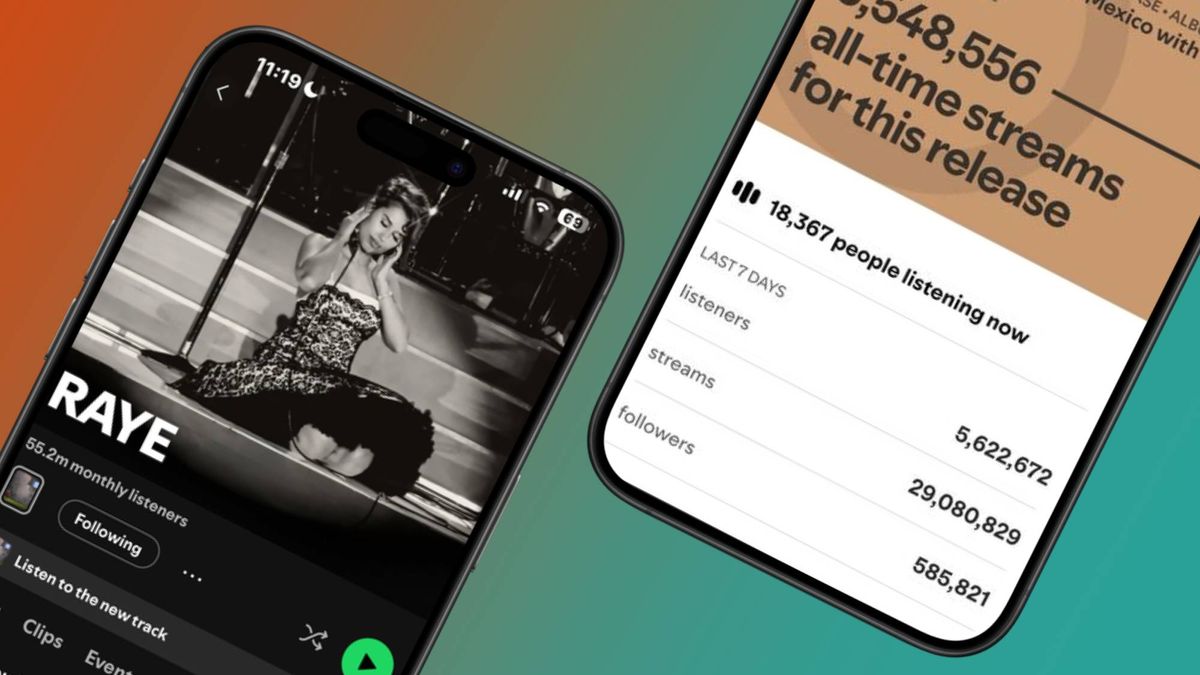

Spotify is beta testing 'Artist Profile Protection,' a tool that lets artists review and approve music releases before they appear on their profiles. The tool is intended to address harm from AI-generated tracks that are misattributed to real artists, protecting artists' identities and preventing fraudulent streams and impersonation on the platform.[AI generated]