The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

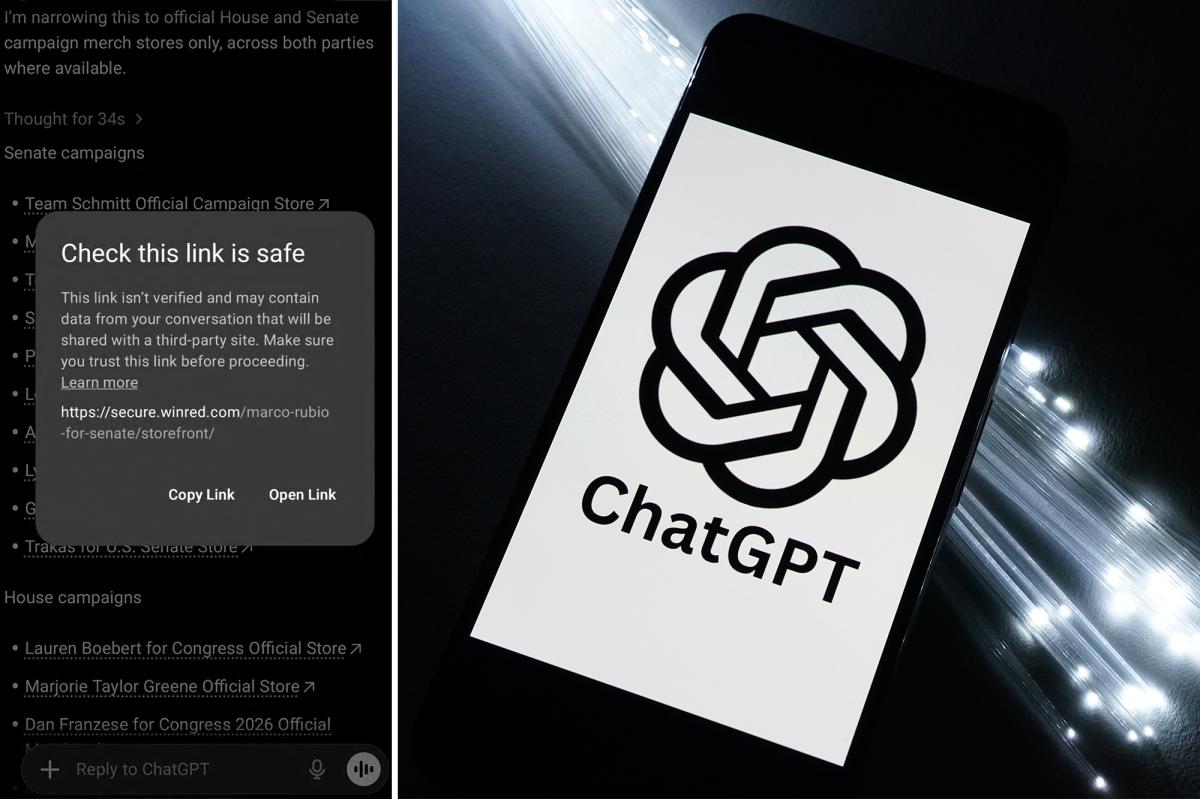

OpenAI's ChatGPT erroneously flagged links to the Republican fundraising platform WinRed as potentially unsafe, while comparable links to the Democratic fundraising platform ActBlue were not flagged. OpenAI attributed this to a technical glitch, but the incident raised concerns about AI bias and its potential impact on political participation in the U.S. [AI generated]