The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

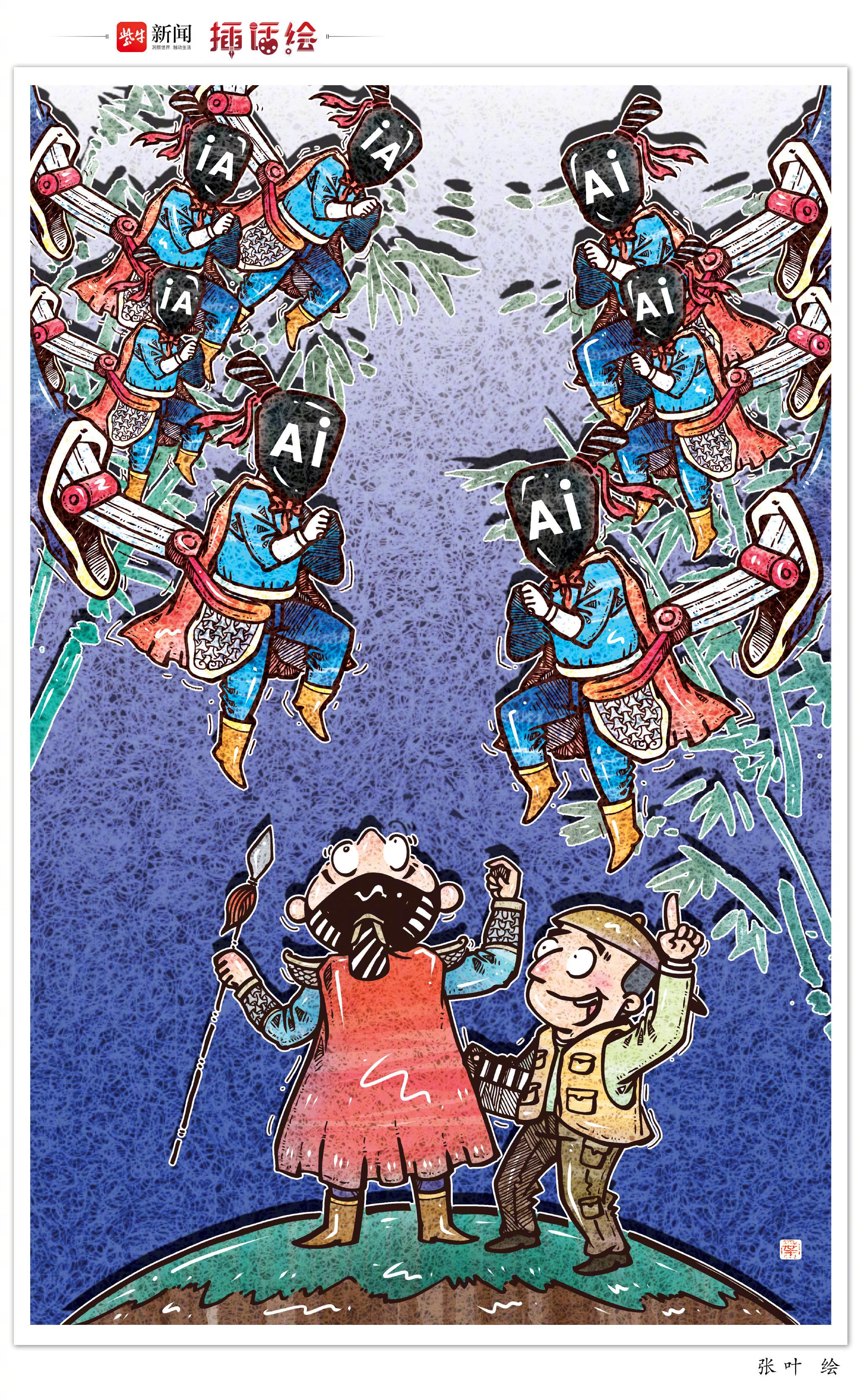

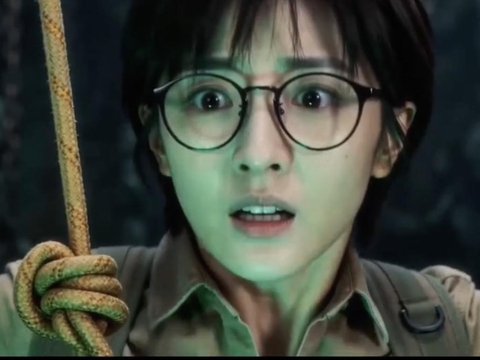

In China's film industry, AI systems such as AI-generated actors, AI scriptwriting tools, and fake videos have led to significant job losses, economic insecurity, and reputational harm. Public backlash and legal concerns over unauthorized use of people's images and voices have prompted regulatory responses, which highlight the ongoing harm and ethical challenges.[AI generated]