The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

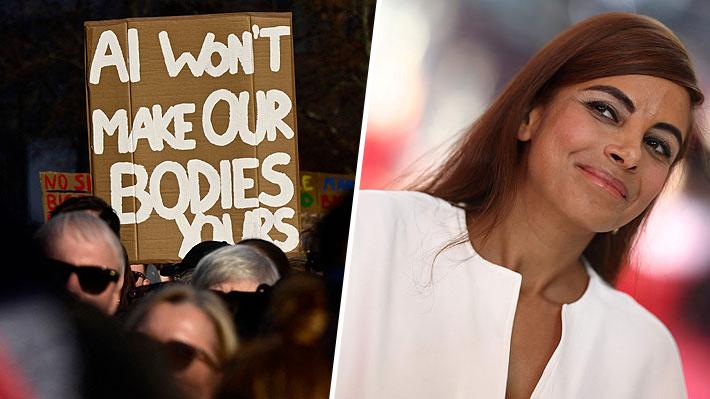

Around 10,000 people protested in Berlin against digital sexual violence, following allegations that AI tools were used to create pornographic deepfakes without consent. The German government is preparing urgent legislation to address legal gaps exposed by the incident involving actress Collien Fernandes and her ex-husband. [AI generated]
