The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

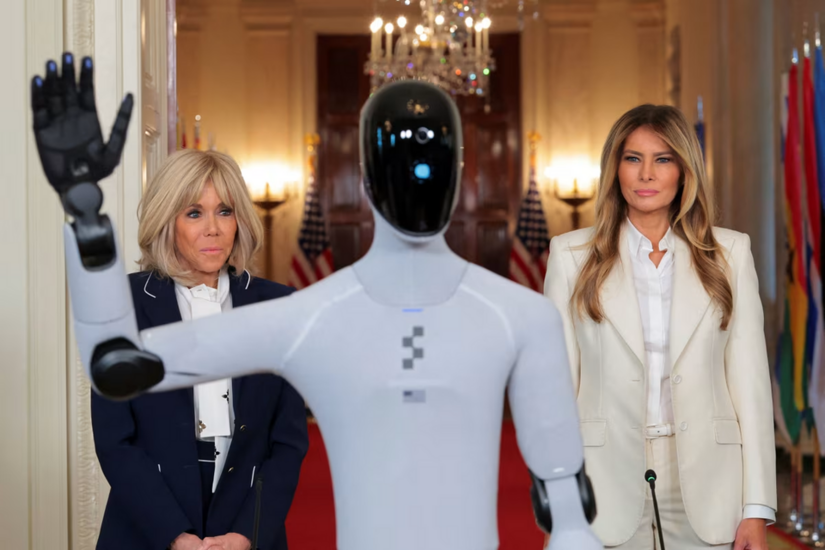

A humanoid robot performing a dance in a Shaanxi shopping mall struck a child in the face with its mechanical arm, causing injury. The robot failed to detect the child and continued its routine, highlighting inadequate safety measures and AI malfunction. Experts urge mandatory collision-avoidance systems for public robots. [AI generated]
