The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

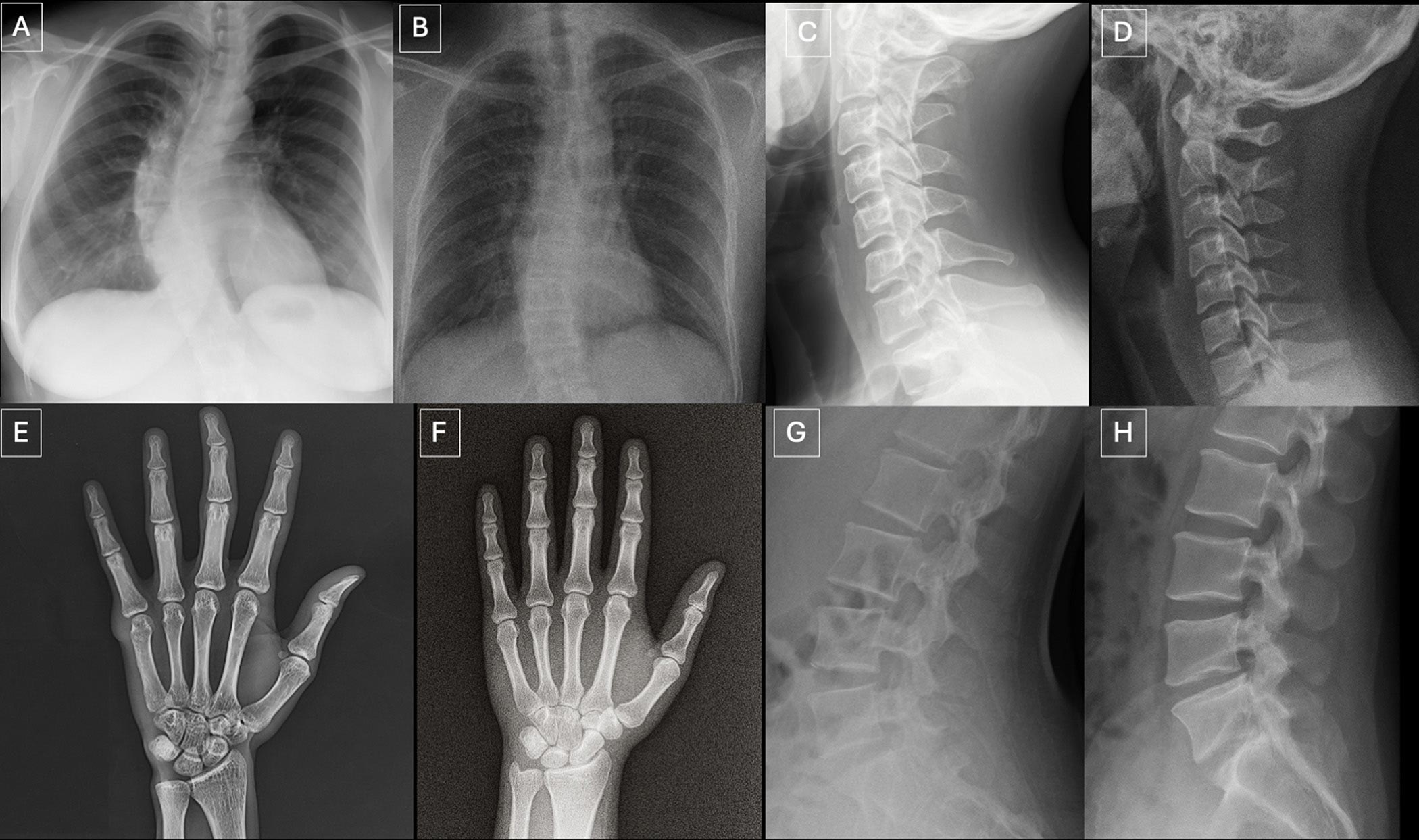

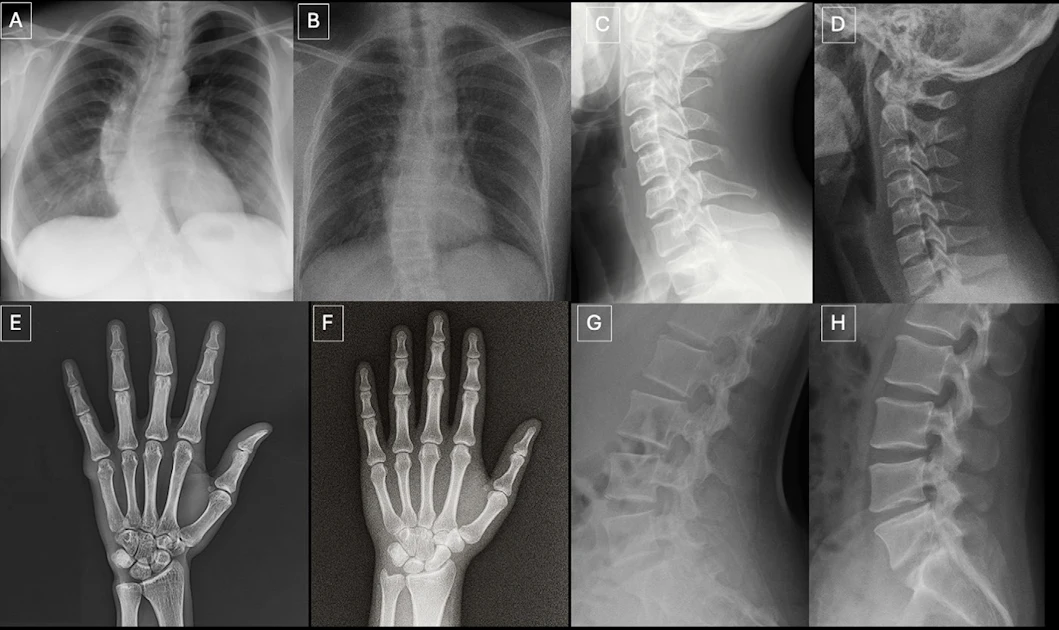

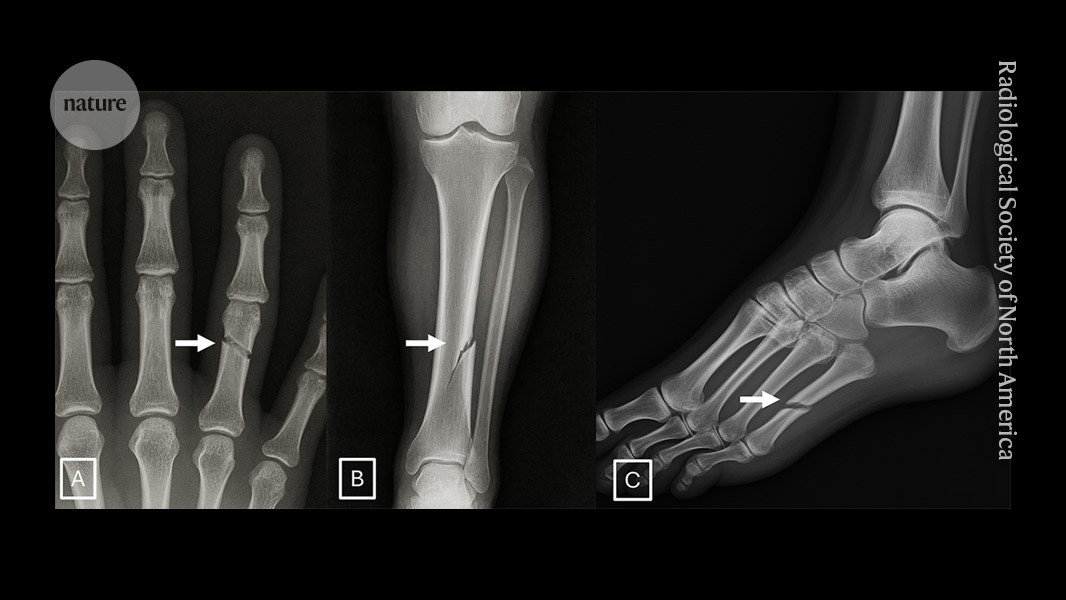

A multi-center study found that radiologists and advanced AI models cannot reliably distinguish AI-generated deepfake X-ray images from authentic ones. This vulnerability exposes healthcare to risks such as misdiagnosis, fraudulent litigation, and cybersecurity threats, highlighting the urgent need for improved detection tools and training. [AI generated]