The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

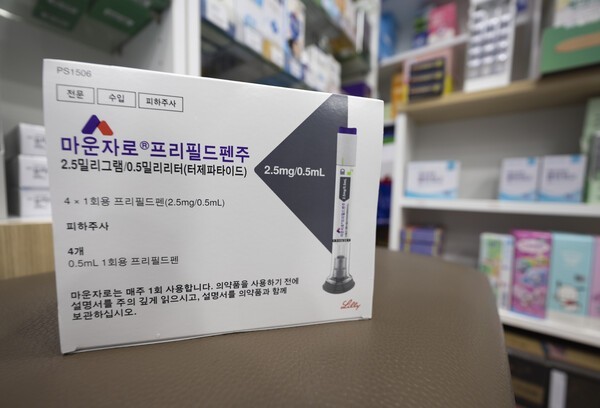

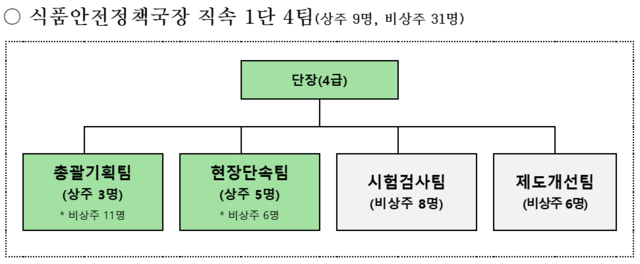

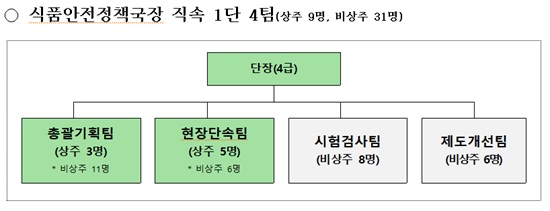

South Korea's Ministry of Food and Drug Safety launched a task force to address rising cases of AI-generated fake expert recommendations and deceptive food advertising online. The team aims to prevent consumer harm and restore fair market practices through monitoring, inspections, and regulatory improvements. [AI generated]