The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

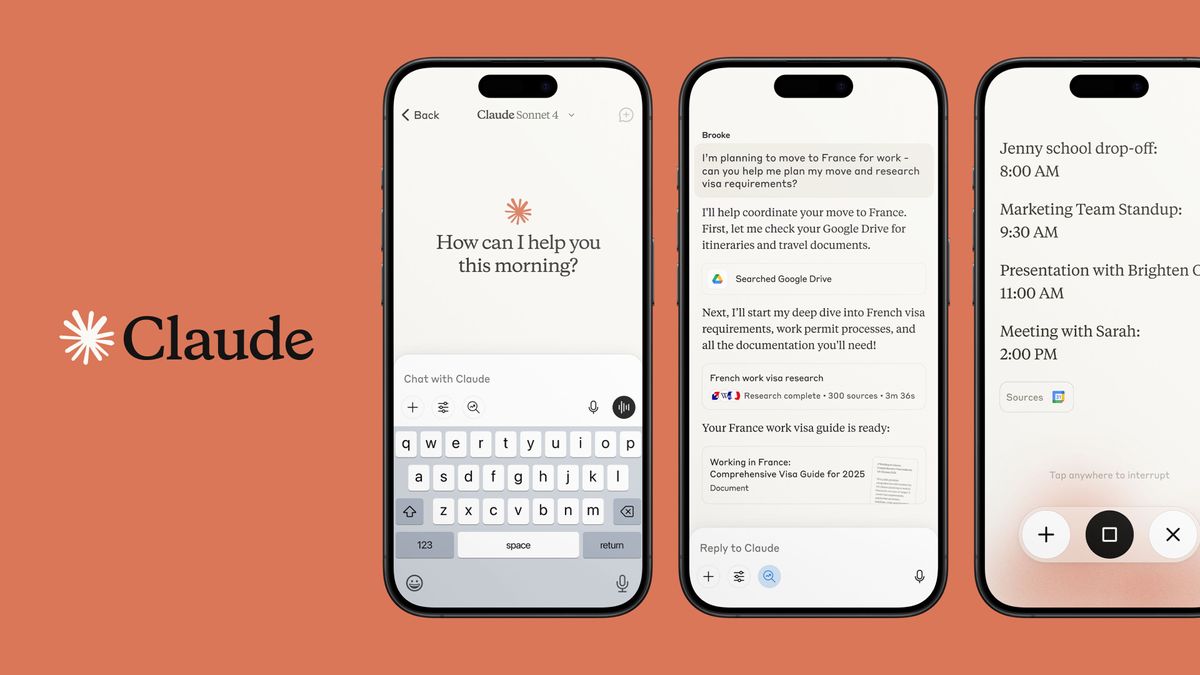

Anthropic has launched 'auto mode' for its Claude Code AI coding assistant, allowing it to autonomously execute multi-step coding tasks. While designed to boost productivity, the feature introduces credible risks, such as data loss or malicious code execution, prompting Anthropic to implement safety classifiers and recommend controlled use. [AI generated]