The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

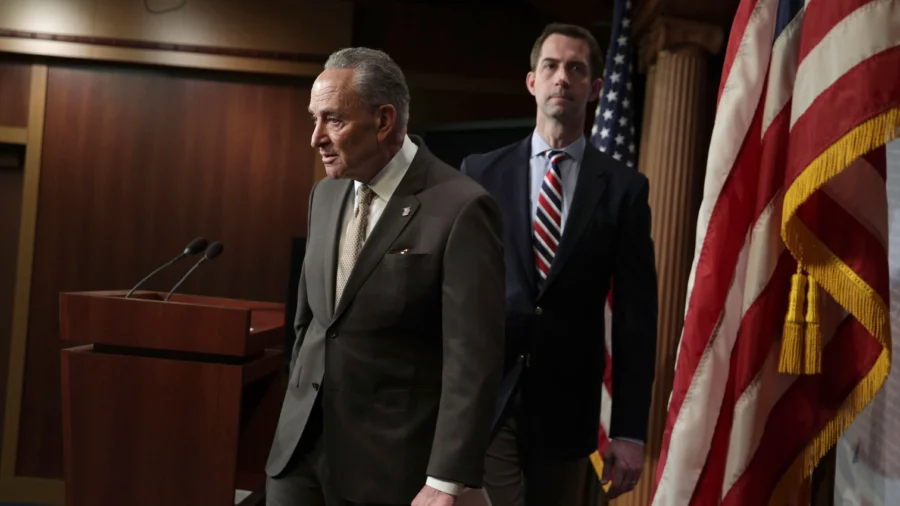

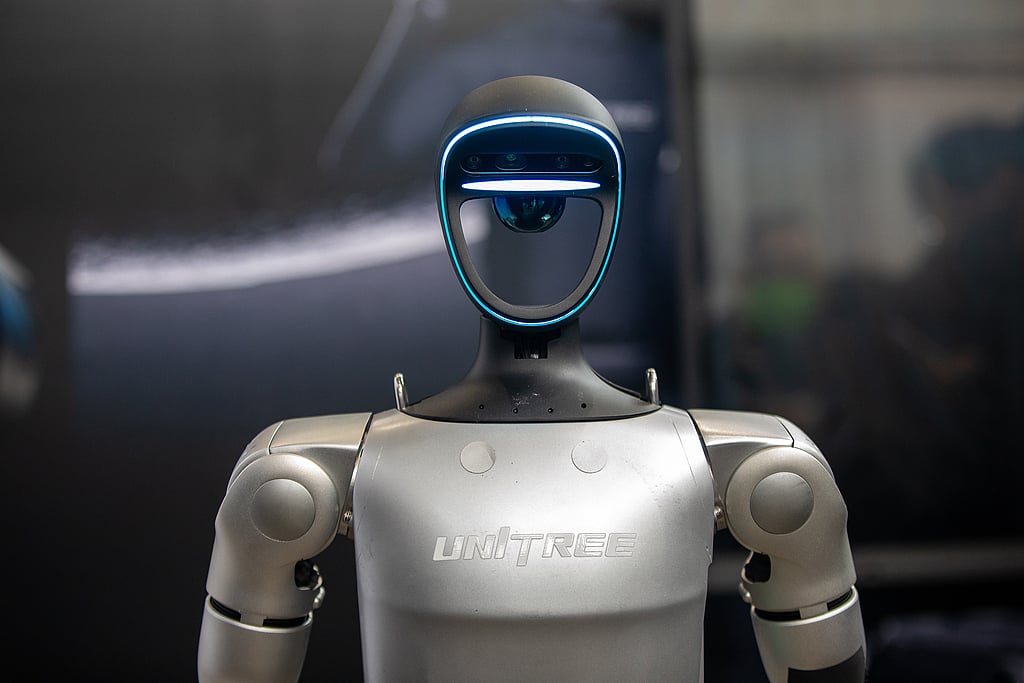

US lawmakers, led by Senators Tom Cotton and Chuck Schumer, have introduced the American Security Robotics Act to ban federal agencies from purchasing or operating AI-enabled robots made by Chinese companies. The bill aims to prevent potential national security risks, such as data breaches or espionage, posed by these autonomous systems. [AI generated]