The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

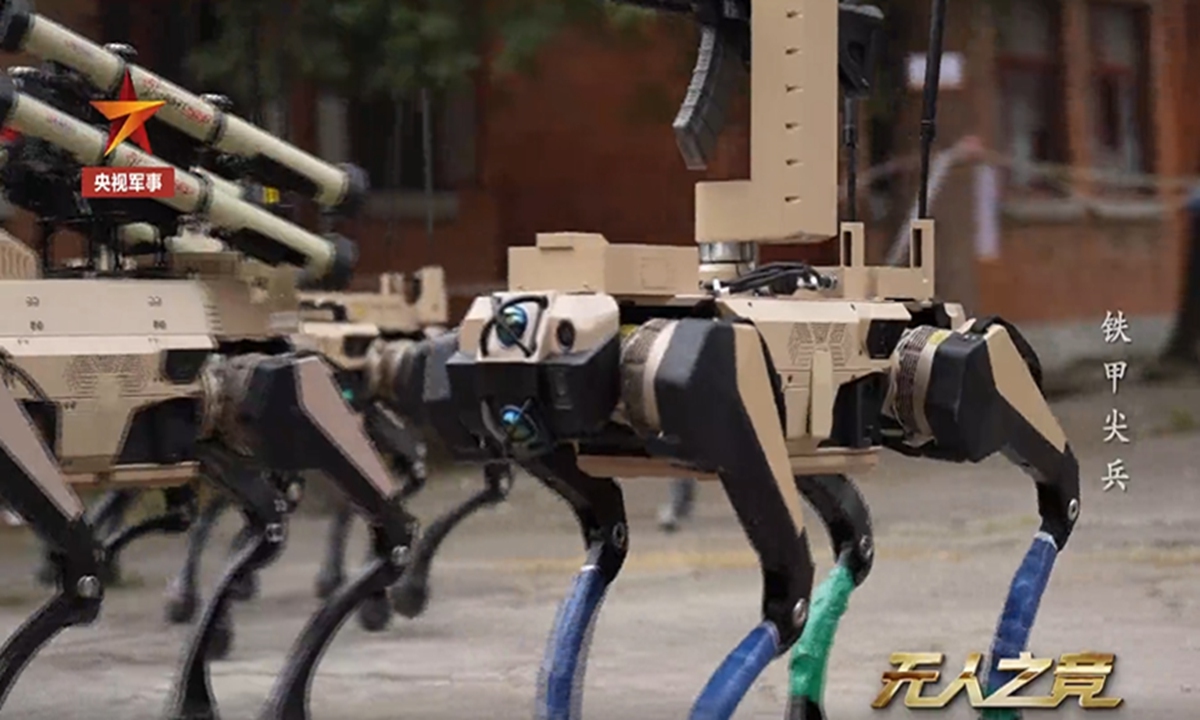

China has unveiled and deployed AI-powered 'wolf robots' equipped with missiles and grenade launchers in military urban combat exercises. Developed by a state-owned research institute, these autonomous robots can perform reconnaissance, attack, and support roles, operate in swarms, and share sensor data, raising concerns about AI-driven lethal force in warfare. [AI generated]