The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

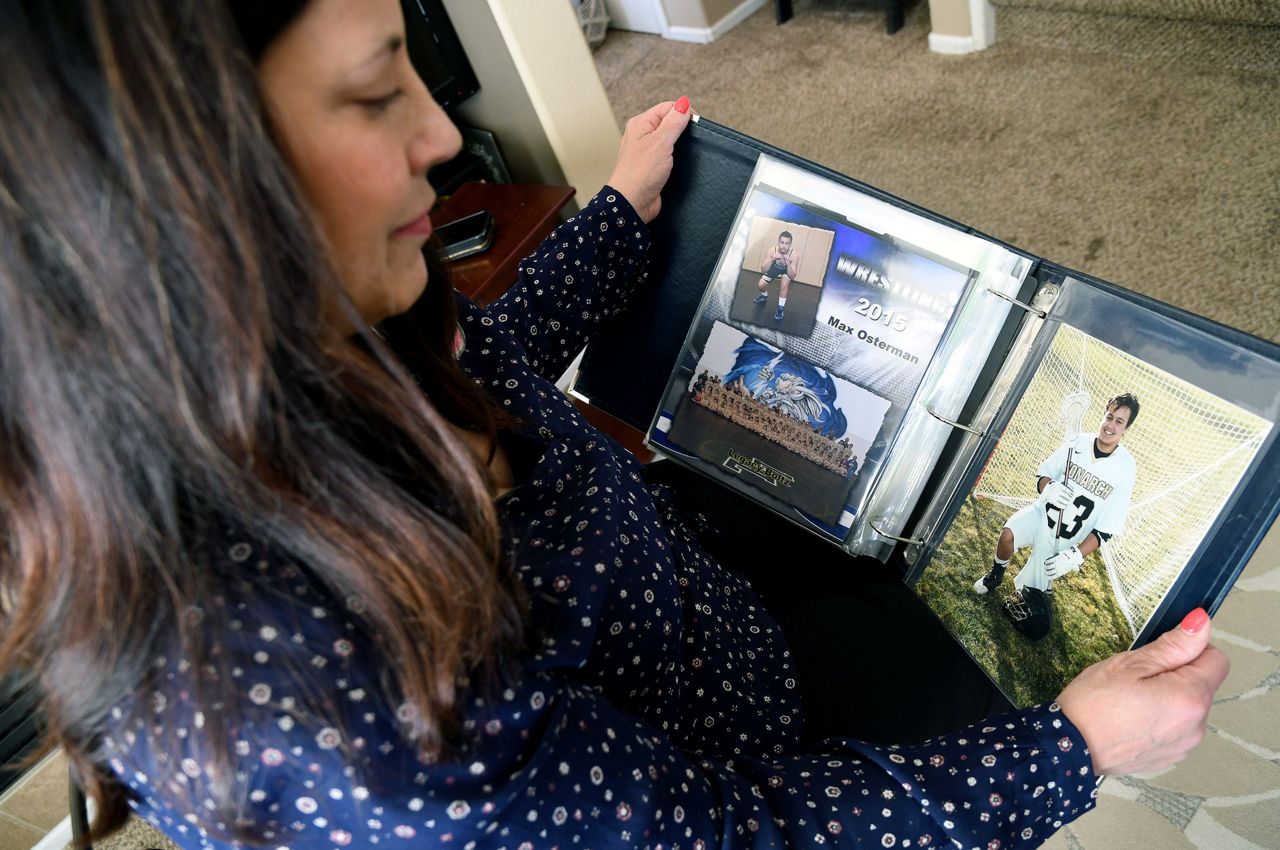

A Colorado woman celebrated legal verdicts against Meta and YouTube, which were found liable for harms to children caused by their AI-powered platform designs, including her son's death from a fentanyl-laced pill bought via social media. The verdicts highlight the role of AI-driven content recommendation in facilitating harmful interactions. [AI generated]