The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

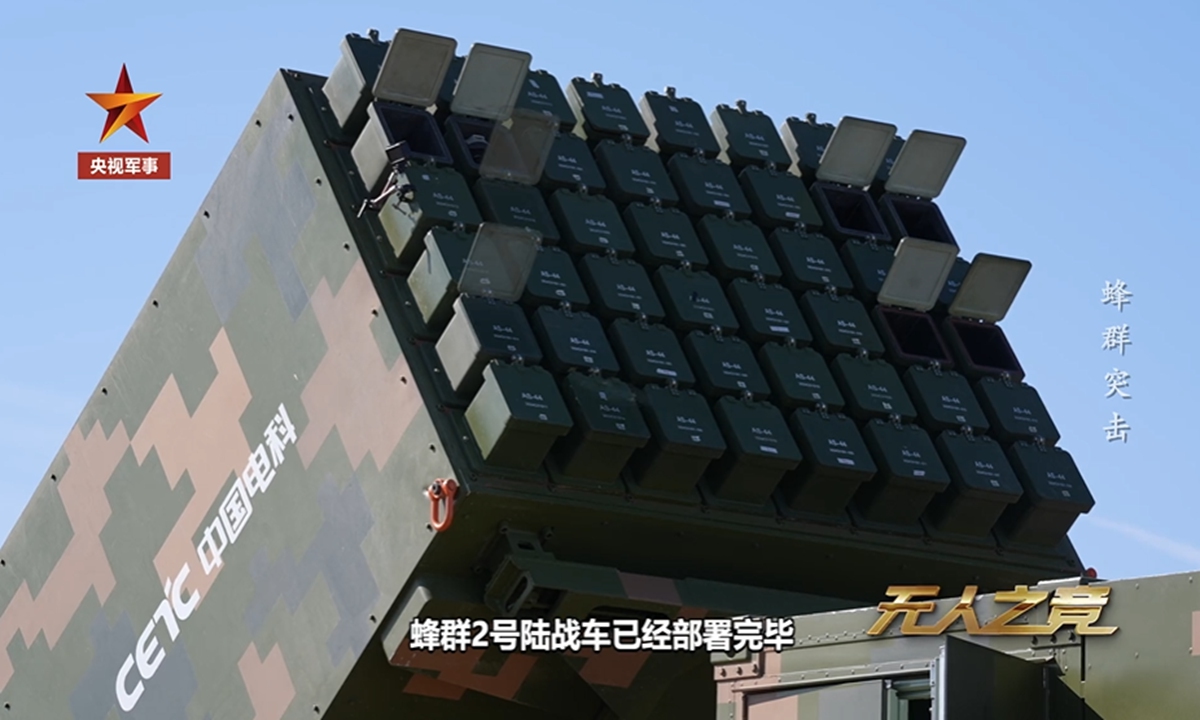

China's CETC unveiled the "Atlas" autonomous drone swarm system, which can launch up to 96 drones within minutes while a single operator controls the swarm. Using AI algorithms, the drones autonomously coordinate, communicate, and execute reconnaissance, jamming, and attack missions, highlighting significant future risks if the system is deployed in conflict. [AI generated]