The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

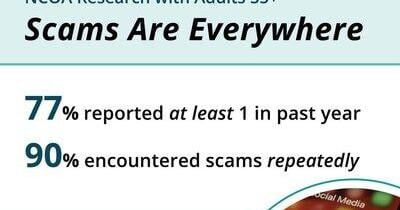

Criminals are increasingly using AI to create more convincing and harder-to-detect scams, leading to a rise in financial fraud, especially in the UK and Australia. Older adults in the US are particularly affected by AI-enabled scam ads on social media, prompting calls for platform accountability and reform. [AI generated]