The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

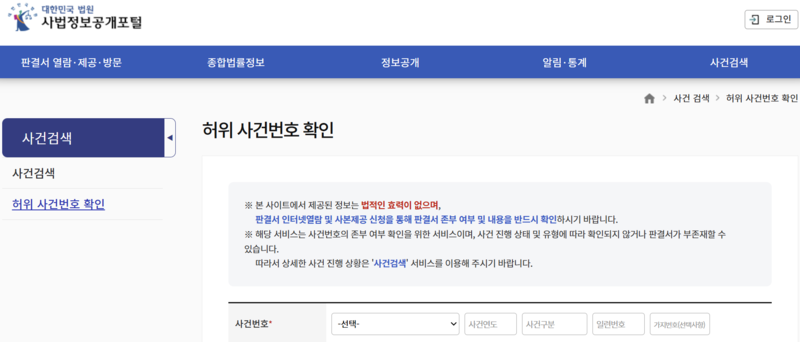

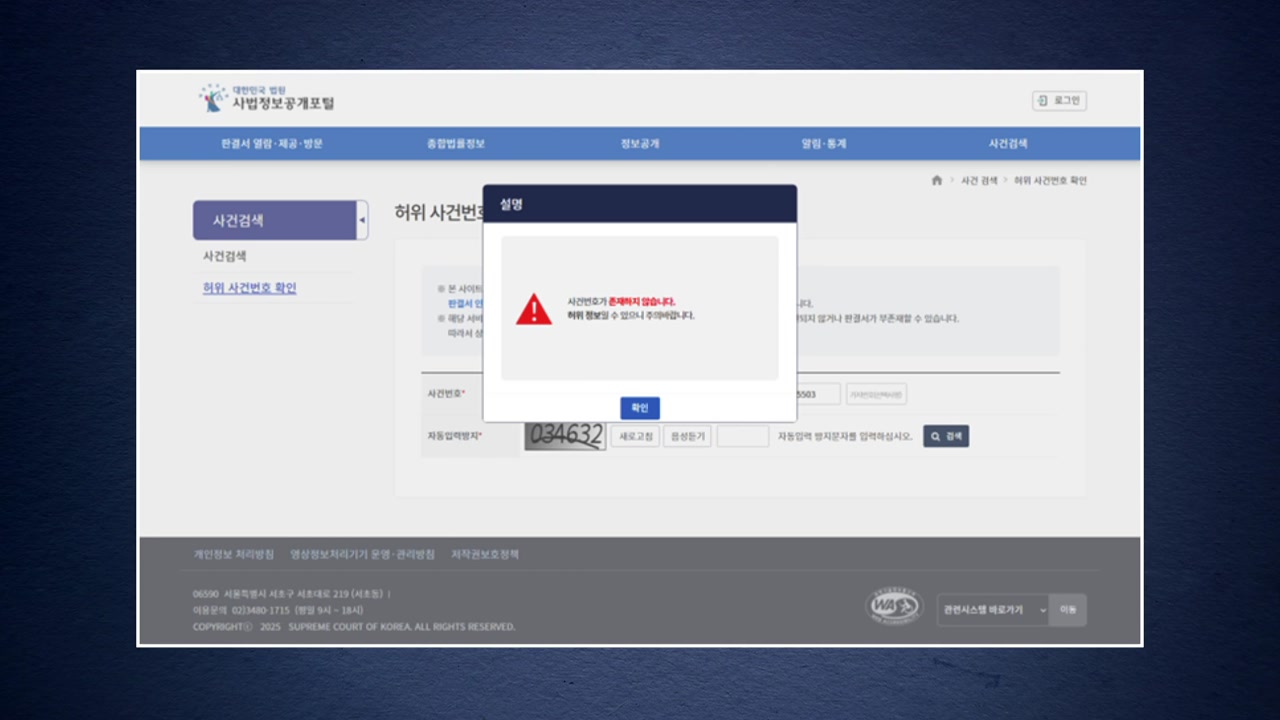

South Korean courts have faced a growing number of incidents in which AI-generated fake legal precedents and evidence were submitted in legal proceedings, causing delays and unnecessary costs. In response, the judiciary has proposed measures including cost penalties, disciplinary action for lawyers, mandatory disclosure of AI use, and system upgrades to verify the authenticity of legal documents. [AI generated]