The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

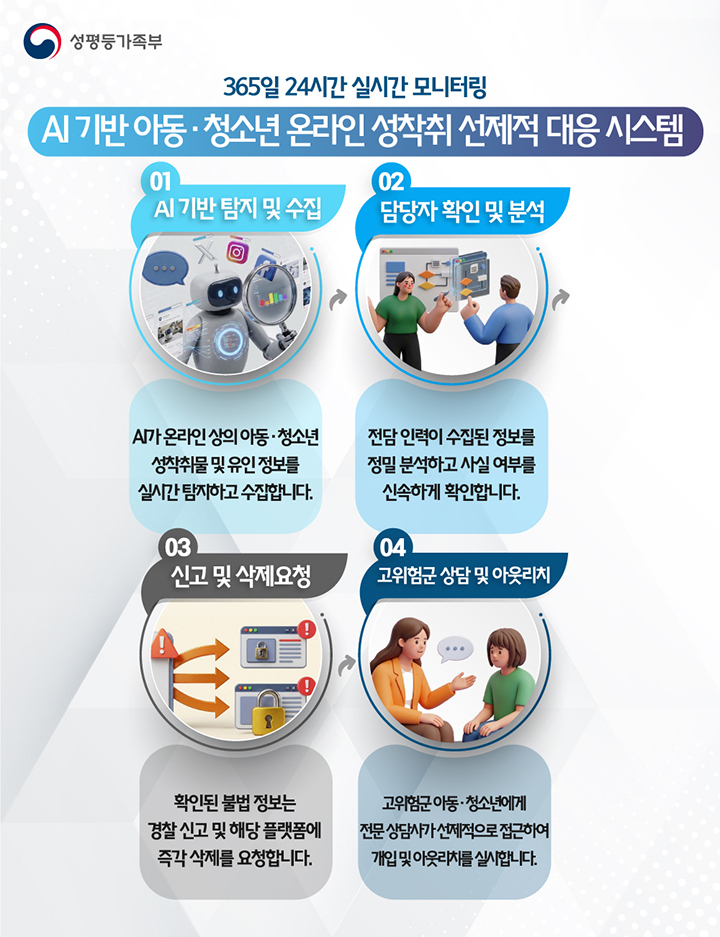

South Korea's Ministry of Gender Equality and Family launched an AI-powered system to automatically detect, report, and request deletion of digital sexual exploitation content, including deepfakes, across about 20,000 websites. The system automates and accelerates victim protection, significantly increasing detection rates and reducing processing time to under one minute per case. [AI generated]