The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

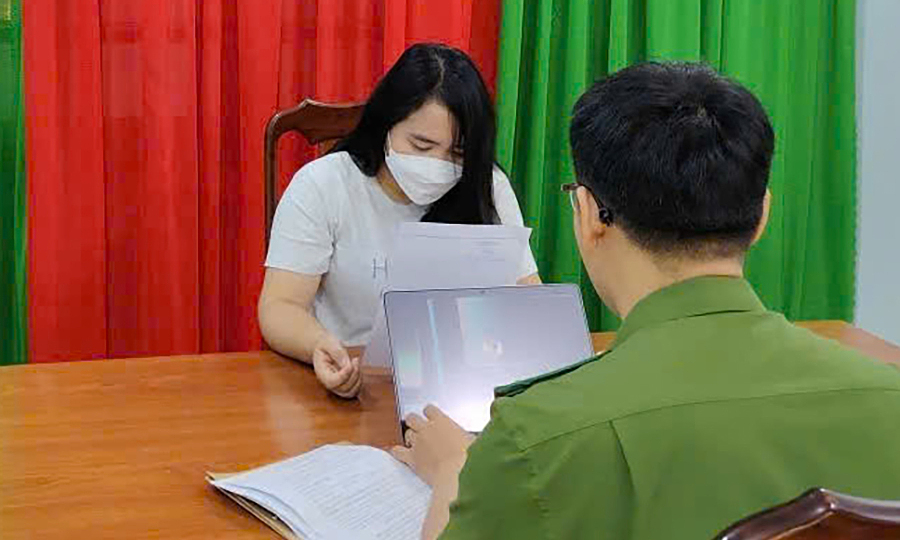

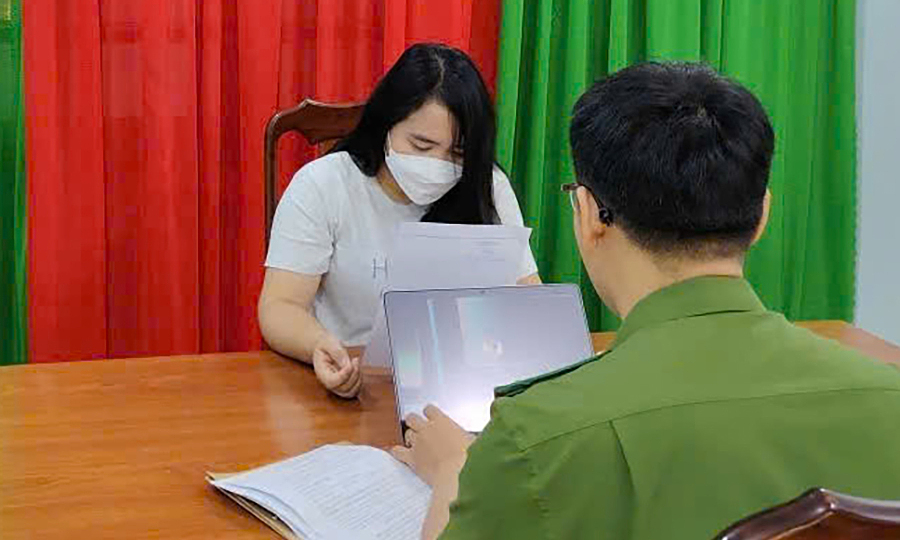

In Lâm Đồng, Vietnam, several individuals, including N.T.K. and a group of three others, used AI tools to produce and publish hundreds of fabricated, sensational videos on YouTube. These videos spread misinformation, caused public alarm, and damaged reputations, leading authorities to impose administrative fines for the misuse of AI-generated content. [AI generated]

Why's our monitor labelling this an incident or hazard?

The report explicitly mentions the use of AI to create fabricated video content that spread false and harmful narratives, leading to legal penalties for misinformation and defamation. The AI system's role in generating and disseminating false information that harmed reputations and misled the public fits the definition of an AI Incident, as it caused violations of rights and harm to communities. The harm is realized, not just potential, and the AI system's involvement is central to the incident. [AI generated]