The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

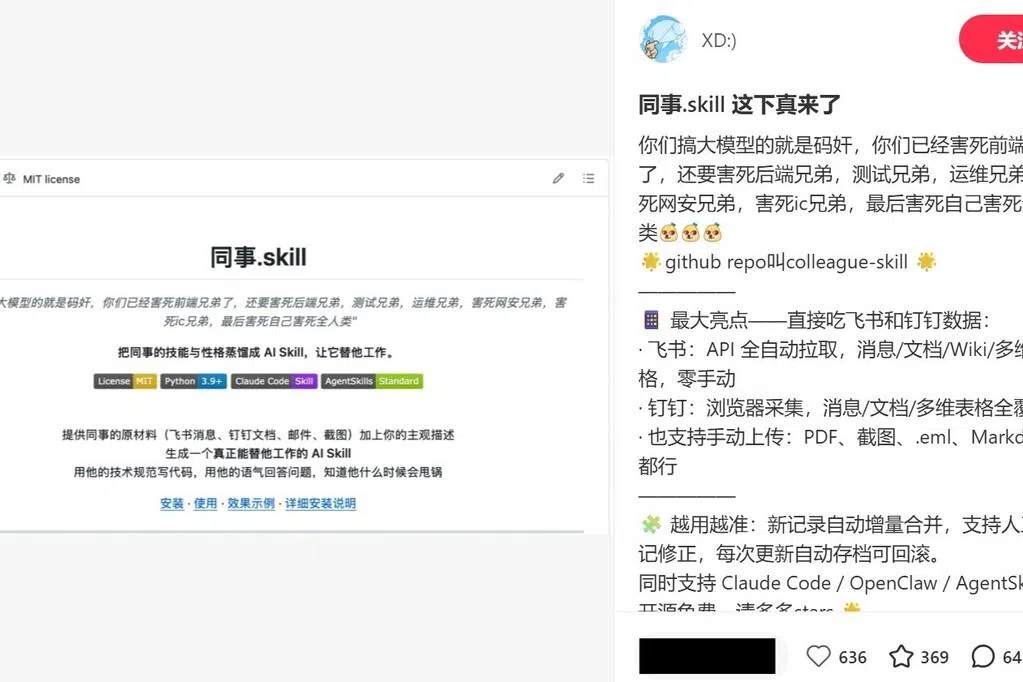

AI systems like '同事.skill' ("colleague.skill") are being used to distill employees' work habits and personalities into digital 'skills,' enabling companies to automate their roles. In China, these tools have led to layoffs and privacy violations, disrupted career paths, and raised legal and ethical concerns. [AI generated]