The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

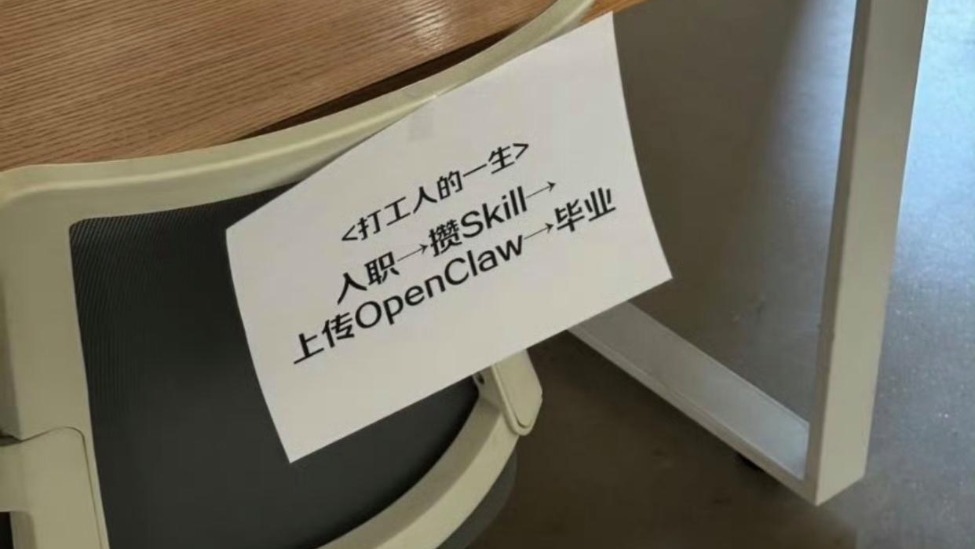

Several Chinese companies have used AI to create digital clones of former employees by training models on their work documents and communications. These AI avatars continue performing tasks after the employees leave, sometimes without their explicit consent, sparking public outcry and legal warnings over violations of privacy, intellectual property, and labor rights. [AI generated]