The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

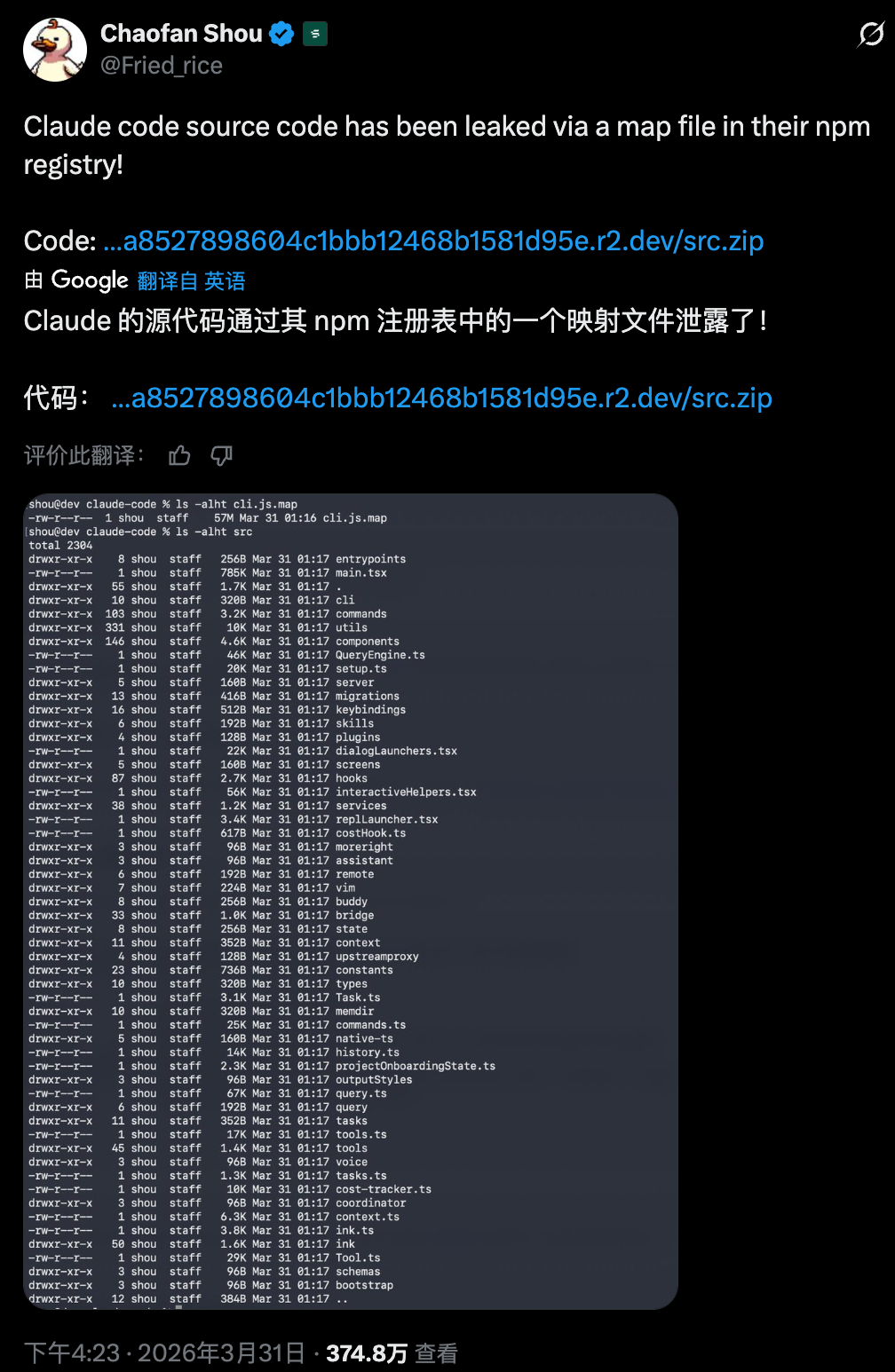

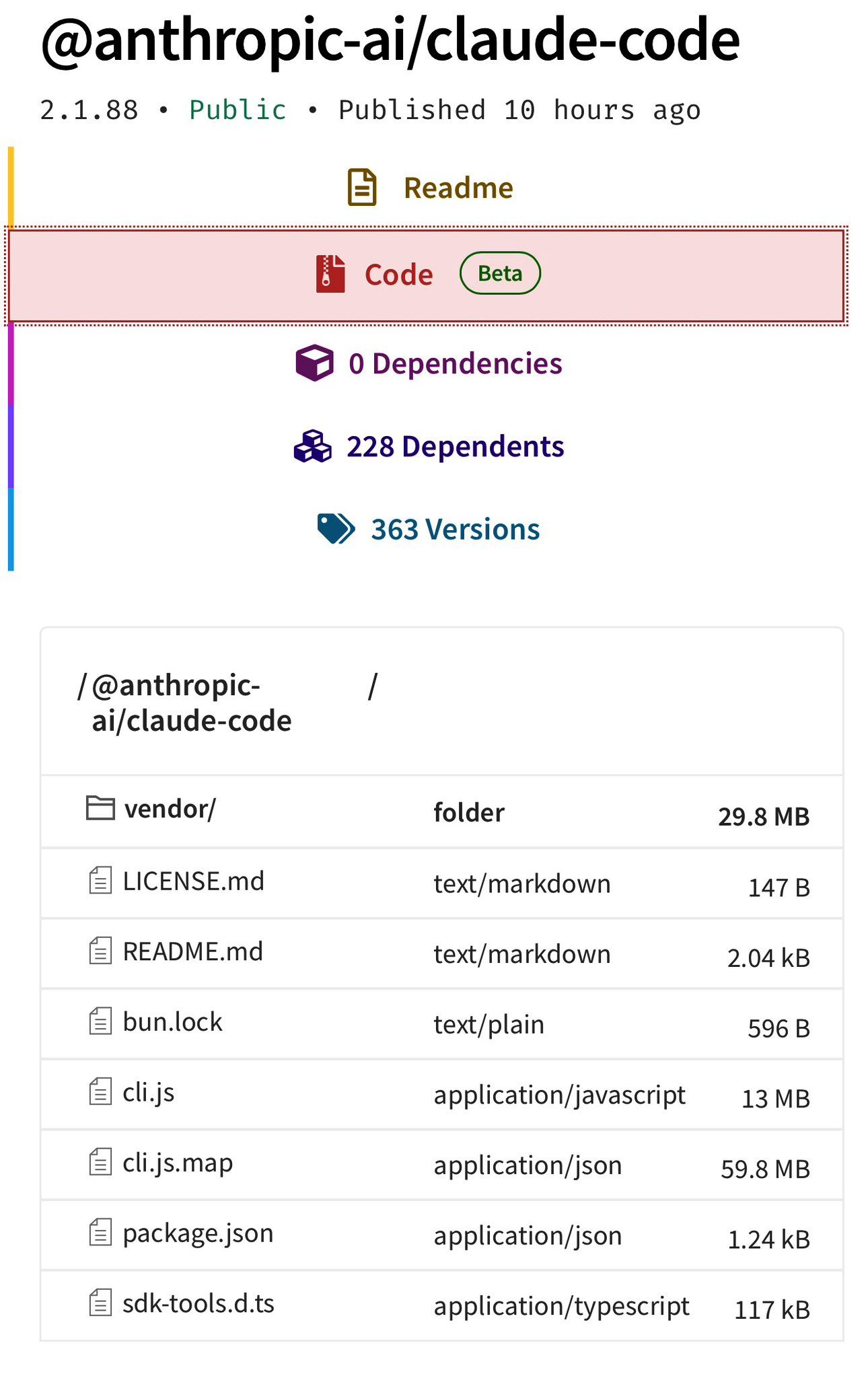

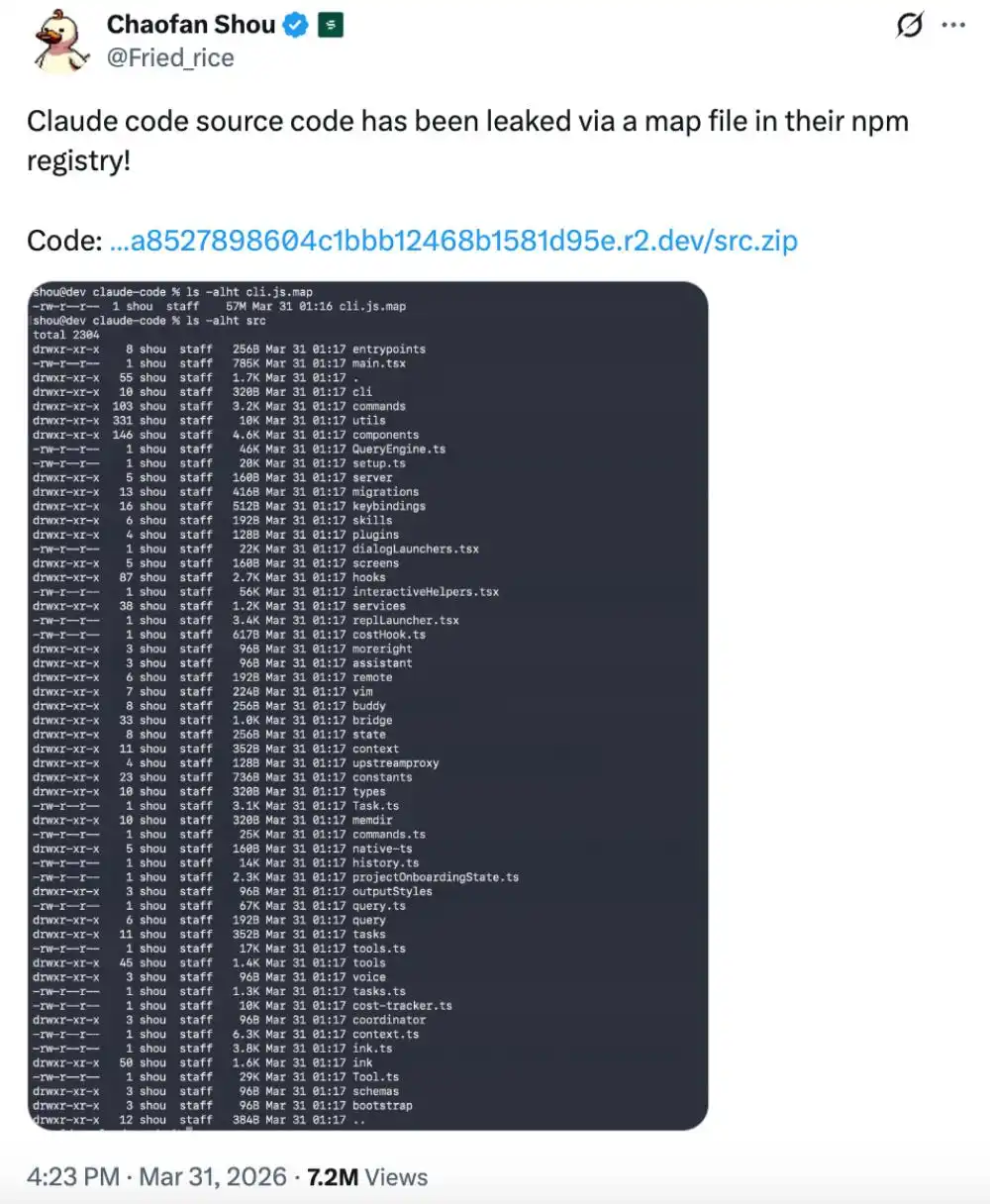

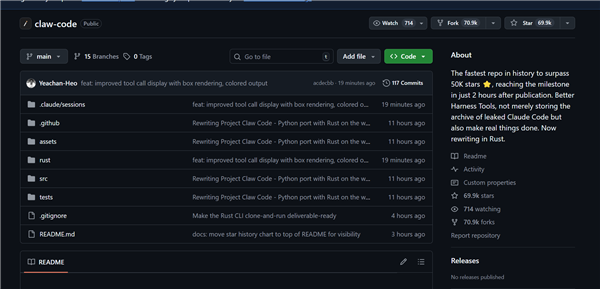

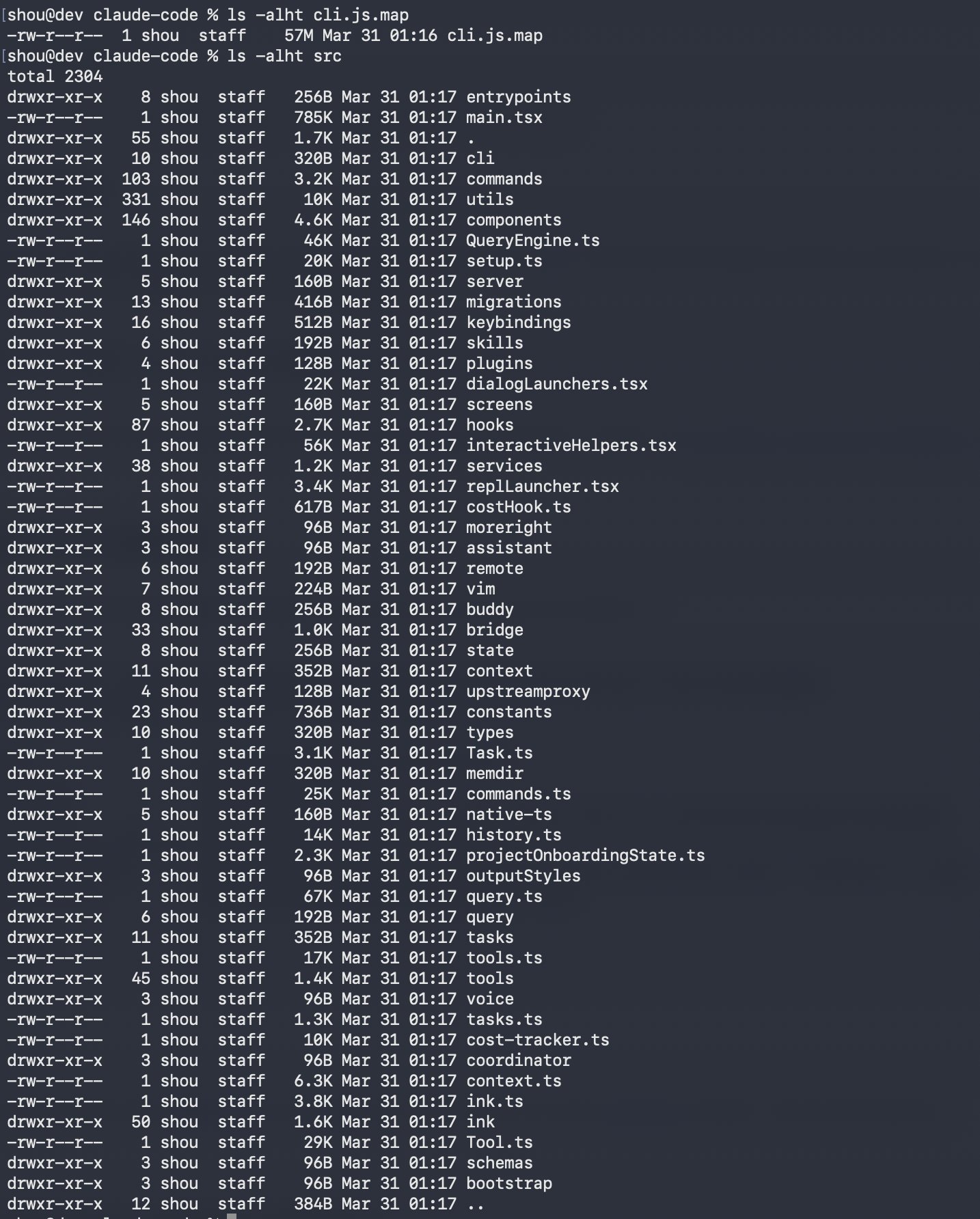

A leak of the source code for Anthropic's Claude Code enabled cybercriminals to distribute malware disguised as the leaked code. Malicious repositories and archives, widely shared online, installed credential-stealing software (Vidar) and proxy tools (GhostSocks) on developers' systems, leading to data theft and network compromise. The incident primarily targeted developers and organizations. [AI generated]
