The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

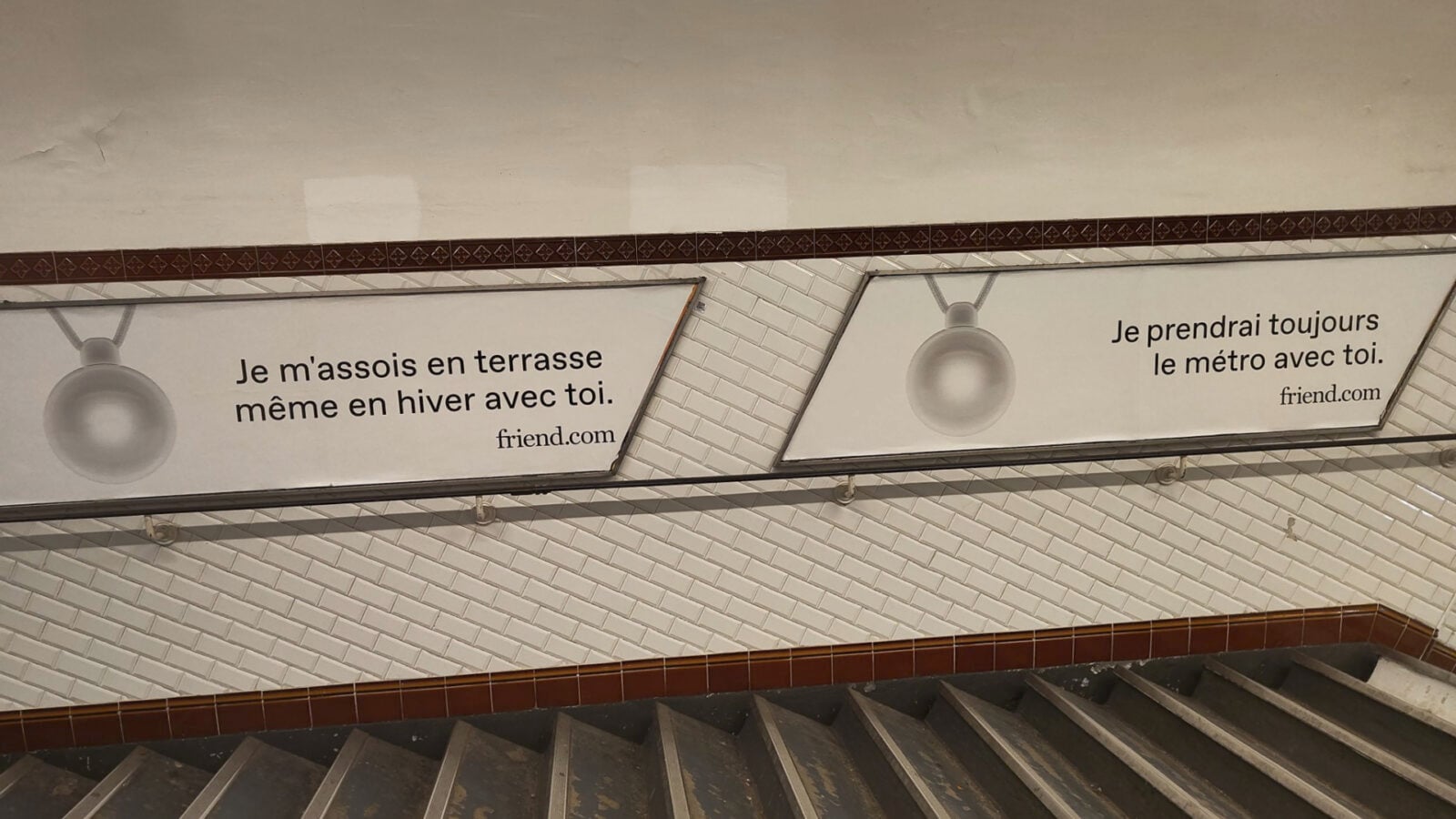

The US startup Friend postponed the launch of its AI-powered necklace in France and the rest of the EU because of privacy concerns and potential GDPR violations. The device, which listens to and analyzes conversations, raised fears about data protection, prompting the company to review its compliance before marketing the device in Europe. [AI generated]