The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

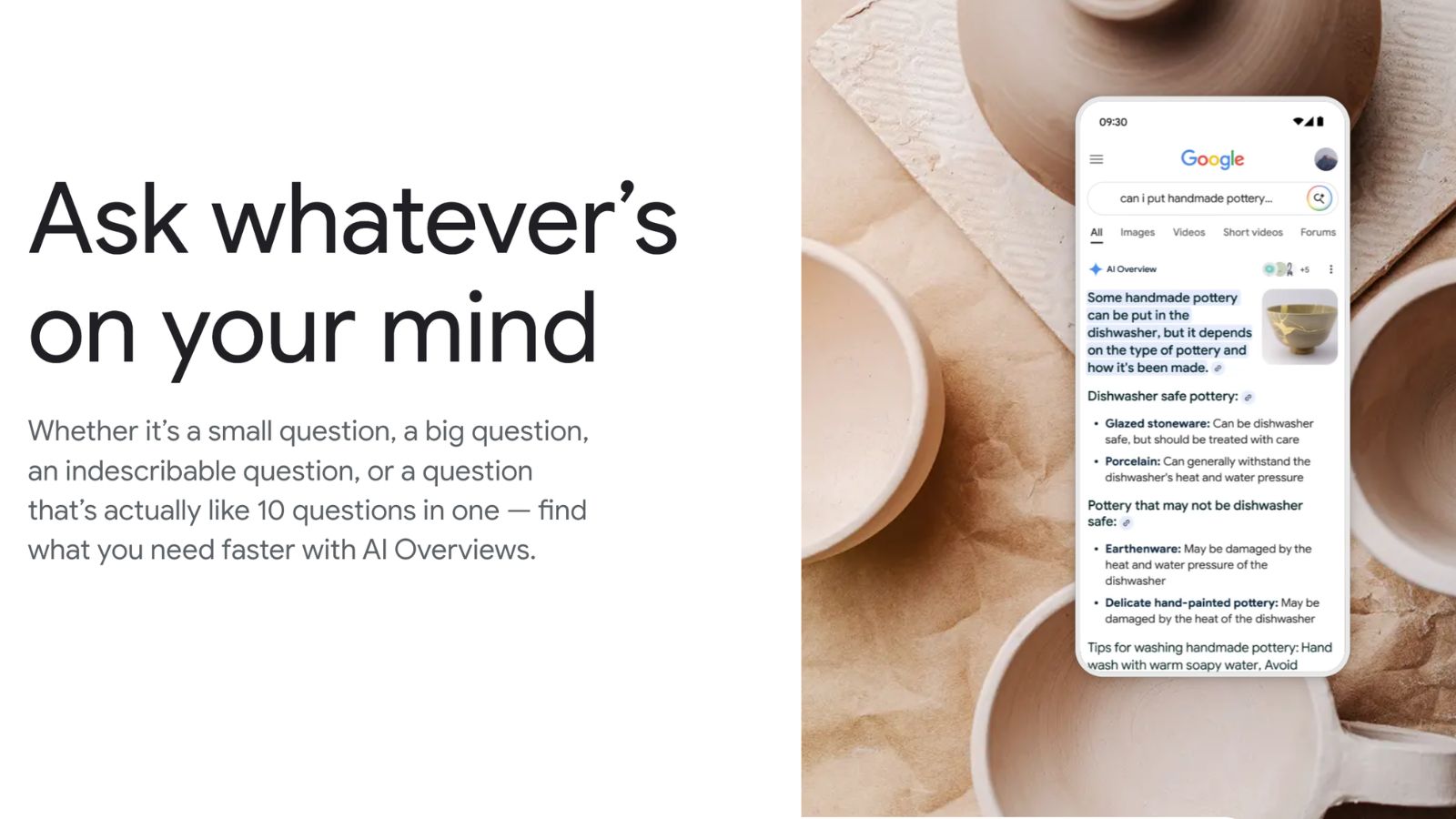

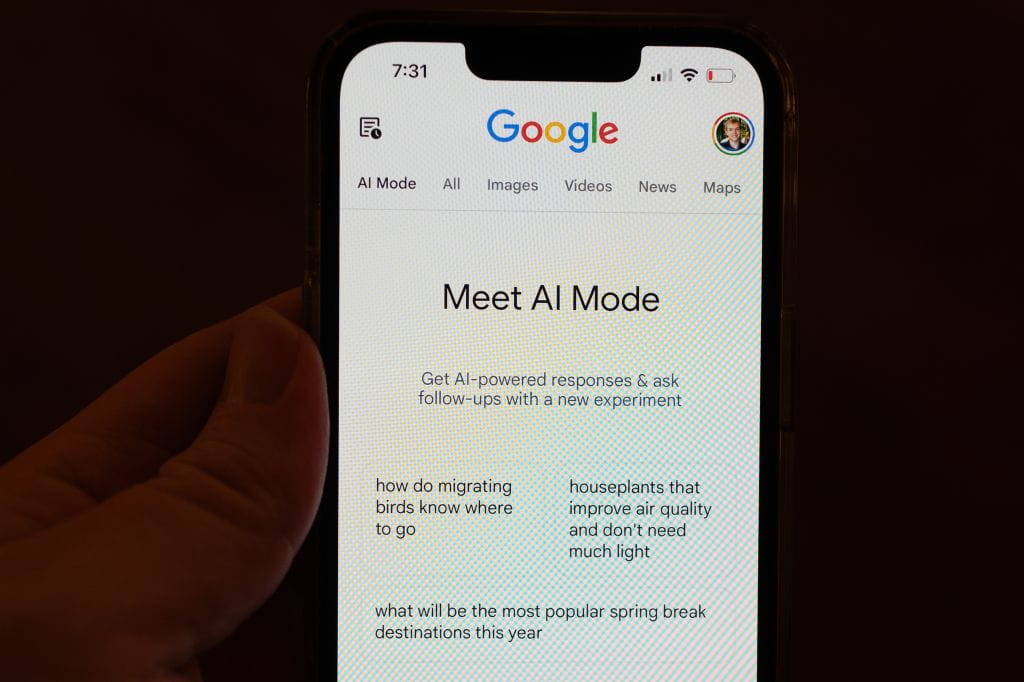

Google's AI Overviews, powered by Gemini models, generate factually incorrect or unsupported answers in about 9-15% of search results, leading to millions of misleading or erroneous responses daily. Studies by The New York Times and Oumi highlight both factual errors and unreliable source citations, raising concerns about large-scale misinformation. [AI generated]